Industrial Benchmarking Mistakes That Skew Performance Data

Industrial Benchmarking can sharpen strategic decisions, but a few overlooked mistakes can distort performance data, inflate confidence, and misguide investment. For enterprise decision-makers navigating precision manufacturing, quality control, and advanced sensing technologies, understanding where benchmarking fails is as critical as knowing what to measure. This article examines the most common errors that compromise comparability, compliance, and actionable insight.

Why benchmarking errors matter differently across business scenarios

Industrial Benchmarking is often treated as a universal management tool, yet its risk profile changes dramatically from one application scenario to another. A sourcing team comparing optical sensors for a new production line is not facing the same consequences as a quality director validating a non-contact inspection system for a regulated environment. In one case, a skewed benchmark may cause overspending. In another, it may trigger compliance gaps, false pass rates, customer claims, or delayed ramp-up.

For enterprise decision-makers, the real issue is not whether benchmarking should be used, but whether the benchmark reflects the operating reality of the intended use case. In advanced manufacturing, semiconductor packaging, electronics test, aerospace metrology, and environmental monitoring, performance data can look strong on paper while failing under actual process conditions. This is where many Industrial Benchmarking programs go wrong: they prioritize headline metrics over scenario fit, lab performance over operational repeatability, and vendor narratives over traceable evidence.

Organizations such as G-IMS are valuable in this context because they frame measurement systems through standards, use conditions, and cross-technology comparability. For leaders managing precision investments, the question should always be: benchmarked against what, under which conditions, for which operational objective, and with what decision consequence?

Typical scenarios where Industrial Benchmarking is used—and where mistakes begin

Industrial Benchmarking commonly appears in five enterprise scenarios. Each one has a different definition of “good performance,” which is why a single benchmark model rarely works across all decisions.

The table shows why Industrial Benchmarking should never be reduced to a vendor scorecard. A benchmark must align with the operational context, the governing standards, and the decision window. If these are not defined upfront, performance data becomes directionally interesting but strategically dangerous.

Scenario 1: Procurement comparisons that reward the best brochure, not the best fit

In procurement, one of the most frequent Industrial Benchmarking mistakes is comparing systems across inconsistent baselines. Decision-makers may review resolution, speed, throughput, detection accuracy, or frequency range without checking whether the systems were tested with identical parts, sample counts, ambient conditions, software versions, operator skill levels, or calibration status.

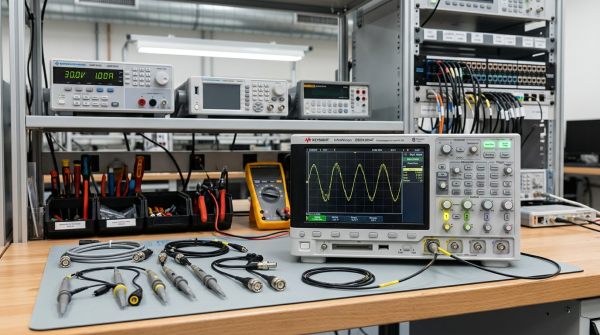

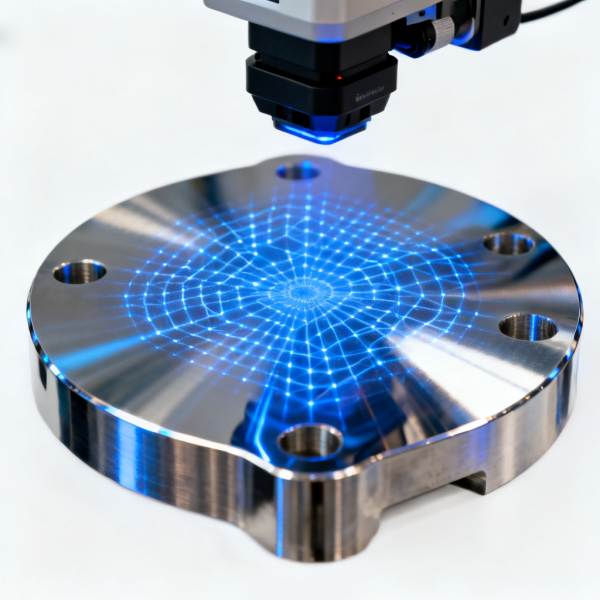

This is especially common in metrology, industrial optics, electrical test, and machine vision procurement. For example, a 3D scanning system may demonstrate excellent throughput in a clean demo environment but slow down significantly on reflective surfaces or complex geometries. A spectrum analyzer may look superior in peak specifications yet underperform in the actual signal environment relevant to 6G-ready validation or high-frequency manufacturing diagnostics.

The practical fix is to benchmark against the use case, not the category. Build a test protocol around real parts, defect types, pass/fail criteria, uptime expectations, and operator constraints. Enterprise buyers should also separate core capability from deployment readiness. A technically strong system with weak service support, limited traceability documentation, or poor software integration can still be the wrong strategic choice.
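One way to make the baseline check concrete is to record the test conditions for each candidate system and flag any field that differs before comparing results. The sketch below is illustrative only: the `RunConditions` fields, the vendor names, and the values are assumptions chosen for the example, not a prescribed protocol.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class RunConditions:
    """Conditions under which one candidate system was tested (hypothetical fields)."""
    part_set: str              # which physical parts were measured
    sample_count: int
    ambient_temp_c: float
    calibration_current: bool  # was the system within its calibration interval?


def baseline_mismatches(runs):
    """Return {condition: {system: value}} for every condition that differs."""
    mismatches = {}
    for f in fields(RunConditions):
        by_system = {name: getattr(cond, f.name) for name, cond in runs.items()}
        if len(set(by_system.values())) > 1:
            mismatches[f.name] = by_system
    return mismatches


# Illustrative data: vendor A was tested on demo parts, vendor B on production parts.
runs = {
    "vendor_a": RunConditions("demo_parts", 30, 21.0, True),
    "vendor_b": RunConditions("production_parts", 30, 21.0, True),
}
print(baseline_mismatches(runs))
```

An empty result does not prove the comparison is fair, but a non-empty one is a clear signal that headline figures should not yet be placed side by side.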

Scenario 2: Quality and process teams that benchmark averages while ignoring variation

For quality directors and process engineers, the most damaging benchmarking error is overreliance on average performance. In many precision environments, mean accuracy is less important than repeatability, reproducibility, drift behavior, and outlier stability. A system that performs well on average but produces inconsistent edge-case results can undermine a zero-defect objective.

This matters in applications such as AI-driven CMM inspection, automated vision inspection, electrical integrity testing, and specialized sensor validation. If a benchmark report presents only average deviation, average cycle time, or average pass rate, it may conceal instability under temperature shifts, operator changes, part variation, or production speed fluctuations. Industrial Benchmarking that excludes statistical dispersion often creates false confidence during scale-up.

Decision-makers should insist on scenario-based performance ranges: best case, nominal case, and stressed case. Ask for GR&R studies, uncertainty budgets, false positive and false negative rates, recalibration intervals, and drift curves over time. In regulated or high-value production, variation is not a side note; it is often the benchmark that matters most.
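To illustrate why averages alone can mislead, the sketch below compares two hypothetical systems with the same mean deviation but very different spread, and computes false positive and false negative rates from paired ground truth and inspection calls. The readings are invented for the example, and this is a simple dispersion summary, not a full GR&R study or uncertainty budget.

```python
import statistics


def summarize(deviations):
    """Mean plus simple dispersion measures for repeated measurements."""
    return {
        "mean": statistics.mean(deviations),
        "stdev": statistics.stdev(deviations),  # sample standard deviation
        "spread": max(deviations) - min(deviations),
    }


def error_rates(is_defective, was_rejected):
    """False positive and false negative rates from paired truth and system calls."""
    pairs = list(zip(is_defective, was_rejected))
    good = sum(1 for d, _ in pairs if not d)
    bad = sum(1 for d, _ in pairs if d)
    false_pos = sum(1 for d, r in pairs if not d and r)
    false_neg = sum(1 for d, r in pairs if d and not r)
    return {
        "false_positive_rate": false_pos / good if good else None,
        "false_negative_rate": false_neg / bad if bad else None,
    }


# Invented readings: both systems average the same deviation.
system_a = [0.9, 1.0, 1.1, 1.0, 1.0]
system_b = [0.2, 1.8, 1.0, 0.4, 1.6]
print(summarize(system_a))
print(summarize(system_b))
```

A report that showed only the `mean` entry would rank these two systems as equivalent; the `stdev` and `spread` entries are what reveal the difference.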

Scenario 3: Cross-site benchmarking that ignores operational maturity differences

Many global organizations use Industrial Benchmarking to compare plants, labs, or suppliers across regions. The goal is usually sound: identify best-performing sites, standardize methods, and improve network-wide productivity. The mistake begins when leaders assume the same KPI means the same thing everywhere.

A site with strong measurement discipline, ISO/IEC 17025-aligned calibration routines, stable environmental controls, and trained operators will naturally produce more reliable data than a site using the same nominal equipment without equivalent controls. Comparing their outputs directly may rank one site as “better” when the real difference is process maturity, not intrinsic capability.

This problem is common in multinational quality systems, centralized procurement reviews, and operational excellence programs. Benchmarking throughput, defect escape rates, or equipment utilization without normalizing for measurement protocol, maintenance discipline, and local product mix can skew strategic allocation decisions. In practice, leaders should compare sites in tiers: same product family, same tolerance class, same calibration model, and similar staffing maturity. Otherwise, Industrial Benchmarking becomes a misleading scoreboard rather than a management instrument.
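The tiered comparison described above can be sketched as a grouping step: sites are ranked only against others that share the same product family, tolerance class, and calibration model. The `SiteResult` fields, the metric, and the example values are assumptions for illustration; staffing maturity is left out because it is harder to encode as a simple key.

```python
from collections import defaultdict
from typing import NamedTuple


class SiteResult(NamedTuple):
    """One site's KPI plus the attributes that define its comparison tier (hypothetical)."""
    site: str
    product_family: str
    tolerance_class: str
    calibration_model: str
    defect_escape_ppm: float  # lower is better


def rank_within_tiers(results):
    """Group sites by tier, then sort each tier from best to worst."""
    tiers = defaultdict(list)
    for r in results:
        key = (r.product_family, r.tolerance_class, r.calibration_model)
        tiers[key].append(r)
    return {
        key: sorted(members, key=lambda r: r.defect_escape_ppm)
        for key, members in tiers.items()
    }


# Invented data: plant_3 has the lowest number but belongs to a different tier.
results = [
    SiteResult("plant_1", "connectors", "tight", "accredited_lab", 40.0),
    SiteResult("plant_2", "connectors", "tight", "accredited_lab", 25.0),
    SiteResult("plant_3", "housings", "standard", "in_house", 10.0),
]
for tier, members in rank_within_tiers(results).items():
    print(tier, [m.site for m in members])
```

A flat ranking would put `plant_3` first, even though it makes a different product to a looser tolerance under a different calibration model.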

Scenario 4: Compliance-sensitive environments where technical performance is mistaken for traceable evidence

Another common mistake appears in compliance-heavy scenarios such as aerospace manufacturing, advanced electronics, safety-critical supply chains, and environmental monitoring. Here, organizations may benchmark instruments based on strong technical performance while overlooking documentation integrity, traceability chains, standard alignment, and audit defensibility.

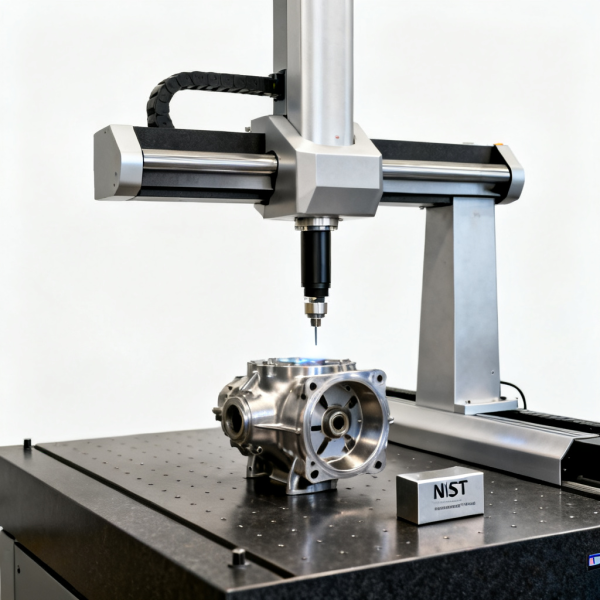

A vision inspection platform may detect micro-defects extremely well, yet if its decision logic is not documented, calibration history is incomplete, or uncertainty statements are weak, it may fail the governance test. Likewise, a trace-gas analyzer may show excellent detection sensitivity, but if benchmark comparisons ignore sample handling consistency or NIST-linked reference methods, the data may not be suitable for regulatory decisions.

In these scenarios, Industrial Benchmarking must include a compliance layer. Decision-makers should assess whether the benchmark supports internal quality systems, customer audits, and external regulatory expectations. Performance without traceability is useful for experimentation, but dangerous for formal acceptance.
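A compliance layer can be pictured as a second gate that sits alongside the performance result. The following is a minimal sketch under assumed criteria: the `Evidence` fields and the three status labels are hypothetical and would need to be mapped to an organization's own quality system and audit requirements.

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    """Performance outcome plus traceability evidence for one system (hypothetical fields)."""
    meets_performance_target: bool
    calibration_history_complete: bool
    decision_logic_documented: bool
    uncertainty_statement_present: bool
    reference_method_traceable: bool


def acceptance_status(e):
    """Classify a benchmark result by performance and by traceability evidence."""
    if not e.meets_performance_target:
        return "not acceptable"
    traceable = all([
        e.calibration_history_complete,
        e.decision_logic_documented,
        e.uncertainty_statement_present,
        e.reference_method_traceable,
    ])
    # Strong performance without traceability stays at the experimental stage.
    return "formal acceptance candidate" if traceable else "experimental use only"


strong_but_undocumented = Evidence(True, False, True, True, True)
print(acceptance_status(strong_but_undocumented))
```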

The most frequent benchmarking mistakes by decision role

Because benchmarking decisions are cross-functional, the same data can be misread for different reasons. A role-based view helps prevent blind spots.

How to adapt Industrial Benchmarking to different operational needs

A robust benchmark begins by defining the decision context. Are you trying to reduce inspection escapes, compare suppliers, justify capital expenditure, standardize multi-site methods, or prepare for a compliance audit? Each scenario requires different evidence. A one-size-fits-all benchmark package usually produces shallow conclusions.

A practical approach is to structure Industrial Benchmarking around four filters. First, define the application scenario in business terms: ramp-up, qualification, procurement, audit readiness, or process optimization. Second, define the measurement reality: part types, defect classes, tolerances, environmental conditions, and operator model. Third, define the comparability rules: same standards, same sample logic, same pass/fail thresholds, same reporting format. Fourth, define the decision output: approval, shortlisting, process change, supplier escalation, or capital release.
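The four filters can be captured in a small plan record that is checked before any data is collected. This is only a sketch: the `BenchmarkPlan` class and its field contents are illustrative assumptions, and a real plan would carry far more detail.

```python
from dataclasses import dataclass, field, fields


@dataclass
class BenchmarkPlan:
    """The four filters, each of which must be filled in before testing starts."""
    application_scenario: str = ""                            # e.g. procurement, audit readiness
    measurement_reality: dict = field(default_factory=dict)   # parts, defects, tolerances
    comparability_rules: dict = field(default_factory=dict)   # standards, thresholds, format
    decision_output: str = ""                                 # e.g. shortlisting, capital release

    def undefined_filters(self):
        """Names of filters that are still empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Invented example: the comparability rules have not been agreed yet.
plan = BenchmarkPlan(
    application_scenario="procurement",
    measurement_reality={"part_types": ["reflective housing"], "tolerance_um": 5},
    decision_output="shortlisting",
)
print(plan.undefined_filters())
```

Treating a non-empty result as a reason to delay data collection keeps the scenario definition ahead of the measurements rather than behind them.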

For organizations evaluating advanced metrology, photonic sensors, high-frequency measurement platforms, or specialized environmental sensors, this discipline prevents two expensive mistakes at once: buying the wrong system and rejecting the right one for the wrong reasons.

Common signs your benchmark is already skewed

Enterprise teams should pause when any of the following signs appear during Industrial Benchmarking: vendor data cannot be reproduced internally; performance claims rely on undefined sample conditions; reports highlight best runs instead of full distributions; results from different sites use different calibration references; compliance language is vague; or stakeholders disagree on what success means. These are not minor documentation issues. They usually indicate that the benchmark is not decision-grade.
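One of these signs, a report that highlights best runs rather than the full distribution, can be checked with a simple calculation once internal reproduction runs exist. The sketch below assumes a higher-is-better metric; the claimed figure and the run values are invented for illustration.

```python
import statistics


def fraction_meeting_claim(claimed, internal_runs):
    """Share of internal runs that matched or exceeded the claimed figure."""
    return sum(1 for r in internal_runs if r >= claimed) / len(internal_runs)


# Invented data: claimed throughput (parts per hour) versus ten internal runs.
claimed = 120
internal_runs = [95, 100, 102, 98, 121, 99, 101, 97, 100, 96]

print("median of internal runs:", statistics.median(internal_runs))
print("fraction meeting claim:", fraction_meeting_claim(claimed, internal_runs))
```

When only a small fraction of internal runs reach the claimed figure, the claim most likely describes a best case rather than typical performance, and the median is the safer planning number.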

Another warning sign is when benchmark outcomes feel impressive but do not change confidence at the operational level. If a quality team still cannot predict false calls, if a plant team still does not trust cycle times, or if procurement still cannot estimate lifecycle risk, then the benchmark has measured activity without creating actionable insight.

FAQ: practical questions enterprise teams ask about Industrial Benchmarking

When is Industrial Benchmarking most reliable?

It is most reliable when the scenario, test conditions, standards basis, and decision objective are defined before data collection starts. Reliability improves when results include both average performance and variation under realistic operating conditions.

What should procurement teams verify first?

Verify whether all compared systems were tested on an equivalent basis. Then review lifecycle cost, calibration burden, software integration, service responsiveness, and documentation quality—not just the purchase price or peak specification.

Why do high-performing systems still fail in production?

Because lab benchmarks may not capture production noise, operator variability, environmental changes, product mix complexity, or workflow constraints. Industrial Benchmarking must reflect the environment where the system will actually create value.

A better decision path for enterprise benchmarking programs

The strongest Industrial Benchmarking programs are not the ones with the most data, but the ones with the clearest scenario logic. For enterprise decision-makers, the priority is to move from generic comparison to decision-fit comparison. That means defining the business scenario, identifying the operational stress points, normalizing evidence, and validating traceability before conclusions are escalated.

If your organization is comparing metrology platforms, optical sensors, electrical test systems, vision inspection tools, or environmental monitoring instruments, use benchmarking as a disciplined filter rather than a marketing scoreboard. The more precise the production environment, the more damaging small benchmarking mistakes become. Align benchmark design with the intended scenario, and performance data becomes not only comparable, but strategically usable.
