When Electrical Test Equipment Starts Skewing Results

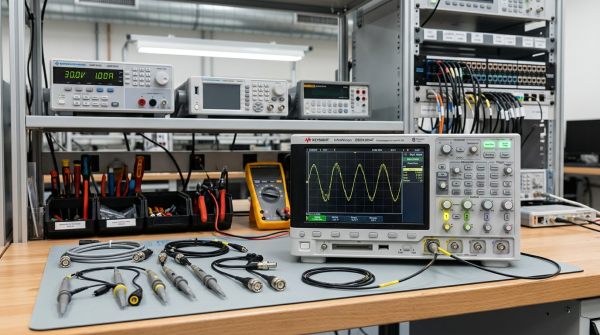

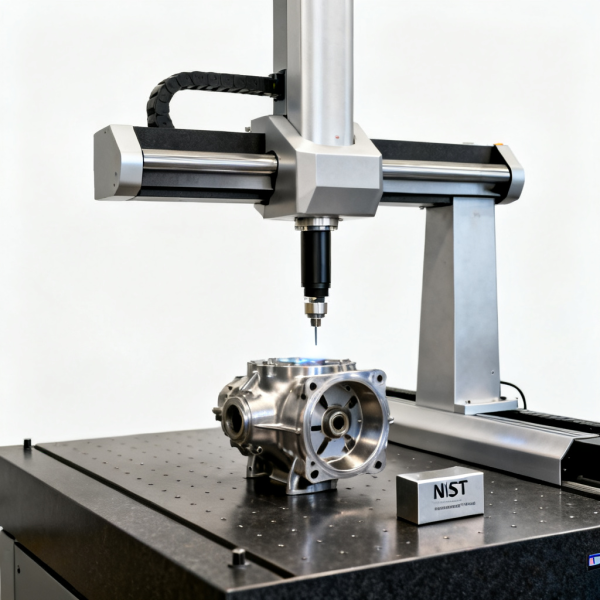

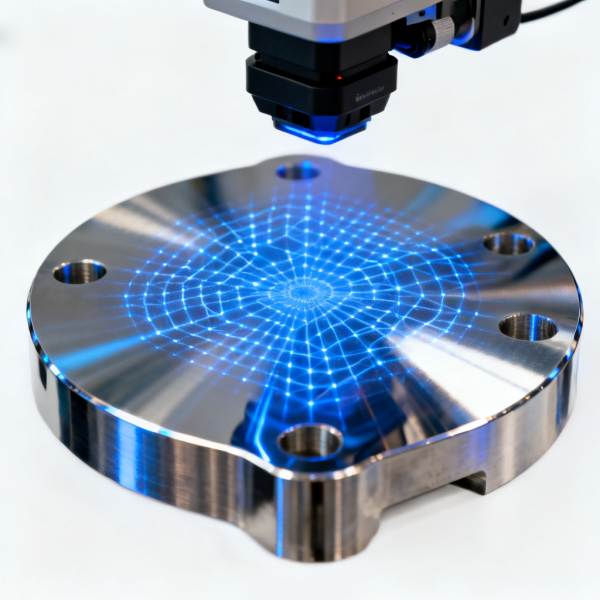

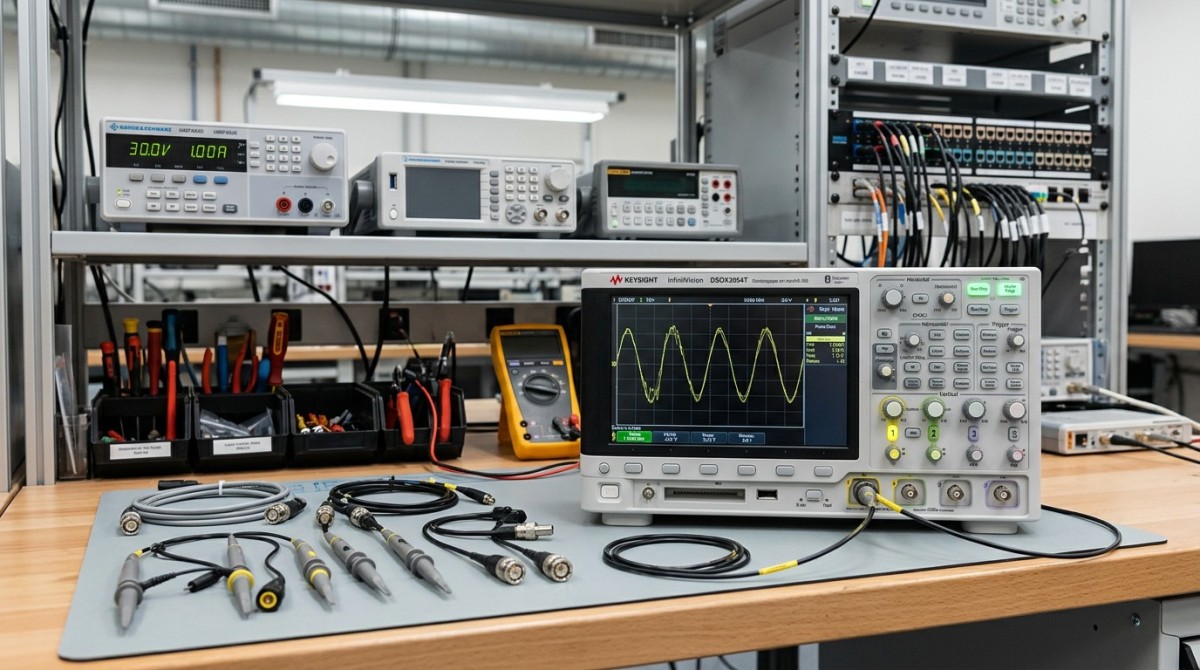

When electrical test equipment begins skewing results, the risk extends far beyond a single failed reading: it can distort quality decisions, delay production, and undermine compliance. For operators and research-driven buyers alike, restoring trust in measurement depends on understanding how calibration, NIST-traceable standards, and related technologies such as spectrum analyzers for RF testing, advanced metrology, and automated vision inspection systems work together.

Why do electrical test results drift even when the device still powers on?

A test instrument can appear stable, pass a basic self-check, and still produce skewed results. In production, service labs, and R&D benches, this usually happens when the measurement chain has changed in small but cumulative ways. Temperature drift, worn probes, connector oxidation, fixture variability, firmware changes, grounding issues, and overdue calibration can all shift the final reading without causing an obvious fault alarm.

For operators, the first pain point is practical: a board passes on Line A and fails on Line B, or a spectrum trace changes between shifts. For information researchers and procurement teams, the problem is broader. If the instrument baseline is uncertain, every downstream decision becomes weaker, from root-cause analysis to supplier acceptance, incoming inspection, and release-to-ship judgment.

In mixed industrial environments, skewed results are rarely caused by one factor alone. A common pattern is three layers of deviation: the instrument itself, the test setup, and the surrounding environment. When these layers interact over 6–12 months of routine operation, the combined deviation often becomes visible only after reject rates rise, customer returns increase, or a compliance audit requests traceable evidence.

This is why G-IMS frames electrical test not as an isolated hardware issue, but as part of a larger intelligent-measurement system. Electrical Test & High-Frequency Measurement must be evaluated together with Advanced Metrology, Industrial Optics, and Non-Contact Vision Inspection Systems, especially where dimensional accuracy, RF behavior, and visual defect correlation affect the same product family.

Typical signs that electrical test equipment is skewing results

- Repeated measurements on the same DUT vary beyond the internal tolerance band, even though the operator follows the same setup steps.

- Golden samples no longer cluster around the expected reference point after 2–3 consecutive runs.

- Readings drift when measurements begin before the recommended 15–30 minute warm-up for sensitive RF and precision instruments has elapsed.

- Pass/fail disagreement increases between electrical tests and vision inspection or dimensional metrology results.

These symptoms matter because skewed results do not only raise scrap or rework. They can also mask true defects. A false pass in aerospace electronics, semiconductor packaging, power modules, or high-frequency assemblies may create a risk that is more costly than a false fail. For this reason, buyers should treat traceability, calibration scope, and setup integrity as purchase criteria, not as after-sales details.
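The first two symptoms can be screened with a simple golden-sample check. The sketch below is only illustrative: the function name, the reference value, and the tolerance are assumptions chosen for the example, not figures from any specific instrument or procedure.

```python
from statistics import mean, stdev

def check_golden_sample(readings, reference, tolerance):
    """Screen repeated golden-sample readings for offset and spread.

    readings  : repeated measurements of the same golden sample
    reference : expected value for that sample (assumed known)
    tolerance : half-width of the internal tolerance band, same units
    """
    if len(readings) < 2:
        raise ValueError("need at least two readings")
    offset = mean(readings) - reference      # systematic shift from the reference
    spread = max(readings) - min(readings)   # run-to-run variation
    return {
        "offset": offset,
        "spread": spread,
        "std_dev": stdev(readings),
        "offset_ok": abs(offset) <= tolerance,
        "spread_ok": spread <= 2 * tolerance,
    }

# Three consecutive runs on a hypothetical 5.000 V golden sample.
result = check_golden_sample([5.012, 5.015, 5.011], reference=5.000, tolerance=0.010)
print(result["offset_ok"], result["spread_ok"])  # False True
```

In this example the readings repeat well but sit consistently above the reference, which points toward an offset in the measurement chain rather than random noise.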

What should operators and buyers check first?

The fastest way to isolate skewed electrical test results is to separate the problem into a controlled checklist. Start with the simplest variables before assuming that the instrument requires immediate replacement. In many facilities, 4 checkpoints identify the main issue: calibration status, accessories and fixturing, environmental stability, and data correlation with another trusted method.

NIST-traceable calibration standards are especially important when teams need traceable reference points. A calibration certificate alone is not enough if the documented scope does not match the frequency range, voltage range, uncertainty requirement, or connector condition used in production. The key question is not “Was it calibrated?” but “Was it calibrated for the measurement that matters here?”
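One way to make the scope question concrete is to compare the ranges listed on the certificate with the ranges the line actually uses. The following is a minimal sketch; the `Range` class, the `scope_gaps` helper, and all numeric values are hypothetical and would need to be replaced with data from a real certificate and test plan.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Range:
    low: float
    high: float

    def covers(self, other: "Range") -> bool:
        """True when this range fully contains the other range."""
        return self.low <= other.low and other.high <= self.high

def scope_gaps(calibrated: dict, required: dict) -> list:
    """Return the required quantities not fully covered by the certificate."""
    gaps = []
    for name, needed in required.items():
        cal = calibrated.get(name)
        if cal is None or not cal.covers(needed):
            gaps.append(name)
    return gaps

# Hypothetical certificate scope versus what production needs.
calibrated = {"frequency_hz": Range(9e3, 3e9), "voltage_v": Range(0.0, 10.0)}
required = {"frequency_hz": Range(1e9, 6e9), "voltage_v": Range(0.5, 5.0)}
print(scope_gaps(calibrated, required))  # ['frequency_hz']
```

Here the instrument carries a valid certificate, yet the upper part of the production test band falls outside the calibrated range, so results in that band are not backed by the certificate.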

In RF workflows, spectrum analyzers require even tighter discipline. Cable loss, adapter stack-up, attenuator wear, and reference level settings can affect readings across broad frequency spans. In many production scenarios, the instrument is only one part of the uncertainty budget; the full setup from probe tip to fixture interface must be reviewed every quarter or after any line reconfiguration.
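A rough uncertainty budget shows why the setup can matter as much as the analyzer. The sketch below assumes the contributions are uncorrelated standard uncertainties expressed in dB and combines them by root-sum-square, which is a common simplification rather than a full uncertainty analysis. All names and numbers are illustrative assumptions.

```python
import math

def combined_uncertainty_db(contributions):
    """Root-sum-square of standard uncertainties in dB (assumed uncorrelated)."""
    return math.sqrt(sum(u ** 2 for u in contributions.values()))

def corrected_level_dbm(reading_dbm, path_losses_db):
    """Add back known path losses between the DUT and the analyzer input."""
    return reading_dbm + sum(path_losses_db)

# Hypothetical budget: the analyzer is not the largest contributor.
budget = {"analyzer": 0.2, "cable": 0.3, "adapter_stack": 0.6}
u_c = combined_uncertainty_db(budget)
print(f"combined: {u_c:.2f} dB, expanded (k=2): {2 * u_c:.2f} dB")

# Hypothetical reading of -12.0 dBm through 1.5 dB cable and 0.2 dB adapter loss.
level = corrected_level_dbm(-12.0, [1.5, 0.2])
print(f"level at DUT: {level:.1f} dBm")
```

In this made-up budget the adapter stack dominates, so replacing the analyzer alone would leave most of the uncertainty in place.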

G-IMS supports this evaluation by benchmarking instruments and measurement workflows against internationally recognized frameworks such as ISO/IEC 17025, IEEE practices, and NIST traceability logic. That multidisciplinary approach is valuable when the issue may cross domains, such as an electrical anomaly caused by connector geometry, thermal expansion, or assembly variation visible only under optical or vision-based inspection.

A practical first-pass troubleshooting sequence

- Verify calibration date, uncertainty statement, and whether the calibration range covers your actual test band or operating point.

- Inspect consumables and interfaces, including probes, test leads, connectors, fixtures, torque consistency, and shielding condition.

- Stabilize the environment for 30–60 minutes where needed, especially if temperature, humidity, vibration, or EMI conditions changed recently.

- Cross-check with a second method such as a known reference instrument, advanced metrology benchmark, or automated vision inspection correlation.

If one checkpoint resolves the discrepancy, the issue may be procedural. If all 4 checkpoints fail to explain the deviation, the next step is usually a deeper uncertainty review and controlled comparison test. This is where technical benchmarking becomes more valuable than generic service advice, because the goal is not only to repair the reading, but to restore confidence in the process window.
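The decision logic of this sequence can be sketched in a few lines. The checkpoint names and the wording of the outcomes below are assumptions made for illustration; the point is only that every checkpoint must be run before the result is escalated.

```python
CHECKPOINTS = ("calibration", "accessories", "environment", "cross_check")

def triage(findings):
    """Classify a suspect result from the four first-pass checkpoints.

    findings maps each checkpoint name to True when that check found a problem.
    """
    missing = [c for c in CHECKPOINTS if c not in findings]
    if missing:
        raise ValueError(f"checkpoints not yet run: {missing}")
    causes = [c for c in CHECKPOINTS if findings[c]]
    if causes:
        return "likely procedural or setup issue: " + ", ".join(causes)
    return "unexplained: escalate to uncertainty review and controlled comparison"

print(triage({"calibration": False, "accessories": True,
              "environment": False, "cross_check": False}))
print(triage({c: False for c in CHECKPOINTS}))
```

Raising an error for a skipped checkpoint is a deliberate choice in this sketch: it prevents a result from being escalated, or an instrument from being replaced, before the simple variables have been ruled out.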

The table below helps operators and procurement teams classify the likely source of skewed electrical test results before they decide on recalibration, accessory replacement, or a broader system upgrade.

This classification prevents a common B2B mistake: replacing the instrument first and investigating the process later. In many cases, the root cause sits in the interface layer, not in the main unit. A structured check can save 1–2 procurement cycles and reduce unnecessary downtime.

How calibration, NIST traceability, and cross-domain measurement work together

Calibration is not a one-time administrative task. It is the basis for deciding whether today’s result is comparable to last quarter’s result, whether one production site can trust another site’s data, and whether an external audit trail will stand up to technical review. In electrical test, the strongest calibration practice connects instrument performance, uncertainty, and use-case relevance.

NIST traceability matters because it creates a recognized reference chain. However, traceability is valuable only when the measurement process remains controlled after calibration. If adapters are changed, fixtures are rebuilt, or test software updates alter averaging and sampling behavior, the traceable chain may still exist on paper while the effective test result has shifted in practice.

This is where G-IMS offers an advantage for industrial buyers. The organization’s five-pillar structure allows teams to compare electrical data with adjacent evidence. For example, a frequency-domain anomaly seen on a spectrum analyzer during RF testing may align with a solder geometry issue found through 3D metrology, or with contamination detected by optical inspection. That cross-domain view is often missing from single-vendor troubleshooting.

A useful operating model is to review calibration and verification across 3 levels: annual or semiannual accredited calibration, monthly or quarterly in-house verification with reference artifacts, and per-shift setup checks for high-risk lines. The exact cycle depends on use intensity, environment, and product criticality, but the layered structure is broadly applicable across precision manufacturing and industrial electronics.
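The three-level model maps naturally onto a small schedule check. The intervals below (365 days, 90 days, and an 8-hour shift) are assumptions picked from within the ranges mentioned above, and the dates are invented for the example.

```python
from datetime import datetime, timedelta

# Assumed intervals for the three verification levels.
INTERVALS = {
    "accredited_calibration": timedelta(days=365),
    "in_house_verification": timedelta(days=90),
    "setup_check": timedelta(hours=8),
}

def overdue_checks(last_done, now):
    """Return the verification levels whose interval has elapsed."""
    return [name for name, interval in INTERVALS.items()
            if now - last_done[name] > interval]

now = datetime(2024, 6, 1, 14, 0)
last_done = {
    "accredited_calibration": datetime(2023, 9, 15),
    "in_house_verification": datetime(2024, 2, 1),
    "setup_check": datetime(2024, 6, 1, 7, 0),
}
print(overdue_checks(last_done, now))  # ['in_house_verification']
```

In this scenario the calibration sticker is still valid, but the in-house verification layer has lapsed, which is exactly the kind of gap a sticker alone does not reveal.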

Key standards and control points buyers should understand

Before issuing an RF or electrical test purchase decision, many teams ask for a specification sheet and service quote. That is not enough. They also need to see how the supplier or benchmark source treats calibration traceability, uncertainty statements, environmental recommendations, and reference procedures. The table below summarizes the most relevant control points.

For procurement teams, this table clarifies a critical issue: compliance language and real-world measurement control are not the same thing. A credible solution should connect both. That is especially important when the instrument will support supplier qualification, regulated output, or zero-defect initiatives.

Where cross-domain verification pays off

If electrical test data is unstable, cross-domain verification can shorten diagnosis time by 2–4 weeks. A dimensional mismatch detected in a connector shell, a coplanarity issue identified by 3D scanning, or surface contamination seen in non-contact vision inspection may explain the electrical deviation faster than repeated instrument adjustment alone.

This is why sophisticated buyers increasingly look beyond a single instrument category. They want a measurement strategy, not just a replacement unit. G-IMS is positioned for that requirement because its benchmarking repository spans metrology, optics, RF, machine vision, and environmental sensing rather than treating each problem in isolation.

How to choose the right response: recalibrate, repair, upgrade, or redesign the workflow?

Not every skewed result justifies the same response. Some issues can be corrected with a controlled recalibration cycle in 7–15 days. Others point to recurring setup errors that require fixture redesign, operator retraining, or software lockout. And in high-frequency or high-throughput environments, an aging platform may no longer provide the stability, bandwidth, or data integration required for current production targets.

A good procurement decision starts by ranking 3 dimensions: criticality of the product being tested, frequency of the anomaly, and cost of a wrong decision. If the line supports safety-relevant electronics, aerospace modules, or advanced semiconductor nodes, the tolerance for uncertain results is much lower than in a basic maintenance environment. In these cases, temporary workarounds often create hidden risk.
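A simple way to rank open issues along these three dimensions is to score each from 1 to 5 and multiply the scores. This scoring scheme, the function name, and the example issues are assumptions for illustration only; teams would need to define their own scales.

```python
def priority(criticality, frequency, wrong_decision_cost):
    """Multiply three 1-5 scores into a single priority value (1-125)."""
    for score in (criticality, frequency, wrong_decision_cost):
        if not 1 <= score <= 5:
            raise ValueError("each score must be between 1 and 5")
    return criticality * frequency * wrong_decision_cost

# Hypothetical open issues: (criticality, frequency, cost of a wrong decision).
issues = {
    "rf_line_trace_shift": (5, 3, 5),
    "bench_dmm_offset": (2, 4, 2),
    "incoming_inspection_leakage": (4, 2, 4),
}
ranked = sorted(issues, key=lambda name: priority(*issues[name]), reverse=True)
for name in ranked:
    print(name, priority(*issues[name]))
```

Multiplying rather than adding the scores means an issue that is frequent but low in criticality does not outrank a rarer anomaly on a safety-relevant line.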

Another important factor is interoperability. A modern electrical test solution should work with data logging, manufacturing execution systems, and adjacent inspection tools. If the current setup cannot correlate electrical results with visual or metrology data, the cost of diagnosis remains high even after recalibration. The total cost is therefore not only hardware price, but also investigation time, line interruption, and requalification effort.

For buyers comparing options, the most reliable path is often a benchmark-led review. Rather than choosing by brochure headline, compare uncertainty relevance, application range, verification workflow, support depth, and integration fit. G-IMS helps teams make that comparison with a technical and regulatory lens instead of a purely commercial one.

Decision guide for common response paths

The matrix below is useful when internal teams must decide whether to correct the current electrical test setup or move toward a broader upgrade. It combines operational urgency with procurement logic.

The key takeaway is simple: the cheapest short-term action is not always the lowest-cost decision. If a line loses several days each month to suspect data, or if audit preparation requires manual reconstruction of traceability records, the business case for a better measurement architecture becomes much stronger.

Procurement questions worth asking before committing

- Which parameters truly drive acceptance: voltage, current, frequency response, signal integrity, leakage, or cross-domain correlation?

- What is the expected calibration cycle: 6 months, 12 months, or a usage-based interval tied to production intensity?

- How long is the realistic implementation timeline, including setup validation, operator training, and internal requalification?

- Can the solution support future needs such as broader RF testing, automated reporting, or correlation with machine vision and metrology systems?

These questions prevent specification-driven overbuying and also prevent underbuying. In industrial settings, both errors are expensive: one wastes budget, the other preserves instability.

Common misconceptions, operational risks, and what to do next

One common misconception is that a valid calibration sticker proves valid production data. It does not. Calibration confirms performance under defined conditions and methods; it does not guarantee that today’s operator, fixture, cable set, ambient condition, and software configuration are all still under control. That gap is where many skewed electrical test results begin.

Another misconception is that electrical anomalies should be solved only by electrical teams. In advanced manufacturing, the defect mechanism may be mechanical, optical, thermal, or environmental. A connector with slight geometry variation, a coating issue, or a contamination event can create unstable electrical signatures that no amount of menu adjustment will solve. This is why a multidisciplinary intelligence source matters.

Operationally, the most important next step is to formalize a response path. Define 5 checks for every questionable result: reference sample retest, accessory inspection, environmental confirmation, settings review, and cross-method comparison. When this routine is documented and repeatable, false escalation drops and real failures become visible faster.

For organizations with tight delivery windows, the goal is not only to restore one instrument. It is to create a resilient measurement framework that supports quality, compliance, and procurement decisions over the next 12–24 months. That is especially true if the business is adding RF complexity, tighter tolerances, or higher audit exposure.

FAQ for researchers and operators

How often should electrical test equipment be calibrated?

A common interval is every 6–12 months, but the right cycle depends on use intensity, environmental stress, and the consequence of a wrong result. High-frequency measurement, harsh factory conditions, or critical product acceptance often justify tighter verification layers between formal calibrations.

Are spectrum analyzers enough to confirm the root cause of an RF problem?

Not always. They are essential for observing signal behavior, but RF symptoms can originate from physical defects, assembly variation, or contamination. Pairing RF analysis with advanced metrology and automated vision inspection often shortens troubleshooting and improves confidence.

What should procurement prioritize when replacing a suspect test platform?

Prioritize measurement relevance, calibration traceability, uncertainty clarity, setup repeatability, and integration with your workflow. Purchase price alone is a weak filter if recurring skewed results continue to cause rework, delays, or audit friction.

How long does it take to stabilize a problematic measurement workflow?

A focused troubleshooting and verification effort can often identify the main source within several days, while recalibration or a controlled upgrade may take 1–4 weeks depending on complexity, internal approvals, and whether cross-domain validation is required.

Why choose us for measurement benchmarking and next-step planning?

G-IMS helps industrial buyers and operators move from uncertain readings to actionable decisions. Our value is not limited to one product category. We benchmark across Advanced Metrology & 3D Scanning, Industrial Optics & Photonic Sensors, Electrical Test & High-Frequency Measurement, Non-Contact Vision Inspection Systems, and Environmental Monitoring & Specialized Sensors, so your team can evaluate root causes across the full measurement chain.

If you are dealing with skewed electrical test results, you can consult us on concrete issues: parameter confirmation, calibration scope review, NIST traceability expectations, spectrum analyzer selection for RF testing, comparison of upgrade versus recalibration paths, implementation timing, and cross-domain validation needs. We can also help structure evaluation criteria for internal procurement and quality review.

For teams under delivery pressure, we support decision-making around 3 practical areas: what to verify now, what to replace next, and what to redesign for long-term measurement stability. That includes guidance on typical workflow checkpoints, standard alignment, solution benchmarking, and the relationship between electrical results, geometry, optics, and environmental variables.

Contact us if you need support with product selection, calibration-related requirements, solution comparison, delivery-cycle planning, sample or application discussion, or quotation communication. A well-structured measurement decision today can prevent months of repeated troubleshooting later.
