NIST Standards and Calibration Drift: What Often Gets Missed

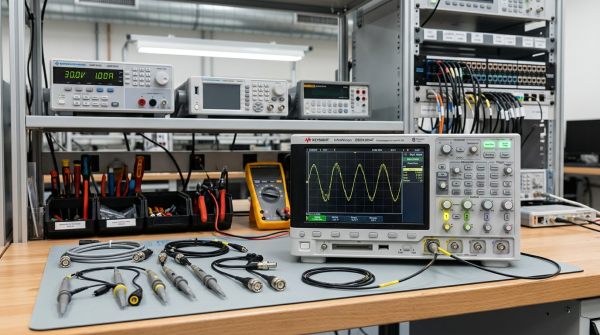

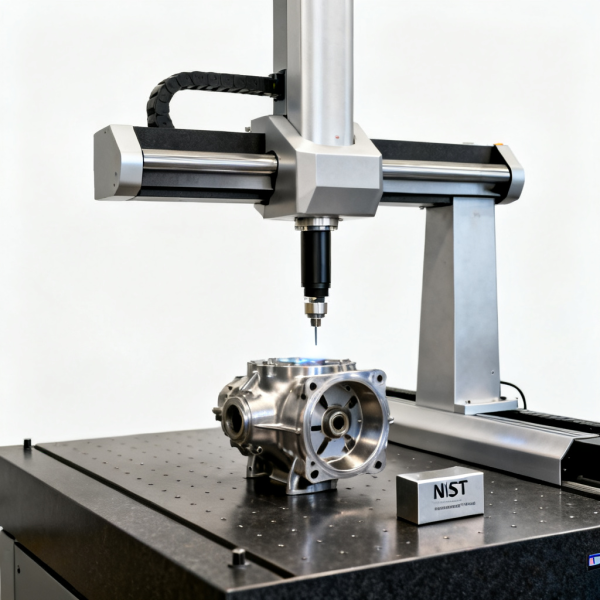

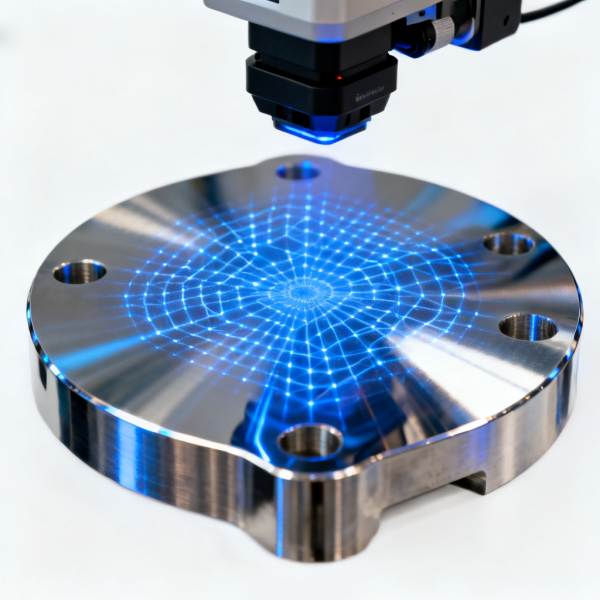

NIST Standards are widely treated as the benchmark for accuracy, yet calibration drift still undermines 3D Scanning, Electrical Test, Spectrum Analyzers, and Coordinate Measuring Machines more often than teams expect. For buyers, engineers, and quality leaders working with Sensory Technology, Industrial Sensors, Hyperspectral Imaging, and Environmental Monitoring systems, the missed issue is rarely calibration itself. It is how drift develops between intervals and escapes routine checks.

In high-precision operations, the question is not whether an instrument was calibrated 6 or 12 months ago. The more important question is whether the device remained stable after thermal cycling, transport shock, operator change, firmware updates, probe replacement, or sustained use across different shifts. A system can still hold a valid certificate and yet produce data that gradually moves outside the process tolerance window.

That gap matters across industries. A subtle frequency reference shift in a spectrum analyzer can distort pass/fail decisions in RF validation. A small axis error in a CMM can affect first article inspection. A drifted optical response in hyperspectral imaging can alter classification accuracy. For procurement teams and technical evaluators, understanding calibration drift is therefore a practical risk-control issue, not a paperwork exercise.

Why NIST Traceability Does Not Eliminate Drift Between Calibration Cycles

NIST traceability establishes a reference chain. It confirms that an instrument was compared against standards linked to recognized measurement references at a specific point in time. What it does not guarantee is unchanged performance for the next 90, 180, or 365 days. In real production and R&D environments, drift accumulates because instruments are physical systems exposed to vibration, aging, contamination, humidity, and handling variability.

This distinction is often missed in purchasing reviews. Teams may compare suppliers based on whether calibration is “NIST-traceable” and stop there. However, two instruments with the same traceability status can have very different drift behavior. One may hold stability within 0.02% over a year, while another may require verification every 30 days to maintain acceptable confidence in the data.

For quality managers, the operational concern is uncertainty growth between formal calibrations. The longer the interval, the more important it becomes to monitor intermediate performance. This is especially true when process tolerances are tight, such as sub-millimeter dimensional inspection, high-frequency signal validation above 6 GHz, or environmental sensing where threshold exceedance triggers compliance action.

A useful rule in industrial metrology is that calibration proves historical conformity, while drift monitoring protects current decisions. When organizations rely only on annual certificates, they may miss 3 to 9 months of degraded performance. That can lead to false rejects, false accepts, rework, delayed product release, and supplier disputes.

Common sources of drift that are underestimated

- Temperature variation of 5°C to 15°C between calibration lab conditions and factory floor conditions.

- Mechanical wear in probes, fixtures, stages, connectors, and cable assemblies after repeated cycles.

- Optical contamination from dust, oil mist, or lens film that alters signal quality in non-contact systems.

- Reference aging in oscillators, light sources, detectors, and gas-sensing elements over 3 to 12 months.

- Software and firmware changes that modify compensation tables, data filtering, or spectral processing logic.
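To put the temperature bullet in perspective, the sketch below applies the standard linear thermal expansion relation, ΔL = α · L · ΔT. The expansion coefficients are approximate handbook values assumed for illustration, and the function name and the 500 mm part length are hypothetical. None of these figures come from a specific instrument or part.

```python
# Approximate linear expansion coefficients in 1/°C.
# These are typical handbook values, assumed here for illustration only.
ALPHA_PER_C = {
    "steel": 11.5e-6,
    "aluminum": 23.0e-6,
}


def thermal_growth_um(length_mm: float, delta_t_c: float, material: str) -> float:
    """Length change in micrometers for a given temperature offset."""
    alpha = ALPHA_PER_C[material]
    return length_mm * alpha * delta_t_c * 1000.0  # convert mm to micrometers


if __name__ == "__main__":
    # Hypothetical 500 mm feature measured 5 °C away from lab conditions.
    for material in ALPHA_PER_C:
        growth = thermal_growth_um(500.0, 5.0, material)
        print(f"{material}: about {growth:.0f} um over 500 mm at +5 C")
```

Under these assumptions, a 5°C offset alone moves a 500 mm feature by roughly 29 µm in steel and 57 µm in aluminum, before any instrument-side drift is considered.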

Traceability versus stability in procurement reviews

Procurement specifications should not stop at “must be calibrated to NIST standards.” They should also define acceptable drift rate, intermediate verification method, environmental operating range, and recommended recalibration frequency. For example, a system used 16 hours per day in a production cell may need a shorter interval than the same model used once per week in a laboratory.
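As a rough illustration of why duty cycle matters, the sketch below converts a usage-based interval into calendar days and caps it at a maximum calendar interval. The 2,000 operating-hour budget, the 365-day cap, and the function name are assumptions chosen for the example, not supplier or standards values.

```python
def recal_interval_days(interval_operating_hours: float,
                        hours_per_day: float,
                        max_calendar_days: float = 365.0) -> float:
    """Calendar days until recalibration under a usage-based rule.

    The usage-based result is capped at max_calendar_days so that lightly
    used instruments still get a periodic calibration.
    """
    if hours_per_day <= 0:
        raise ValueError("hours_per_day must be positive")
    usage_based = interval_operating_hours / hours_per_day
    return min(usage_based, max_calendar_days)


if __name__ == "__main__":
    # Hypothetical budget of 2,000 operating hours between calibrations.
    production = recal_interval_days(2000.0, 16.0)      # 16 h per day
    laboratory = recal_interval_days(2000.0, 4.0 / 7)   # one 4 h session per week
    print(f"production cell: {production:.0f} days")
    print(f"laboratory unit: {laboratory:.0f} days")
```

With these assumed numbers the production unit reaches its usage budget in 125 days, while the laboratory unit is limited only by the calendar cap.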

The table below clarifies the difference between a compliance-oriented view and an operational stability view. This distinction helps technical evaluators build better RFQs and avoid the common mistake of treating calibration status as the only indicator of measurement reliability.

The key takeaway is straightforward: NIST traceability is necessary, but it is not sufficient. Teams that buy high-value measurement systems should assess both the calibration pedigree and the expected stability profile under actual operating conditions.

Where Calibration Drift Shows Up First in Industrial Measurement Systems

Drift does not always appear as a dramatic failure. More often, it shows up first in secondary indicators: increased repeatability scatter, borderline pass/fail decisions, unexplained offsets between sites, or more frequent operator overrides. These early signs are easy to dismiss when teams focus only on whether the instrument still has a valid certificate.

In 3D scanning and coordinate measurement, drift may emerge as gradual scale error, probe qualification instability, axis compensation mismatch, or thermal frame distortion. A deviation of even 20 to 50 micrometers can be significant when tolerances are tight and parts must fit aerospace, semiconductor tooling, or precision medical assemblies. In these cases, the cost of unnoticed drift often exceeds the cost of recalibration.

In electrical test and spectrum analysis, drift can affect amplitude accuracy, frequency reference integrity, noise floor interpretation, and cable-path consistency. RF teams working across 3 GHz, 6 GHz, or higher bands know that connector wear, test port stress, and environmental instability can degrade confidence well before the next annual calibration event. The result may be inconsistent data across labs, vendors, and field service units.
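The effect of a frequency reference shift scales with the carrier frequency, which is why it matters more in higher bands. A minimal sketch of that arithmetic follows. The 0.5 ppm reference error is a hypothetical value, not a specification for any analyzer, and the function name is illustrative.

```python
def frequency_error_hz(carrier_hz: float, reference_error_ppm: float) -> float:
    """Absolute frequency error caused by a fractional reference offset."""
    return carrier_hz * reference_error_ppm * 1e-6


if __name__ == "__main__":
    # Hypothetical 0.5 ppm offset in the instrument's frequency reference.
    for carrier_ghz in (3.0, 6.0):
        error = frequency_error_hz(carrier_ghz * 1e9, 0.5)
        print(f"{carrier_ghz:.0f} GHz carrier: about {error:.0f} Hz readout error")
```

The same fractional offset yields about 1.5 kHz of error at 3 GHz and about 3 kHz at 6 GHz, so a shift that is negligible for one test can matter for another.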

Optical and environmental systems present another challenge. Hyperspectral imaging depends on stable illumination, detector response, and wavelength alignment. Gas analyzers and environmental sensors are sensitive to sensor poisoning, humidity cross-effects, and baseline shift. In these systems, drift often appears as classification errors, false alarms, or threshold bias rather than obvious instrument failure.

Early warning patterns by equipment type

The following comparison is useful for operators, service teams, and project managers building routine verification plans. It identifies where drift tends to show up first and what teams should monitor before formal recalibration becomes necessary.

What matters here is timing. By the time final results are clearly wrong, drift may already have affected dozens, hundreds, or even thousands of records. Early warning checks are therefore less about maintenance burden and more about protecting data credibility.

A practical threshold question

A useful operational screen is whether expected drift could consume more than 20% to 30% of the process tolerance before the next calibration date. If the answer is yes, the measurement plan needs intermediate controls. That principle applies whether the system is dimensional, optical, electrical, or environmental.
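The screen above can be sketched as a small calculation. This version assumes drift is roughly linear in time, which is a simplification, and it uses the lower 20% bound as the default threshold. The function names and the example drift rate are hypothetical.

```python
from datetime import date, timedelta


def tolerance_fraction_consumed(drift_per_day: float, tolerance: float,
                                today: date, next_calibration: date) -> float:
    """Fraction of process tolerance that linear drift would consume
    between today and the next formal calibration."""
    if tolerance <= 0:
        raise ValueError("tolerance must be positive")
    days_remaining = max((next_calibration - today).days, 0)
    return abs(drift_per_day) * days_remaining / tolerance


def needs_intermediate_controls(fraction: float, screen: float = 0.20) -> bool:
    """True when expected drift exceeds the chosen share of tolerance."""
    return fraction > screen


if __name__ == "__main__":
    today = date(2024, 1, 1)
    due = today + timedelta(days=180)
    # Hypothetical: 0.1 um/day drift against a 50 um tolerance window.
    fraction = tolerance_fraction_consumed(0.1, 50.0, today, due)
    print(f"fraction consumed: {fraction:.2f}")
    print("intermediate controls needed:", needs_intermediate_controls(fraction))
```

In this example the expected drift consumes 36% of the tolerance over 180 days, so the plan would call for intermediate checks. The drift rate itself has to come from supplier stability data or from the organization's own trend records.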

What Buyers and Technical Evaluators Should Specify Before Purchase

A strong procurement document does more than request calibration certificates. It defines how the instrument will be used, which drift risks are unacceptable, and what evidence the supplier must provide. This is especially important for enterprise buyers comparing multiple vendors that all claim compliance with recognized standards but differ in long-term stability and service support.

At minimum, buyers should ask for four categories of information: stated accuracy, stability over time, environmental sensitivity, and verification workflow. For example, if a vision or metrology system will operate across 18°C to 28°C, the quotation should explain whether the specification assumes controlled lab conditions or real plant-floor variation. If a spectrum analyzer will be moved between sites, post-shipping verification requirements should be clarified in advance.

Serviceability is equally important. Many drift issues are manageable if the vendor provides transfer artifacts, reference procedures, remote diagnostics, and turnaround guidance. But if recalibration requires overseas shipment and a 3 to 5 week downtime window, the operational risk becomes a supply continuity issue, not only a measurement issue. Project owners should calculate that risk before final selection.

For distributors and system integrators, this is also a margin-protection matter. Equipment with unstable field performance can generate returns, site revisits, and support escalation that erase the value of an attractive purchase price. A lower initial quote is not lower total cost if drift management is weak.

Five specification items that reduce hidden risk

- Define calibration interval and state whether it is fixed, usage-based, or condition-based.

- Request intermediate verification procedures with artifacts, reference devices, or built-in self-check logic.

- Clarify environmental limits, including temperature, humidity, warm-up time, and installation vibration conditions.

- Ask for measurement uncertainty or drift-related stability statements over 3, 6, and 12 months where applicable.

- Confirm service turnaround, spare strategy, and whether loaner units are available during recalibration.

Procurement checklist for high-value measurement assets

The table below can be adapted for RFQs, technical review forms, or vendor scorecards. It is designed for organizations that need to compare equipment not only on published accuracy, but also on lifecycle confidence and operational resilience.

The most effective procurement teams convert these points into acceptance criteria. That means drift control becomes measurable during supplier selection, not something discovered after installation.

How to Build a Drift-Control Program That Works in Daily Operations

A workable drift-control program does not need to be complex, but it does need discipline. The best programs combine scheduled calibration with routine verification, operator training, trend logging, and escalation rules. This layered approach is often more effective than simply shortening the annual calibration interval, because it addresses what happens every day, not only what happens once per year.

For most organizations, implementation can be structured in 5 steps. First, classify instruments by measurement criticality. Second, assign verification frequency based on tolerance sensitivity and usage intensity. Third, use stable references or artifacts for intermediate checks. Fourth, trend the results over time. Fifth, define trigger levels for maintenance, recalibration, or process hold. This model works across metrology, electrical test, optical inspection, and environmental sensing.

A common best practice is to separate instruments into at least three tiers. Tier 1 instruments directly control release or compliance decisions and may need daily or per-shift checks. Tier 2 systems support process tuning and may be reviewed weekly. Tier 3 devices used for general monitoring may only need monthly verification. The exact interval depends on tolerance, environment, and use intensity, but the principle is consistent.
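One way to make the tiering operational is to encode it as a lookup that a scheduling script or MES job can query. The sketch below is a minimal example. The tier names, the 30-day stand-in for "monthly", and the helper function are assumptions, and per-shift checks would need a shorter interval than the daily one shown.

```python
from datetime import datetime, timedelta
from enum import Enum


class Tier(Enum):
    RELEASE_CRITICAL = 1    # controls release or compliance decisions
    PROCESS_SUPPORT = 2     # supports process tuning
    GENERAL_MONITORING = 3  # general monitoring only


# Starting-point intervals; adjust for tolerance, environment, and usage.
CHECK_INTERVAL = {
    Tier.RELEASE_CRITICAL: timedelta(days=1),
    Tier.PROCESS_SUPPORT: timedelta(weeks=1),
    Tier.GENERAL_MONITORING: timedelta(days=30),
}


def is_check_due(tier: Tier, last_check: datetime, now: datetime) -> bool:
    """True when the time since the last verification meets or exceeds
    the interval assigned to the instrument's tier."""
    return now - last_check >= CHECK_INTERVAL[tier]


if __name__ == "__main__":
    last = datetime(2024, 1, 1, 8, 0)
    now = datetime(2024, 1, 5, 8, 0)
    for tier in Tier:
        print(tier.name, "due" if is_check_due(tier, last, now) else "not due")
```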

Data logging is essential. Even a simple spreadsheet or MES-linked trend chart can reveal whether a system is drifting linearly, seasonally, or after specific events such as relocation or maintenance. This allows engineering teams to move from reactive recalibration to predictive control. Over 6 to 12 months, those patterns often show whether the original interval was too long or unnecessarily short.
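For the linear case, a trend can be estimated with an ordinary least-squares line through the logged check results. The sketch below uses only the standard library. The function names and sample readings are hypothetical, and it handles only upward linear drift toward a positive limit. Seasonal or event-driven patterns would need a different model.

```python
def fit_linear_drift(days, errors):
    """Least-squares line through (day, error) points.

    Returns (slope, intercept); slope is in error units per day.
    """
    n = len(days)
    if n < 2:
        raise ValueError("need at least two verification points")
    mean_x = sum(days) / n
    mean_y = sum(errors) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    if sxx == 0:
        raise ValueError("all checks share the same day")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, errors))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def projected_day_at_limit(days, errors, limit):
    """Day number at which the fitted line reaches `limit`, or None when
    the fitted trend is flat or moving away from the limit."""
    slope, intercept = fit_linear_drift(days, errors)
    if slope <= 0:
        return None
    return (limit - intercept) / slope


if __name__ == "__main__":
    # Hypothetical weekly checks against a reference artifact (error in um).
    days = [0, 7, 14, 21, 28]
    errors = [1.0, 1.7, 2.4, 3.1, 3.8]
    slope, _ = fit_linear_drift(days, errors)
    print(f"estimated drift: {slope:.3f} um/day")
    print("projected day at 10 um limit:", projected_day_at_limit(days, errors, 10.0))
```

A projection like this is only as good as the linearity assumption, so it is better used to question an existing interval than to set one automatically.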

A practical 5-step implementation sequence

- Map every instrument to a process impact level: release-critical, process-critical, or reference-only.

- Select a verification artifact or reference with tighter uncertainty than the working instrument.

- Set check intervals such as daily, weekly, or every 100 operating hours.

- Define action limits, for example warning at 50% of allowable error and stop-use at 80% to 100%.

- Review trend data monthly and adjust interval, environment, or maintenance plan accordingly.
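The action limits in the sequence above can be expressed as a simple classifier. This sketch uses the 50% warning level and the lower 80% stop-use bound as defaults. The function name and status labels are illustrative and would be replaced by whatever the quality system already defines.

```python
def classify_check(measured_error: float, allowable_error: float,
                   warn_fraction: float = 0.5,
                   stop_fraction: float = 0.8) -> str:
    """Classify one verification result against warning and stop-use limits.

    The sign of the error is ignored; only its magnitude relative to the
    allowable error matters.
    """
    if allowable_error <= 0:
        raise ValueError("allowable_error must be positive")
    ratio = abs(measured_error) / allowable_error
    if ratio >= stop_fraction:
        return "stop-use"
    if ratio >= warn_fraction:
        return "warning"
    return "ok"


if __name__ == "__main__":
    # Hypothetical check results against a 10 um allowable error.
    for error in (2.0, 5.0, -8.5):
        print(f"{error:+.1f} um -> {classify_check(error, 10.0)}")
```

Keeping the warning band well inside the stop-use limit gives teams time to schedule maintenance or recalibration before measurements have to be put on hold.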

What operators should document every time

- Date, time, shift, and operator identity.

- Ambient temperature and humidity when relevant.

- Reference used and measured result.

- Any recent transport, maintenance, cable replacement, or software update.

- Corrective action taken if the reading exceeded the warning band.
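The documentation items above map naturally onto a fixed record structure, which keeps entries consistent across shifts and makes later trend analysis easier. The sketch below is one possible layout. The field names and the CSV export helper are assumptions, not a prescribed format.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import datetime
from typing import Optional


@dataclass
class VerificationRecord:
    timestamp: datetime                     # date and time of the check
    shift: str
    operator: str
    reference_id: str                       # artifact or reference device used
    measured_result: float
    ambient_temp_c: Optional[float] = None  # when relevant
    humidity_pct: Optional[float] = None    # when relevant
    recent_events: str = ""                 # transport, maintenance, updates
    corrective_action: str = ""             # filled in if warning band exceeded


def records_to_csv(records) -> str:
    """Serialize records to CSV text with one header row."""
    buffer = io.StringIO()
    names = [f.name for f in fields(VerificationRecord)]
    writer = csv.DictWriter(buffer, fieldnames=names)
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()


if __name__ == "__main__":
    # Hypothetical entry; identifiers are placeholders.
    entry = VerificationRecord(
        timestamp=datetime(2024, 1, 15, 6, 30),
        shift="A",
        operator="op-01",
        reference_id="REF-1",
        measured_result=10.002,
        ambient_temp_c=21.4,
        recent_events="probe replaced",
    )
    print(records_to_csv([entry]))
```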

When these controls are in place, calibration drift becomes observable instead of invisible. That improves not only compliance, but also yield protection, service planning, and confidence in cross-site comparisons.

Frequently Missed Questions About NIST Standards and Calibration Drift

Many search and purchasing decisions revolve around a few recurring questions. These questions matter because they reveal where teams often confuse calibration status with operational reliability. The answers below are relevant for technical researchers, quality leads, procurement managers, and distributors evaluating measurement technology portfolios.

Does a current calibration certificate mean the instrument is accurate today?

Not necessarily. A certificate confirms performance at the time of calibration under specified conditions. If the instrument has since experienced transport shock, contamination, component aging, or environmental stress, drift may have developed. That is why intermediate checks are important, especially for systems with high duty cycles or tight tolerance requirements.

How often should intermediate verification be performed?

There is no universal answer, but common ranges are daily for release-critical CMMs, weekly for RF instruments in active use, per-batch for optical imaging systems, and every 30 days for many environmental sensors. A good starting point is to estimate whether expected drift could consume more than 25% of tolerance before the next formal calibration. If yes, the check interval should be shortened.

What is the biggest procurement mistake?

Treating “NIST-traceable calibration” as the end of the technical evaluation. Buyers should also review stability, field verification methods, service turnaround, environmental sensitivity, and support for trend analysis. In many cases, those factors determine actual lifecycle value more than the initial certificate package.

Which teams benefit most from better drift control?

Quality teams gain stronger release confidence. Operators reduce reruns and troubleshooting time. Project managers avoid schedule disruption caused by hidden measurement issues. Procurement leaders make lower-risk sourcing decisions. Distributors and service partners reduce return visits and support costs. In short, drift control creates value across the full measurement chain.

NIST standards remain essential for credible calibration, but the missed issue is what happens after the certificate is issued. In modern industrial environments, measurement trust depends on how well an organization controls drift between intervals, across sites, and under real operating conditions. That is the difference between formal compliance and dependable decision-making.

For organizations comparing 3D scanning, CMM, spectrum analysis, hyperspectral imaging, industrial sensors, or environmental monitoring solutions, the most reliable path is to evaluate traceability, stability, verification workflow, and service readiness together. G-IMS supports that decision process with benchmark-driven technical insight for buyers, engineers, and quality leaders managing high-precision operations.

If you are reviewing new equipment, refining a calibration governance program, or building a vendor short list, now is the right time to ask deeper questions about drift risk. Contact us to discuss your application, request a tailored evaluation framework, or learn more about practical measurement solutions aligned with your operational and compliance goals.
