When 3D Scanning Accuracy Fails on Reflective Surfaces

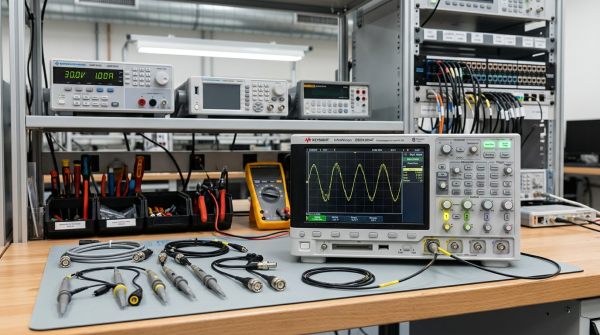

Reflective surfaces are one of the most common reasons a 3D scanning project delivers unstable data, false geometry, or failed inspection results. If you are evaluating scan quality for manufacturing, quality control, reverse engineering, or supplier qualification, the short answer is this: highly reflective parts do not simply make scanning “harder”; they can fundamentally distort how optical systems capture shape. For operators, that means rescans and process delays. For quality teams, it means unreliable dimensional evidence. For buyers and decision-makers, it means higher project risk unless scanner capability, part condition, workflow controls, and verification methods are all assessed together. This article explains why 3D scanning accuracy fails on reflective surfaces, which risks matter most, and how to reduce failure using practical metrology logic aligned with IEEE and NIST standards.

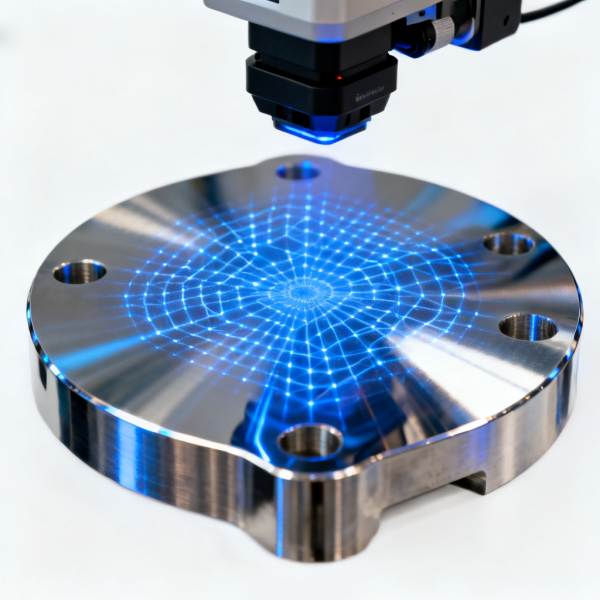

Why reflective surfaces break 3D scanning accuracy in the first place

Most optical 3D scanning systems depend on controlled light behavior. They project light, detect reflected patterns, and convert that information into surface geometry. Reflective materials interfere with that process because they do not return light in a stable, diffuse way. Instead, they can create glare, hot spots, multipath reflections, edge distortion, and missing point-cloud data.

In practical terms, the scanner may “see” light intensity rather than true geometry. That is why polished metal, chrome, glossy plastics, coated components, wafers, glass-like finishes, and mirror-treated parts often produce unreliable scans even when the scanner is marketed as high accuracy.
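One simple way to make the "light intensity rather than geometry" problem visible is to check how much of a captured camera frame is at or near sensor saturation. The sketch below is illustrative only: it assumes an 8-bit grayscale frame represented as nested lists, and the function name `saturation_fraction` and the 98% threshold are hypothetical choices, not part of any scanner's software.

```python
def saturation_fraction(image, max_value=255, threshold=0.98):
    """Return the fraction of pixels at or near sensor saturation.

    image: rows of pixel intensities (assumed 8-bit grayscale).
    threshold: share of max_value above which a pixel counts as
    saturated (a hypothetical default, tune per sensor).
    """
    limit = max_value * threshold
    total = 0
    saturated = 0
    for row in image:
        for pixel in row:
            total += 1
            if pixel >= limit:
                saturated += 1
    return saturated / total if total else 0.0


# Hypothetical frame: a glare patch on an otherwise dim polished part.
frame = [
    [40, 52, 60, 255],
    [45, 255, 254, 255],
    [38, 50, 61, 70],
]

fraction = saturation_fraction(frame)
print(f"saturated fraction: {fraction:.2f}")
```

A high saturated fraction in a region suggests that the points reconstructed there deserve extra scrutiny, since the sensor had little usable pattern contrast to work with.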

The root problem is not only the surface itself. Accuracy failure usually happens because of the interaction between four factors:

- Surface optical behavior: specular reflection either saturates the sensor or directs light away from it

- Scanner architecture: laser triangulation, structured light (including blue-light systems), and photogrammetry-assisted setups each respond differently

- Environmental conditions: ambient light, vibration, angle, temperature, and part fixturing affect capture stability

- Workflow discipline: calibration, exposure settings, data alignment, filtering, and verification determine whether bad data is caught or accepted

For technical evaluators and procurement teams, this means a scanner specification sheet alone is not enough. Stated accuracy under ideal lab conditions does not guarantee usable results on reflective production parts.

What failure actually looks like in real inspection and production workflows

When 3D scanning accuracy degrades on reflective surfaces, the failure is not always obvious at first glance. A color map may still look acceptable. A mesh may still appear complete. But the underlying measurement integrity can be compromised.

Common failure modes include:

- False surface geometry: the scan shows a shape that does not physically exist

- Localized dimensional drift: some areas pass while reflective zones shift outside real tolerance

- Data voids and hole filling errors: software interpolates missing information, creating misleading surfaces

- Edge rounding: sharp features appear smoother or smaller than they are

- Registration instability: multiple scan passes align poorly because reference features are inconsistent

- Repeatability failure: repeated scans of the same part produce materially different results

For quality control teams, the most important question is not whether a scan can be generated, but whether the scan is traceable enough to support a measurement decision. In regulated, high-precision, or customer-audited environments, that distinction matters.

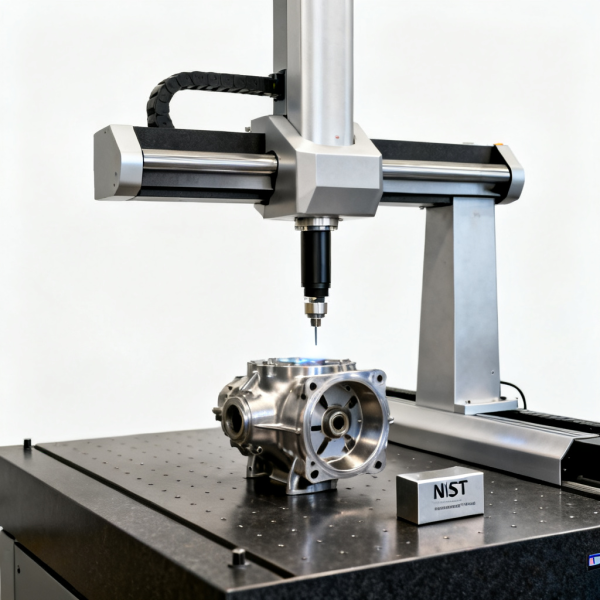

This is especially relevant in industries where non-contact vision inspection systems, coordinate measuring machines, industrial optics, or hyperspectral imaging are already part of the production ecosystem. Once reflective surface effects corrupt upstream geometry capture, downstream analysis can also be compromised.

Which readers should worry most about reflective scan failure

Different stakeholders face different risks, but reflective surface scanning affects nearly every decision layer.

Operators and application engineers are usually the first to see practical symptoms: unstable exposure, missing patches, repeated rescans, and alignment issues. Their concern is speed, usability, and whether a workflow can be stabilized without excessive manual correction.

Quality managers and inspection personnel care about repeatability, measurement system confidence, audit readiness, and whether scan data can stand beside CMM or reference-gauge results.

Technical evaluators want to know whether the scanner’s sensing principle matches the part mix, surface finish range, tolerance demands, and integration requirements.

Procurement teams and business decision-makers are concerned with a broader question: will this equipment reduce risk and improve throughput, or will hidden workflow costs erase the expected ROI?

Distributors and solution providers need to avoid overselling nominal scanner accuracy without clarifying reflective-surface limitations, pre-treatment needs, and verification obligations.

In short, reflective scanning problems are not just operator-level nuisances. They affect capital investment quality, supplier credibility, product acceptance, and production efficiency.

How to judge whether the problem is the part, the scanner, or the process

A useful evaluation framework starts with diagnosis rather than assumptions. Many teams blame the scanner too quickly, while others blame the surface and accept poor outcomes as unavoidable. In reality, you need to isolate the source of error.

Start with these questions:

- Is the part highly polished, mirrored, translucent, dark-glossy, or coated?

- Are errors concentrated in certain viewing angles or across the full part?

- Do repeat scans of the same setup produce the same deviation pattern?

- Was the scanner calibrated according to manufacturer and lab procedure?

- Are exposure, gain, and acquisition parameters optimized for this surface type?

- Is scan data being compared against a trusted reference such as a CMM, artifact, or certified standard?

- Is software filling gaps or smoothing features in ways that hide capture failure?

If the error pattern changes heavily from one scan to another, the issue is often sensing instability. If the error is systematic in specific reflective regions, surface interaction or angle-of-incidence effects are likely dominant. If the raw data is acceptable but final dimensions drift, the issue may sit in alignment, meshing, filtering, or CAD comparison logic.
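The first two cases above can be sketched as a rough classification over repeated scans. This is a minimal illustration, assuming you already have per-region deviations from a trusted reference for several scans taken with the same setup. The region names, the sample values, and both limits are hypothetical, and the third case (acceptable raw data, drifting final dimensions) is not covered because it requires comparing processing stages rather than scans.

```python
from statistics import mean, stdev


def classify_region(deviations_mm, bias_limit_mm=0.02, spread_limit_mm=0.02):
    """Classify one region from repeated scan-to-reference deviations.

    Large scan-to-scan spread points to sensing instability; a small
    spread with a large mean offset points to a systematic effect such
    as surface interaction or angle of incidence. Limits are assumed
    values for illustration only.
    """
    bias = mean(deviations_mm)
    spread = stdev(deviations_mm)  # sample standard deviation
    if spread > spread_limit_mm:
        return "unstable"
    if abs(bias) > bias_limit_mm:
        return "systematic"
    return "stable"


# Hypothetical deviations (mm) from four repeat scans per region.
regions = {
    "matte_flange": [0.004, -0.003, 0.005, 0.001],
    "polished_bore": [0.061, 0.058, 0.063, 0.060],
    "chrome_edge": [0.010, -0.085, 0.072, -0.030],
}

for name, deviations in regions.items():
    print(name, classify_region(deviations))
```

Checking spread before bias is a deliberate choice in this sketch: when repeat scans disagree strongly, the mean offset is not a trustworthy estimate of anything.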

This is why advanced metrology teams often validate optical scan results against a secondary reference path. The goal is not to reject optical systems, but to identify where their reliable operating window begins and ends.

What practical fixes work best on reflective surfaces

The best mitigation strategy depends on whether the application prioritizes speed, non-contact purity, dimensional traceability, or cosmetic surface preservation.

Common and effective countermeasures include:

- Temporary matting spray: one of the most effective ways to reduce specular reflection, though it may be unsuitable for sensitive or contamination-controlled parts

- Optimized scanning angle: changing the angle between projector, sensor, and surface can reduce direct glare

- Exposure and sensor tuning: correct settings can improve point capture, but tuning alone rarely solves severe mirror-like reflection

- Reference targets or photogrammetry support: improves registration stability on difficult parts

- Hybrid inspection strategy: use 3D scanning for global form and CMM or touch-probe validation for critical features

- Controlled lighting environment: reduce ambient interference and stabilize capture conditions

- Part segmentation: scan difficult zones separately and merge under controlled alignment logic

- Sensor selection by application: some laser-based or specialized optical systems perform better than general-purpose structured-light systems on reflective materials

However, no mitigation should be treated as a universal fix. For example, anti-reflective spray may improve capture dramatically, but it can also alter ultra-tight dimensions if coating thickness is not controlled. In semiconductor, aerospace, medical, or high-frequency electrical components, contamination or surface modification may not be acceptable at all.

That is why process qualification matters more than anecdotal success. A solution is only valid if the resulting data proves repeatable and sufficiently accurate for the intended tolerance band.
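To make the coating-thickness concern concrete, the sketch below estimates how much of a tolerance band a matting spray could consume on an external dimension. It assumes a uniform layer on both opposing surfaces, which real spray application may not achieve, and the thickness and tolerance values are hypothetical examples rather than measured data.

```python
def coating_share_of_tolerance(coating_thickness_mm, tolerance_plus_minus_mm,
                               coated_sides=2):
    """Return the fraction of the total tolerance band taken up by coating.

    Assumes a uniform coating; an external dimension measured across two
    coated surfaces appears larger by coated_sides * thickness.
    """
    apparent_growth_mm = coated_sides * coating_thickness_mm
    tolerance_band_mm = 2 * tolerance_plus_minus_mm
    return apparent_growth_mm / tolerance_band_mm


# Hypothetical case: 5 micrometre layer, feature toleranced at +/- 0.025 mm.
share = coating_share_of_tolerance(0.005, 0.025)
print(f"coating consumes {share:.0%} of the tolerance band")
```

If the share is significant for a given feature, the coating either needs to be characterized and compensated, or that feature should be verified by another method.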

How buyers and technical evaluators should assess scanner claims

If you are comparing vendors or preparing a capital purchase, reflective-surface performance should be tested explicitly, not assumed from brochure accuracy numbers.

A stronger evaluation process includes:

- Testing on your actual parts: not only on vendor demo artifacts

- Including multiple finishes: matte, brushed, polished, coated, and mixed-material surfaces

- Checking repeatability: run multiple scans under the same conditions

- Comparing to a trusted reference: CMM, calibrated gauge, or certified artifact

- Reviewing workflow burden: setup time, spray requirement, operator skill dependency, and post-processing effort

- Assessing uncertainty fit: can the system support your actual tolerance stack, not just nominal geometry capture?

For enterprise decision-makers, the key question is not “Which scanner is most accurate?” but “Which measurement workflow is robust enough for our reflective part mix, compliance needs, throughput targets, and quality risk profile?”

This distinction is crucial. A scanner that performs well on diffuse surfaces but requires heavy manual intervention on reflective parts may not be the best business choice, even if its advertised accuracy is impressive.
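The repeatability, reference-comparison, and uncertainty-fit checks listed above can be summarized numerically during a vendor trial. The sketch below is one possible way to do that, not a prescribed method: it assumes repeated scan measurements of a single feature plus a reference value from a CMM or certified artifact, and it reports a precision-to-tolerance style ratio taken here as six standard deviations over the tolerance band. The sample values are hypothetical.

```python
from statistics import mean, stdev


def evaluate_feature(scan_values_mm, reference_mm, tolerance_plus_minus_mm):
    """Summarize bias, repeatability, and a precision-to-tolerance ratio.

    bias: mean scan value minus the reference value.
    repeatability: sample standard deviation of the repeat scans.
    pt_ratio: 6 * repeatability divided by the total tolerance band
    (an assumed convention for this sketch).
    """
    bias = mean(scan_values_mm) - reference_mm
    repeatability = stdev(scan_values_mm)
    tolerance_band = 2 * tolerance_plus_minus_mm
    return {
        "bias_mm": bias,
        "repeatability_mm": repeatability,
        "pt_ratio": 6 * repeatability / tolerance_band,
    }


# Hypothetical trial: five repeat scans of a polished diameter,
# CMM reference 25.000 mm, drawing tolerance +/- 0.05 mm.
scans = [25.012, 25.015, 25.010, 25.014, 25.013]
result = evaluate_feature(scans, reference_mm=25.000, tolerance_plus_minus_mm=0.05)

for key, value in result.items():
    print(f"{key}: {value:.4f}")
```

Running the same summary on matte, brushed, and polished versions of a part makes it easier to see whether a scanner's advertised accuracy survives contact with your actual surface finishes.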

Why standards-based verification matters more than marketing language

In industrial measurement, reflective-surface performance should be discussed in the context of verification, repeatability, and traceability. This is where standards thinking becomes valuable.

Alignment with recognized frameworks such as IEEE standards, NIST standards, and relevant calibration and laboratory practices helps organizations move from subjective scanner impressions to defensible measurement decisions. While the exact applicable standard depends on the device class and application, the governing logic is consistent:

- Define the measurement task clearly

- Verify the system under representative conditions

- Quantify repeatability and bias where possible

- Use traceable references for comparison

- Document limitations, not just best-case performance

For high-consequence sectors, this approach reduces the risk of accepting distorted scan data into engineering change, supplier approval, or final quality release workflows.

Final takeaway: reflective surfaces do not just reduce scan quality, they change the measurement risk

When 3D scanning accuracy fails on reflective surfaces, the issue is rarely a minor image-quality inconvenience. It is a measurement risk problem that can affect inspection decisions, project timelines, equipment ROI, and product quality confidence. The most effective response is not to rely on generic scanner claims, but to evaluate the full measurement chain: surface behavior, sensor technology, environmental control, operator method, software processing, and reference-based verification.

For operators, the practical lesson is to diagnose the failure mode before rescanning blindly. For quality and engineering teams, the priority is repeatable validation rather than visually convincing meshes. For procurement and enterprise leaders, the smartest investment decision is to choose a workflow that remains reliable on real reflective parts, not only in ideal demonstrations.

In precision manufacturing and industrial sensing environments, trustworthy geometry is earned through controlled method, not assumed from device specification. That is the standard reflective-surface scanning should be judged against.
