Surface Roughness Ra/Rz Benchmarks: When the Numbers Mislead

Surface roughness Ra/Rz benchmarks are useful, but they are not self-explanatory pass/fail truths. In quality control, many costly mistakes happen when a surface is approved because the Ra value looks acceptable, or rejected because Rz appears high, without checking how the value was measured, what the part must actually do, and which standard defines the requirement. For quality and safety managers, the real issue is not whether Ra or Rz matters. It is whether the benchmark being used is functionally relevant, consistently measured, and defensible in audits, supplier disputes, and failure investigations.

Readers who look up surface roughness Ra/Rz benchmarks usually have a practical goal: reliable guidance on how to interpret these numbers, where common benchmark tables fail, and how to avoid wrong decisions in inspection and supplier quality management. They are not looking for another basic definition list. They need a decision framework.

For this audience, the biggest concerns are clear. First, how do you know whether an Ra or Rz requirement actually protects product performance? Second, when different instruments, cut-off settings, or sampling lengths produce different values, which number should be trusted? Third, how can procurement, quality, and production teams avoid specification language that looks precise but creates ambiguity, scrap, or compliance risk?

This article focuses on those issues. It explains why surface roughness Ra/Rz benchmarks can mislead, where they are still useful, what quality teams should verify before accepting a number, and how to build more robust specifications for production control, safety-critical parts, and supplier alignment.

Why surface roughness benchmarks often fail in real quality decisions

Benchmark charts are attractive because they reduce a complex surface into an easy comparison. A team can say that ground parts are usually around a certain Ra range, turned parts around another, and polished parts lower still. That simplicity supports quick quoting, process planning, and incoming inspection. The problem starts when those reference values are treated as universal indicators of quality.

Ra and Rz are summary parameters, not full descriptions of a surface. Ra is the arithmetic mean of the absolute height deviations from the mean line over the evaluation length. Rz describes peak-to-valley height: under ISO 4287:1997 it is the largest peak height plus the largest valley depth within a sampling length, normally averaged over the sampling lengths in the evaluation length (five by default), although older and regional standards define it differently. Neither parameter alone tells you whether the surface has isolated scratches, directional lay problems, torn material, waviness, plateau effects, porosity, or periodic tool marks that may strongly affect sealing, fatigue, friction, wear, coating adhesion, or cleanability.
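
As a rough illustration of how the two parameters reduce a trace to a single number, here is a minimal Python sketch. It assumes the profile has already been filtered and is given as a list of heights in micrometres; the function names, the five-segment default, and the sample values are illustrative assumptions, not any instrument's actual algorithm.

```python
from statistics import mean


def ra(profile):
    """Arithmetic mean of absolute height deviations from the mean line."""
    center = mean(profile)
    return mean(abs(z - center) for z in profile)


def rz(profile, n_sampling_lengths=5):
    """Mean of the maximum peak-to-valley height in each sampling length."""
    seg_len = len(profile) // n_sampling_lengths
    if seg_len < 2:
        raise ValueError("profile too short for the requested sampling lengths")
    heights = []
    for i in range(n_sampling_lengths):
        segment = profile[i * seg_len:(i + 1) * seg_len]
        heights.append(max(segment) - min(segment))
    return mean(heights)


# Hypothetical ten-point trace in micrometres (two points per sampling length).
trace = [1.0, -1.0, 2.0, -2.0, 1.0, -1.0, 3.0, -3.0, 1.0, -1.0]
ra_value = ra(trace)   # 1.6
rz_value = rz(trace)   # 3.2
```

Both results come from the same ten heights, yet neither records where the 3 µm excursion sits or what shape it has, which is the information loss described above.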

A part can meet a low Ra target and still fail in service. A hydraulic sealing face may have acceptable average roughness but contain deep valleys that become leak paths. A bearing raceway may show a compliant Ra while retaining chatter marks that drive vibration and premature wear. A medical or food-contact component may pass a roughness limit yet still contain topographic features that complicate cleaning or contamination control.

Quality teams also see the reverse problem. A part may exceed a stated Rz limit and still perform perfectly well because the requirement was copied from a legacy print, borrowed from another industry, or set without regard to function. In those cases, the benchmark does not improve control. It creates unnecessary rework, supplier friction, and misleading nonconformance data.

The lesson is straightforward: surface roughness Ra/Rz benchmarks are only meaningful when tied to function, process capability, and a clearly defined measurement method.

What Ra and Rz actually tell you, and what they hide

Ra is widely used because it is simple and stable enough for routine production control. It works reasonably well when the main goal is to classify overall finish level and monitor process drift. For repetitive machining operations, Ra can be a useful trend metric. If the process has historically produced acceptable performance within a certain Ra band, that benchmark may support day-to-day control.

But Ra is also easy to misuse. Because it is an averaging parameter, it can smooth over local extremes. Two surfaces with the same Ra can behave very differently in contact, lubrication, optical reflection, or sealing. One may have evenly distributed fine texture; the other may have occasional deep defects masked by otherwise smooth regions.
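
The masking effect can be shown with two synthetic profiles. The sketch below is an assumption-laden toy rather than measured data: one profile has uniform fine texture, the other is a smooth plateau with one deep valley in each sampling length, and both are built to have the same Ra.

```python
from statistics import mean


def ra(profile):
    """Arithmetic mean of absolute deviations from the mean line."""
    center = mean(profile)
    return mean(abs(z - center) for z in profile)


def rz(profile, n_sampling_lengths=5):
    """Mean peak-to-valley height over equal sampling lengths."""
    seg = len(profile) // n_sampling_lengths
    return mean(
        max(profile[i * seg:(i + 1) * seg]) - min(profile[i * seg:(i + 1) * seg])
        for i in range(n_sampling_lengths)
    )


# Surface A: evenly distributed texture alternating +1 / -1 µm (50 points).
uniform = [1.0, -1.0] * 25

# Surface B: nine plateau points and one deep valley per sampling length.
# h is chosen so that Ra works out to 1.0 µm, matching surface A.
h = 1.0 / 1.8
plateau_with_valleys = ([h] * 9 + [-9 * h]) * 5

ra_a, rz_a = ra(uniform), rz(uniform)                            # about 1.0 and 2.0
ra_b, rz_b = ra(plateau_with_valleys), rz(plateau_with_valleys)  # about 1.0 and 5.56
```

An Ra-only requirement would treat the two surfaces as equivalent, even though the valleys in the second are almost three times deeper relative to its peaks.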

Rz is often chosen to capture extremes better, especially where peak-to-valley behavior matters. In practice, Rz can be more sensitive to scratches, tears, or aggressive machining marks than Ra. That can make it more valuable for applications where isolated topographic defects matter. However, that same sensitivity also means Rz is more vulnerable to variation from measurement setup, filtering choices, and local sampling location.

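Another source of confusion is that not all organizations use exactly the same roughness definitions in the same way. Legacy drawings, customer-specific standards, and regional conventions can create hidden inconsistencies. For example, Rz on an older drawing may mean a ten-point height (the average of the five highest peaks and five deepest valleys), as in earlier ISO and JIS editions, while later editions define it from the maximum peak-to-valley height in each sampling length. If a supplier measures using one standard interpretation and the customer audits using another, both may report a legitimate number yet still disagree.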

For quality and safety managers, the practical takeaway is that neither Ra nor Rz should be treated as a complete quality verdict. They are indicators. Their usefulness depends on whether the chosen indicator matches the failure mode being controlled.

When the numbers mislead most: common failure scenarios in inspection and supplier control

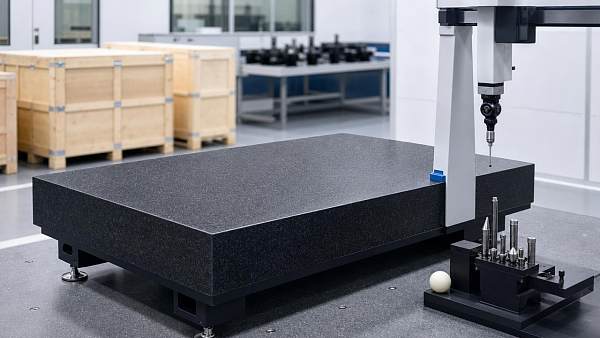

The first high-risk scenario is using benchmark tables without measurement context. Roughness values depend on stylus tip geometry, cut-off length, filter type, sampling length, evaluation length, measurement direction, and whether the surface has been cleaned properly. Change any of those variables and the reported Ra or Rz may shift enough to change a pass/fail result.
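
To make the cut-off dependence concrete, the following sketch filters one synthetic trace with two different cut-off lengths and compares the resulting Ra. It uses a Gaussian weighting function of the kind used for profile filtering (constant α = sqrt(ln 2 / π)), with simplified edge handling. The trace, the 0.25 mm and 2.5 mm cut-offs, the sample spacing, and all function names are assumptions chosen for illustration.

```python
import math

ALPHA = math.sqrt(math.log(2) / math.pi)  # about 0.4697


def gaussian_mean_line(profile, spacing_mm, cutoff_mm):
    """Low-pass the profile with a Gaussian weighting function (mean line)."""
    half = int(round(cutoff_mm / spacing_mm))  # truncate the kernel at +/- cutoff
    offsets = range(-half, half + 1)
    weights = [
        math.exp(-math.pi * ((k * spacing_mm) / (ALPHA * cutoff_mm)) ** 2)
        for k in offsets
    ]
    n = len(profile)
    line = []
    for i in range(n):
        acc = 0.0
        wsum = 0.0
        for k, w in zip(offsets, weights):
            j = i + k
            if 0 <= j < n:
                acc += w * profile[j]
                wsum += w
        line.append(acc / wsum)  # renormalise near the ends (simplified)
    return line


def ra_after_cutoff(profile, spacing_mm, cutoff_mm):
    """Ra of the roughness profile left after removing the mean line."""
    line = gaussian_mean_line(profile, spacing_mm, cutoff_mm)
    return sum(abs(z - m) for z, m in zip(profile, line)) / len(profile)


# Synthetic 8 mm trace: 0.5 µm texture at 0.1 mm wavelength
# riding on 3 µm waviness at 1.5 mm wavelength.
spacing = 0.01  # mm between samples
xs = [i * spacing for i in range(801)]
trace = [
    0.5 * math.sin(2 * math.pi * x / 0.1) + 3.0 * math.sin(2 * math.pi * x / 1.5)
    for x in xs
]

ra_short = ra_after_cutoff(trace, spacing, 0.25)  # waviness mostly removed
ra_long = ra_after_cutoff(trace, spacing, 2.5)    # waviness mostly retained
```

With the longer cut-off, much of the waviness is counted as roughness, so the same physical surface reports a noticeably higher Ra. A drawing that states a limit without a cut-off leaves that choice, and therefore the result, open.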

The second scenario is copying benchmark limits from process expectations rather than functional requirements. Teams often assume, for example, that “milled surfaces should be around X” or “sealing faces must be below Y.” Those assumptions may be directionally useful, but they are not substitutes for validated requirements. A process-based benchmark may be too loose for a fatigue-critical part or too tight for a cosmetic bracket.

The third scenario is using one number to control multiple surface functions. A component may need low friction, good oil retention, and strong coating adhesion at the same time. A single Ra or Rz requirement rarely captures all three. This is especially true for plateau-honed, textured, coated, additive-manufactured, or multi-step finished surfaces.

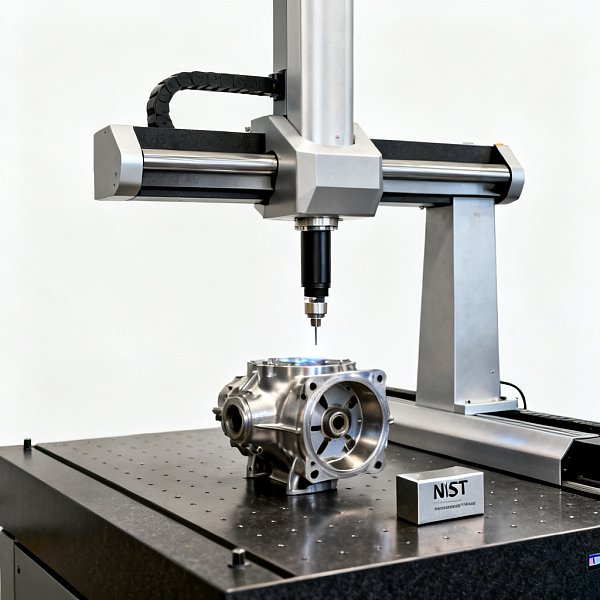

The fourth scenario involves supplier comparison. One supplier may report favorable roughness values because measurements are taken along the lay, while another measures across it. One may exclude damaged zones near edges; another may include them. One may inspect a representative central area; another may inadvertently choose a smoother location. Without a common measurement protocol, the benchmark becomes a source of commercial dispute rather than objective control.

The fifth scenario appears during root-cause analysis. After a field failure, teams often review inspection records and conclude that the roughness requirement was met, therefore the surface was not the cause. That conclusion can be false if the chosen parameter failed to capture the critical topography. A passed Ra does not eliminate the possibility of problematic peaks, valleys, waviness, lay orientation, or localized defects.

How quality managers should evaluate a roughness requirement before trusting it

A strong roughness requirement starts with one question: what failure mode is this requirement meant to prevent? If the answer is unclear, the benchmark is probably weak. A sealing surface, sliding surface, bonded surface, fatigue-sensitive edge zone, and hygienic contact surface all interact with topography differently. The metric should reflect that difference.

The next question is whether Ra, Rz, or another parameter is the right control variable. For broad process monitoring, Ra may be adequate. For defect-sensitive applications, Rz may provide better warning. In some cases, neither is enough and additional parameters, profile traces, areal measurements, waviness limits, or visual defect criteria are needed.

Third, confirm whether the drawing or quality plan fully defines the measurement method. At minimum, teams should verify the applicable standard, cut-off length, evaluation length, filtering approach, measurement direction relative to lay, number of traces, acceptance rule, and measurement location. If those items are not specified, benchmark values can appear precise while remaining operationally ambiguous.
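
One way to make that verification routine is to record the callout as structured data and list whatever is still undefined. The sketch below is a hypothetical record, not a standard schema; the field names simply mirror the items listed above, and the example values are invented.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class RoughnessCallout:
    """Hypothetical record of a roughness requirement and its method."""
    parameter: Optional[str] = None            # e.g. "Ra" or "Rz"
    limit_um: Optional[float] = None
    standard: Optional[str] = None
    cutoff_mm: Optional[float] = None
    evaluation_length_mm: Optional[float] = None
    filter_type: Optional[str] = None
    direction_vs_lay: Optional[str] = None
    number_of_traces: Optional[int] = None
    acceptance_rule: Optional[str] = None
    location: Optional[str] = None


def missing_items(callout):
    """Return the names of method items the callout leaves undefined."""
    return [f.name for f in fields(callout) if getattr(callout, f.name) is None]


# A drawing note that gives only a parameter and a limit (illustrative values).
bare_callout = RoughnessCallout(parameter="Ra", limit_um=0.8)
gaps = missing_items(bare_callout)
```

Here `gaps` lists eight undefined items, which is a quick way to show how much of a seemingly precise requirement is left to each lab's discretion.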

Fourth, check whether the requirement is statistically realistic for the process. A roughness limit that is technically possible but not capable at normal production variation will generate chronic instability. That does not protect quality. It simply converts process noise into repeated nonconformances. Capability studies, gauge repeatability and reproducibility reviews, and cross-lab correlation are essential before locking in strict supplier requirements.
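
A quick capability check for an upper roughness limit can be sketched as follows. The readings and the 0.8 µm limit are invented for illustration, and the index assumes roughly normal data, which roughness readings do not always follow, so treat the result as a screening figure rather than a verdict.

```python
from statistics import mean, stdev


def upper_capability(values, upper_limit):
    """One-sided capability index: (USL - mean) / (3 * sample std dev)."""
    return (upper_limit - mean(values)) / (3 * stdev(values))


# Hypothetical Ra readings in micrometres from ten consecutive parts.
ra_readings = [0.62, 0.70, 0.66, 0.74, 0.68, 0.72, 0.64, 0.69, 0.71, 0.65]
cpu = upper_capability(ra_readings, upper_limit=0.8)
```

For these numbers the index comes out a little above 1.0, short of the 1.33 that many quality plans use as a minimum. Every reading passes the limit, yet the process would be expected to generate occasional nonconformances at normal variation.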

Fifth, ask whether the requirement supports auditability and supplier enforcement. If two competent labs can produce different results from the same part because the instruction is incomplete, the requirement is not strong enough for commercial control. Quality systems need roughness criteria that are not just technically sound, but also contractually and procedurally robust.

Practical interpretation of surface roughness Ra/Rz benchmarks by application risk

For low-risk general machined components, roughness benchmarks can be used mainly as process classification and consistency tools. In these cases, Ra is often sufficient if the surface has no critical sealing, fatigue, optical, or contamination-control function. The main goal is to detect tool wear, process drift, or supplier inconsistency early.

For medium-risk functional surfaces, such as mating parts, moderate sliding contacts, and non-safety-critical sealing zones, benchmarks should be treated as starting points, not final requirements. Here, both Ra and Rz may be useful together, especially when one metric captures overall finish and the other screens for more severe topographic excursions. Direction of lay and measurement location become more important.

For high-risk applications, such as aerospace interfaces, medical-contact surfaces, semiconductor equipment components, high-purity flow paths, or pressure-retaining sealing faces, generic benchmark tables are rarely enough. Requirements should be validated against performance, process capability, and the exact measurement standard. Additional controls may include areal surface parameters, defect microscopy, waviness limits, edge-condition criteria, or functional testing.

Safety managers should pay particular attention when roughness influences cleanability, crack initiation, friction heating, particle generation, or leak integrity. In these environments, an oversimplified Ra/Rz benchmark may create a dangerous false sense of control. A specification should reflect the actual hazard pathway, not just a familiar metrology habit.

How to write better surface specifications and avoid false approvals

If your organization wants to reduce disputes and improve decision quality, start by revising how roughness appears on drawings, control plans, and supplier quality documents. Do not specify an isolated number unless it is enough to manage the real risk. A good requirement names the parameter, the limit, the standard, and the measurement conditions needed for reproducibility.

It is also wise to separate development benchmarks from release specifications. During early process selection, reference ranges for milling, grinding, lapping, polishing, or coating preparation can help teams estimate manufacturability and cost. But those ranges should not automatically become final acceptance criteria. Final specifications should be tied to verified product function and measured process capability.

Where supplier networks are involved, establish a common measurement protocol and correlation plan. Use the same instrument class where possible, align cut-off and filtering settings, define trace direction and location, and compare results on shared reference samples. This is often more valuable than arguing over whose roughness number is “correct.”
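
A correlation check on shared reference samples can be as simple as comparing paired readings. The sketch below assumes each lab measured the same samples in the same order; the function name, result keys, and readings are hypothetical.

```python
from statistics import mean, stdev


def lab_agreement(supplier_um, customer_um):
    """Summarise paired differences between two labs on shared samples."""
    if len(supplier_um) != len(customer_um):
        raise ValueError("both labs must measure the same set of samples")
    diffs = [s - c for s, c in zip(supplier_um, customer_um)]
    return {
        "bias_um": mean(diffs),                        # systematic offset
        "spread_um": stdev(diffs),                     # scatter of the differences
        "max_abs_diff_um": max(abs(d) for d in diffs),
    }


# Hypothetical Ra readings (µm) on five shared reference samples.
supplier = [0.60, 0.72, 0.81, 0.55, 0.90]
customer = [0.66, 0.79, 0.86, 0.62, 0.95]
agreement = lab_agreement(supplier, customer)
```

In this invented data set the supplier reads about 0.06 µm lower on every sample. A consistent offset like that points to a method difference (cut-off, trace direction, tip condition) rather than to part variation, and it is worth resolving before any limit is enforced.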

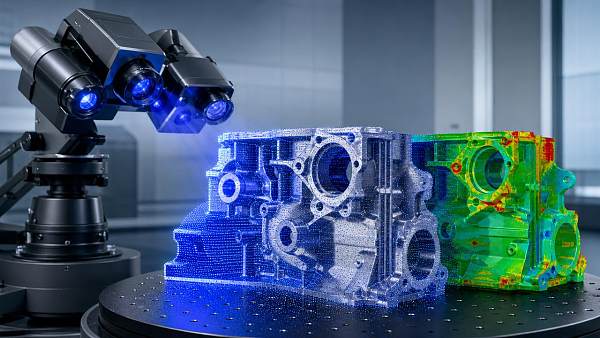

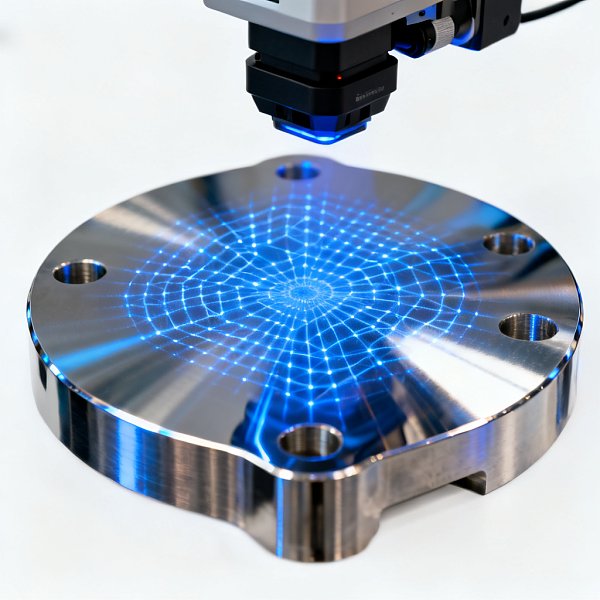

Quality leaders should also train teams to review roughness data as part of a broader surface assessment. If a profile trace shows abnormal spikes, waviness, or non-random texture, do not rely on the reported Ra alone. The trace itself may reveal risk that the summary metric hides. In many cases, a visualized profile is more informative than a single benchmark value.

Finally, build escalation logic into the inspection workflow. If roughness values are near the limit, if different labs disagree, or if the component serves a critical function, move beyond routine benchmark comparison. Require additional traces, alternate parameters, engineering review, or functional confirmation before approval. That approach prevents both false rejects and false accepts.
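
The escalation rule described above could be encoded along these lines. This is a minimal sketch: the 10 percent guard band, the function name, and the three outcome labels are assumptions for illustration, and a real workflow would define them in the control plan.

```python
def inspection_decision(measured_um, limit_um, labs_agree, critical_function,
                        guard_band=0.10):
    """Return 'accept', 'reject', or 'escalate' for an upper roughness limit.

    guard_band is a hypothetical fraction of the limit; readings within that
    distance of the limit are treated as too close to call from one number.
    """
    near_limit = abs(measured_um - limit_um) <= guard_band * limit_um
    if near_limit or not labs_agree or critical_function:
        return "escalate"  # extra traces, alternate parameters, or review
    return "accept" if measured_um <= limit_um else "reject"


clear_pass = inspection_decision(0.50, 0.8, labs_agree=True, critical_function=False)
borderline = inspection_decision(0.78, 0.8, labs_agree=True, critical_function=False)
clear_fail = inspection_decision(1.20, 0.8, labs_agree=True, critical_function=False)
critical = inspection_decision(0.50, 0.8, labs_agree=True, critical_function=True)
```

Only unambiguous, non-critical results are decided from the number alone. Borderline readings, lab disagreement, and critical surfaces all route to further review, which is what guards against both false accepts and false rejects.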

A decision framework for QC and safety teams

When reviewing surface roughness Ra/Rz benchmarks, quality and safety managers can use a simple sequence. First, define the function of the surface. Second, identify the failure mode to prevent. Third, confirm whether Ra, Rz, or another descriptor best reflects that risk. Fourth, standardize the measurement method in enough detail to make results reproducible. Fifth, validate that the requirement is process-capable and supplier-enforceable.

If any one of those steps is missing, the benchmark should be treated cautiously. A number without function is arbitrary. A number without method is unstable. A number without capability is operationally disruptive. A number without risk relevance is misleading even when perfectly measured.

This is why mature organizations treat roughness values as part of a decision system, not as isolated proof of quality. They understand that metrology must support action. The best benchmark is not the one that looks most precise on paper. It is the one that leads to better product performance, lower ambiguity, stronger compliance, and faster, more confident decisions across production and supply chains.

Conclusion: use Ra/Rz benchmarks as tools, not truths

Surface roughness Ra/Rz benchmarks remain valuable in manufacturing, but only when they are applied with context. For quality control and safety management, the biggest risk is not having a roughness number that is slightly wrong. It is believing that the number tells the whole story when it does not.

If you remember one principle, let it be this: roughness metrics are meaningful only when linked to function, measured consistently, and interpreted within the real operating risk of the part. When those conditions are met, Ra and Rz can be effective control tools. When they are not, the numbers can mislead teams into false approvals, unnecessary rejections, weak supplier control, and compliance exposure.

In practice, better outcomes come from asking better questions before accepting the benchmark. What does this surface need to do? Which topographic feature actually matters? How was the number produced? Can every qualified supplier and lab reproduce it? Those are the questions that turn roughness data into actionable quality intelligence.
