Metrology Software API Interoperability: Where Integration Projects Fail

Metrology software API interoperability often looks straightforward on paper, yet many integration projects fail when data models, legacy systems, and compliance demands collide. For enterprise decision-makers, these breakdowns can delay production, weaken traceability, and inflate total cost of ownership. This article examines where interoperability initiatives go wrong and what leaders should evaluate before scaling metrology integrations across complex industrial environments.

Why is metrology software API interoperability getting so much executive attention?

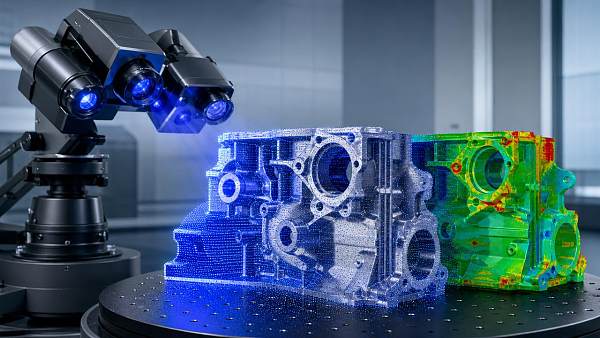

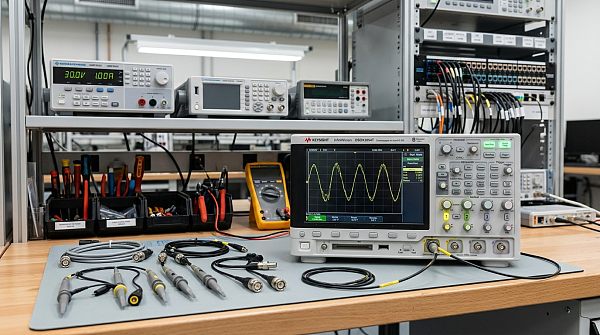

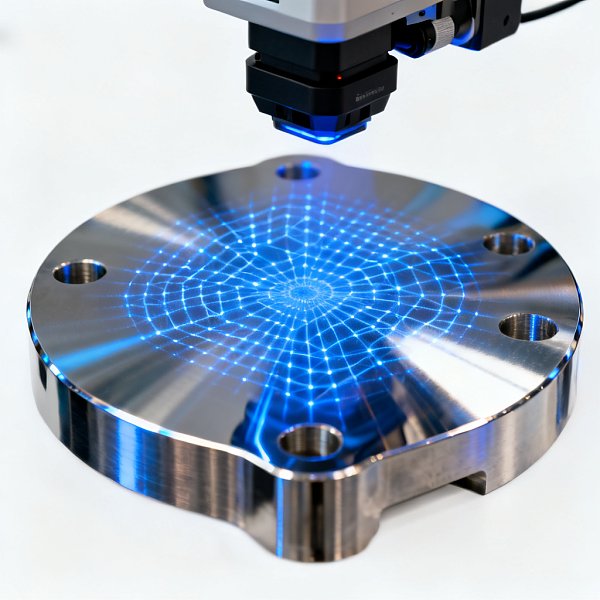

Metrology software API interoperability matters because measurement is no longer an isolated quality task. In advanced manufacturing, aerospace, electronics, automotive, medical devices, and precision engineering, inspection data must move quickly between coordinate measuring machines (CMMs), optical systems, MES platforms, SPC tools, PLM environments, ERP layers, and, increasingly, AI analytics stacks. When these systems cannot exchange information reliably, leaders lose the visibility needed to connect measurement results with production decisions.

For many enterprises, the promise sounds simple: connect devices and software through APIs, normalize data, and automate reporting. In reality, metrology software API interoperability is often undermined by mismatched schemas, proprietary vendor logic, undocumented workflows, and weak governance around calibration, versioning, and audit trails. The result is not just technical frustration. It can mean delayed first-article inspection, manual data re-entry, poor root-cause analysis, and rising quality costs across global plants.

This is why C-level and director-level stakeholders now treat interoperability as a business resilience issue. The question is no longer whether systems can exchange data at all, but whether they can do so with enough semantic consistency, performance, security, and traceability to support enterprise-scale operations.

What does metrology software API interoperability actually mean in an industrial setting?

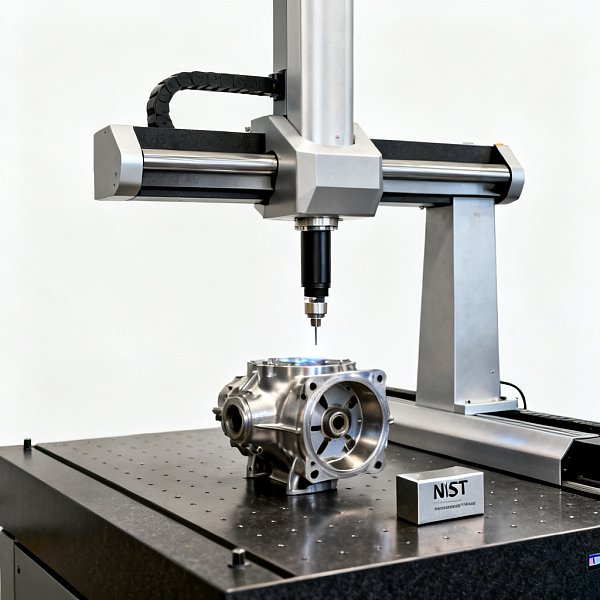

In practical terms, metrology software API interoperability means more than opening a connection between two platforms. It means that measurement plans, execution status, dimensional results, tolerances, pass/fail logic, metadata, user actions, timestamps, revision history, and equipment context can be exchanged in a way that preserves business meaning. If one system sends a result but the receiving system interprets its units, datum structure, uncertainty, or feature naming differently, integration exists technically but fails operationally.
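A minimal sketch of the unit problem, assuming a hypothetical `Result` record and a small conversion table (neither comes from any specific metrology product): the same numeric value passes or fails a tolerance check depending on whether the receiving system honors the unit that was sent.

```python
from dataclasses import dataclass

# Conversion factors to millimetres. The supported units are an assumption
# made for this illustration.
TO_MM = {"mm": 1.0, "in": 25.4, "um": 0.001}


@dataclass(frozen=True)
class Result:
    """Hypothetical measurement result that carries its unit explicitly."""
    feature: str
    value: float
    unit: str


def to_mm(result: Result) -> Result:
    """Return the result expressed in millimetres; reject unknown units."""
    if result.unit not in TO_MM:
        raise ValueError(f"unknown unit: {result.unit!r}")
    return Result(result.feature, result.value * TO_MM[result.unit], "mm")


def in_tolerance(result: Result, nominal_mm: float, tol_mm: float) -> bool:
    """Check a result against a symmetric tolerance after normalizing units."""
    return abs(to_mm(result).value - nominal_mm) <= tol_mm


# The sender reports 0.5 inches; the nominal is 12.7 mm.
sent = Result("D1", 0.5, "in")
# A receiver that ignores the unit would effectively see this instead.
misread = Result("D1", 0.5, "mm")
```

Here `in_tolerance(sent, 12.7, 0.01)` holds while `in_tolerance(misread, 12.7, 0.01)` does not, even though the transmitted number is identical. Rejecting unknown units outright, rather than guessing, is a deliberate choice in this sketch.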

Decision-makers should separate three layers of interoperability. The first is connectivity: can systems communicate? The second is data compatibility: can they map fields consistently? The third, and most important, is process interoperability: can the data support real workflows such as nonconformance escalation, CAPA initiation, supplier quality review, and digital thread traceability? Most failed projects overinvest in the first layer and underestimate the third.

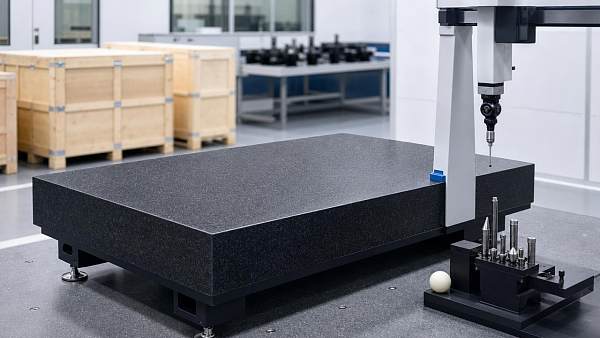

This distinction is especially important in organizations with mixed equipment generations. A legacy CMM package may export files successfully, yet still block enterprise automation because it lacks event-based APIs, modern authentication, or a stable object model for downstream use.

Where do integration projects fail most often?

The most common failure point is assuming that all measurement data is structurally similar. It is not. One platform may represent features, alignments, tolerances, and uncertainty in ways another cannot consume without custom transformation. Enterprises often discover too late that a vendor’s API exposes only summary outputs, while the business needs feature-level detail for statistical analysis and traceability.
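To illustrate why summary outputs can fall short, the sketch below computes a standard process capability index (Cpk) from feature-level values. The sample data, variable names, and specification limits are invented for illustration; the point is only that a pass/fail summary contains nothing the calculation could use.

```python
from statistics import mean, stdev


def cpk(values, lsl, usl):
    """Process capability index from individual measurements.

    Requires at least two values; uses the sample standard deviation.
    """
    mu = mean(values)
    sigma = stdev(values)
    if sigma == 0:
        raise ValueError("no variation in data; Cpk is undefined")
    return min(usl - mu, mu - lsl) / (3 * sigma)


# Hypothetical feature-level export: every measured diameter is available.
feature_level = [10.0, 10.1, 9.9, 10.0, 10.0]

# Hypothetical summary-only export: one verdict per part, no values.
summary_only = ["pass", "pass", "pass", "pass", "pass"]

# Capability can be computed from feature-level data only.
capability = cpk(feature_level, lsl=9.7, usl=10.3)
```

Both exports describe five passing parts, but only the first supports statistical analysis or trend detection.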

Another frequent breakdown appears in legacy coexistence. A new quality platform may support modern REST endpoints, while installed metrology software depends on file drops, middleware scripts, or limited SDKs. Teams then create fragile custom connectors that work in a pilot but collapse during scale-up, software upgrades, or multi-site rollouts.

Governance is the third major weak point. Without clear ownership of master data, naming conventions, revision control, unit handling, and validation procedures, each plant or engineering team creates local workarounds. That leads to inconsistent reports, duplicate logic, and disputes over which dataset is authoritative. In regulated or tightly audited sectors, weak governance can also expose the business to compliance risk.

Security and change management are also underestimated. APIs that move sensitive production and inspection data must align with enterprise identity controls, vendor support models, and cybersecurity policies. If the integration depends on one specialist or one undocumented script, the organization has not achieved interoperability; it has created concentration risk.

Which early warning signs suggest an interoperability project is likely to fail?

Executives should look for signals that the initiative is being framed as a software connection exercise rather than an operational architecture program. If meetings focus only on API calls, payloads, and vendor claims, while avoiding questions about process ownership and data semantics, the project may already be heading toward rework.

A second warning sign is when success criteria are vague. “Integrate metrology with quality systems” is not a measurable outcome. Better definitions include reduced manual data handling, faster inspection release, lower reporting latency, stronger lot traceability, or fewer data reconciliation events. Without these operational targets, teams can declare technical completion even though business value remains unrealized.

The third sign is overreliance on customization. Some customization is normal, but if interoperability depends on heavy script maintenance, per-machine exceptions, or undocumented field mappings, long-term sustainability suffers. Enterprise leaders should ask whether the design can survive version updates, supplier changes, network segmentation, and regional rollout differences.
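One way to keep field mappings documented is to hold them as versioned data rather than logic buried in scripts. The following is a minimal sketch under that assumption; the source field names (`FeatName`, `Actual`, `Nom`, `UoM`) and the canonical names are hypothetical.

```python
# Hypothetical mapping table: source field name -> canonical field name.
# Stored as data so it can be reviewed, versioned, and audited.
MAPPING_VERSION = "1.0"
FIELD_MAP = {
    "FeatName": "feature_id",
    "Actual": "measured_value",
    "Nom": "nominal_value",
    "UoM": "unit",
}


def apply_mapping(source: dict) -> dict:
    """Rename source fields to canonical names; fail loudly on unmapped fields."""
    unmapped = sorted(set(source) - set(FIELD_MAP))
    if unmapped:
        raise KeyError(f"unmapped source fields: {unmapped}")
    canonical = {FIELD_MAP[key]: value for key, value in source.items()}
    # Record which mapping version produced this record, for traceability.
    canonical["mapping_version"] = MAPPING_VERSION
    return canonical


record = apply_mapping(
    {"FeatName": "D1", "Actual": 10.02, "Nom": 10.0, "UoM": "mm"}
)
```

Raising an error on an unmapped field surfaces vendor changes after an upgrade instead of silently dropping data, which is the failure mode that per-machine scripts tend to hide.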

Common failure indicators at a glance

How should decision-makers evaluate vendors and platforms before committing?

A strong evaluation starts with use cases, not marketing claims. Ask vendors to demonstrate how their metrology software API interoperability supports a complete workflow: part program execution, result capture, tolerance interpretation, nonconformance routing, and data handoff into enterprise systems. A generic statement such as “open API available” is not enough. Leaders should request evidence of object granularity, event support, authentication methods, version policy, and backward compatibility.

It is also important to assess whether the supplier understands industrial standards and quality governance. In high-precision sectors, data integrity is inseparable from calibration discipline, timestamp accuracy, equipment identification, and auditability. The best vendors can explain how their interfaces align with ISO-driven quality processes, enterprise validation expectations, and long-term lifecycle support.

Enterprises should further evaluate ecosystem maturity. Does the vendor provide stable documentation, test sandboxes, implementation guidance, and a support team that can engage both IT and quality engineering? Can the platform coexist with optical metrology, tactile systems, vision inspection, and environmental sensing data? Interoperability should be judged as a cross-functional operating capability, not simply a feature checklist.

Key evaluation questions for enterprise buyers

Before procurement or expansion, put these questions to internal teams and to suppliers:

- Which measurement data elements must remain intact across systems, including units, uncertainty, revision, and device identity?

- Does the API support real-time events, batch exchange, or both, and which mode aligns with production risk?

- How are software upgrades managed without breaking existing integrations?

- What is the fallback process if a connector, endpoint, or middleware layer fails?

- Who owns data mapping rules and validation at the enterprise level?

- Can the architecture scale across regions, suppliers, and different metrology brands without rebuilding core logic?
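The first question in the list can be turned into an automated check. The sketch below assumes a hypothetical payload represented as a dictionary and hypothetical field names for the four elements mentioned (units, uncertainty, revision, device identity); real payloads will differ by vendor.

```python
# Hypothetical canonical names for the data elements that must survive
# every hop between systems.
REQUIRED_FIELDS = ("unit", "uncertainty", "revision", "device_id")


def missing_fields(payload: dict) -> list:
    """Return required fields that are absent or empty in a payload.

    A numeric zero (for example an uncertainty of 0.0) counts as present.
    """
    return [
        name for name in REQUIRED_FIELDS
        if payload.get(name) in (None, "")
    ]


complete = {"unit": "mm", "uncertainty": 0.002, "revision": "B", "device_id": "CMM-01"}
stripped = {"unit": "mm", "revision": ""}
```

Running such a check at each integration boundary, not only at the source, shows where in the chain context is being lost.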

What are the biggest misconceptions about metrology software API interoperability?

One misconception is that standard APIs automatically create standard outcomes. In reality, two systems may both expose APIs and still interpret the same characteristic differently. Another misconception is that middleware alone will solve the problem. Middleware can help orchestrate flows, but it cannot compensate for poor source data quality, undefined ownership, or incompatible business logic.

A third misconception is that interoperability is mainly an IT project. It is not. Quality, manufacturing engineering, validation, cybersecurity, procurement, and operations must all participate. If metrology specialists are absent, subtle but critical issues such as alignment definitions, feature naming, or uncertainty treatment may be missed. If IT governance is absent, the result may be technically clever but impossible to support securely at scale.

Another common error is underestimating the cost of semantic mapping. Budget owners often approve interface development but not the deeper work of harmonizing data dictionaries, process states, and exception handling. That hidden effort is often where projects either mature into reliable infrastructure or stall into permanent pilot mode.

How can enterprises reduce risk before scaling integration across multiple sites?

The safest path is staged standardization. Start by defining a canonical measurement data model for the enterprise, including critical attributes, naming rules, result status logic, and traceability requirements. Then validate one or two high-value workflows rather than trying to connect every machine and software package at once. This approach exposes semantic and governance issues early, when correction cost is still manageable.
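As a rough illustration of what a canonical measurement data model might contain, the sketch below defines one record type with traceability attributes and a single, shared definition of result status. All field names and the signed-deviation tolerance convention are assumptions for this example, not an industry schema.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    PASS = "pass"
    FAIL = "fail"


@dataclass(frozen=True)
class Measurement:
    """Hypothetical canonical record for one measured characteristic."""
    feature_id: str      # named according to enterprise naming rules
    part_revision: str   # traceability: drawing or model revision
    device_id: str       # traceability: which equipment measured it
    unit: str
    nominal: float
    lower_tol: float     # signed deviation from nominal, e.g. -0.05
    upper_tol: float     # signed deviation from nominal, e.g. +0.05
    measured: float
    measured_at: str     # ISO 8601 timestamp

    def status(self) -> Status:
        """Single definition of pass/fail used by every consumer."""
        deviation = self.measured - self.nominal
        ok = self.lower_tol <= deviation <= self.upper_tol
        return Status.PASS if ok else Status.FAIL


good = Measurement("D1", "B", "CMM-01", "mm", 10.0, -0.05, 0.05, 10.02,
                   "2024-01-01T00:00:00Z")
bad = Measurement("D1", "B", "CMM-01", "mm", 10.0, -0.05, 0.05, 10.2,
                  "2024-01-01T00:05:00Z")
```

Defining status logic once in the model, rather than in each downstream system, is one way to avoid disputes over which dataset is authoritative.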

It is equally important to establish a formal validation framework. For metrology software API interoperability, testing should cover not only connectivity but also payload accuracy, timing behavior, user permissions, data loss scenarios, and upgrade resilience. Enterprises operating under strict customer or regulatory oversight should document these tests as part of quality assurance and supplier qualification.
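A payload accuracy and data loss check can be as simple as reconciling what was sent against what arrived. The sketch below assumes both sides can be reduced to a dictionary of record ID to numeric value; the function name, record IDs, and tolerance are hypothetical.

```python
import math


def compare_batches(sent: dict, received: dict, abs_tol: float = 1e-9) -> dict:
    """Reconcile two batches keyed by record ID.

    Reports records that were lost in transit, records that appeared
    unexpectedly, and records whose value changed beyond abs_tol.
    """
    lost = sorted(set(sent) - set(received))
    unexpected = sorted(set(received) - set(sent))
    altered = sorted(
        key for key in set(sent) & set(received)
        if not math.isclose(sent[key], received[key], rel_tol=0.0, abs_tol=abs_tol)
    )
    return {"lost": lost, "unexpected": unexpected, "altered": altered}


sent = {"r1": 10.02, "r2": 5.0, "r3": 7.5}
received = {"r1": 10.02, "r3": 7.6, "r4": 1.0}
report = compare_batches(sent, received)
```

The same reconciliation can be rerun after every software upgrade, which turns upgrade resilience into a repeatable test rather than a one-time sign-off.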

Leaders should also insist on lifecycle planning. Who maintains mappings? How are endpoint changes approved? What service levels apply when a measurement data flow fails during production? How will the architecture absorb future technologies such as AI-driven inspection analytics, additional sensor classes, or new plants from acquisition activity? Interoperability should be designed as a durable capability aligned with the digital thread, not a one-time integration milestone.

What should executives confirm before moving into procurement, rollout, or partnership discussions?

Before approving next steps, enterprise decision-makers should confirm six fundamentals: the business workflow to be improved, the exact data objects required, the installed-base constraints, the security model, the validation approach, and the ownership model for long-term support. If any of these remain unclear, the organization risks buying tools that increase integration complexity rather than reducing it.

For organizations guided by technical benchmarking and precision-focused quality strategies, metrology software API interoperability should be assessed with the same rigor applied to measurement accuracy itself. The integration is part of the measurement system. If it distorts context, weakens traceability, or fragments decision logic, the enterprise does not gain digital maturity; it simply moves uncertainty downstream.

Before committing to a direction, implementation timeline, budget scope, or partnership model, start with practical questions: which systems must exchange data first, which compliance or customer requirements are non-negotiable, what level of granularity analytics teams need, how upgrades will be governed, and which KPI improvements justify the investment. The answers usually reveal whether a metrology integration initiative is truly ready to scale.
