Data Intelligence Protocols for Traceable Inspection Data

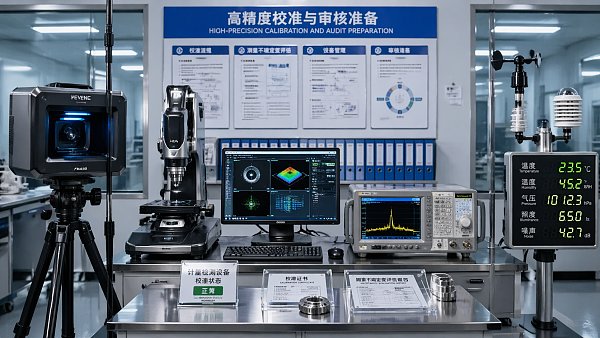

Data Intelligence Protocols are becoming the backbone of traceable inspection data in precision-driven industries. For enterprise decision-makers, they provide a reliable bridge between measurement systems, quality assurance, and compliance requirements, turning fragmented inspection outputs into auditable, actionable intelligence. This article explores how structured protocols strengthen traceability, reduce risk, and support smarter operational decisions across complex manufacturing environments.

For CTOs, Quality Directors, and procurement leaders, the issue is no longer whether inspection data exists. The real challenge is whether data from CMMs, optical sensors, vision systems, electrical test platforms, and environmental monitors can be trusted, linked, and reused across the full production lifecycle.

In high-value sectors such as semiconductor packaging, aerospace machining, electronics assembly, and advanced industrial manufacturing, a single missing timestamp, an unverified calibration status, or a broken device-to-batch record can delay shipment, trigger rework, or weaken compliance readiness within 24 to 72 hours.

That is why Data Intelligence Protocols matter. They define how inspection results are structured, validated, transmitted, stored, and interpreted so that measurement activity becomes business-grade evidence rather than isolated machine output.

Why Traceable Inspection Data Has Become a Board-Level Concern

Traceability was once treated as a quality department requirement. Today, it affects margin protection, supplier governance, regulatory defense, and customer retention. In complex manufacturing chains with 3 to 5 inspection stages per part, undocumented measurement lineage creates operational blind spots that executives cannot afford.

From measurement events to decision-ready records

A traceable inspection record should include at least 6 core elements: part or batch identifier, equipment identifier, calibration status, operator or automated execution source, timestamp, and result context. Without these fields, pass/fail outcomes are difficult to audit and even harder to compare over time.
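As a minimal sketch of what such a record could look like in practice, the Python example below models the six core elements as a data class and reports which ones are absent. The class name, field names, and sample values are illustrative assumptions, not part of any specific protocol standard.

```python
from dataclasses import dataclass, fields
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class InspectionRecord:
    """Hypothetical record carrying the six core traceability elements."""
    part_or_batch_id: Optional[str]
    equipment_id: Optional[str]
    calibration_status: Optional[str]  # assumed values: "valid" or "expired"
    execution_source: Optional[str]    # operator ID or automated program name
    timestamp: Optional[datetime]
    result_context: Optional[dict]     # e.g. recipe version, product revision


def missing_elements(record: InspectionRecord) -> list[str]:
    """Return the names of core elements that are None or empty."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


# Sample record (invented values) where the result context was never captured.
record = InspectionRecord(
    part_or_batch_id="LOT-0001",
    equipment_id="VISION-07",
    calibration_status="valid",
    execution_source="auto:recipe-runner",
    timestamp=datetime(2024, 1, 15, 8, 30, tzinfo=timezone.utc),
    result_context=None,
)
print(missing_elements(record))  # ['result_context']
```

A check like this makes the gap visible at the moment of capture rather than during a later audit.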

For example, a non-contact vision system may detect dimensional drift of ±12 µm, but if the lighting profile, recipe version, and product revision are not tied to the result, corrective action may target the wrong cause. The cost is not just technical confusion; it is delayed containment and inefficient root-cause analysis.

The business risks of fragmented inspection architecture

In many enterprises, inspection data still sits across 4 separate layers: instrument software, local workstation exports, MES or QMS uploads, and archived reports. When these layers are disconnected, version conflicts, manual transcription errors, and missing metadata become common.

- Manual file consolidation can add 15 to 30 minutes per production lot.

- Unmapped naming conventions often create duplicate records across departments.

- Calibration evidence may be stored outside the inspection result itself.

- Cross-site benchmarking becomes unreliable when units, tolerances, or sampling frequencies differ.

These issues matter at executive level because they directly influence scrap exposure, warranty investigation speed, and readiness for customer or third-party audits aligned with ISO/IEC 17025, IEEE methods, or NIST-traceable practices.

Typical failures that Data Intelligence Protocols are designed to prevent

The table below outlines common weaknesses seen in multi-instrument inspection environments and how structured protocol design reduces them.

The key lesson is simple: traceability is not created by storing more files. It is created by controlling context. Data Intelligence Protocols bring that context into a repeatable, machine-readable structure that supports both local quality action and enterprise-level governance.

What Data Intelligence Protocols Should Include in Industrial Inspection Environments

Not every protocol stack needs the same depth, but high-value inspection environments typically require 5 functional layers. These layers turn raw data into traceable intelligence that can move across metrology, operations, engineering, supplier quality, and compliance teams.

1. Data capture standardization

The first layer defines what is captured from each instrument. In mixed fleets that include CMMs, 3D scanners, optical profilers, electrical analyzers, and gas or particle sensors, field normalization is essential. Units, tolerance notation, pass criteria, and timestamp resolution should remain consistent across all devices.

Minimum recommended capture fields

- Part ID, lot ID, and revision level

- Instrument type and unique equipment identifier

- Method version or inspection recipe number

- Measured values, tolerance limits, and decision status

- Execution time, operator source, and calibration reference

- Ambient condition data where relevant, such as 20°C ±2°C or 40% to 60% RH

This may appear basic, yet many organizations still allow 2 or 3 different export formats for the same process family. That inconsistency undermines automated analysis and slows enterprise reporting.
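The normalization step described above can be sketched as follows. This is a simplified illustration, assuming length measurements are stored in micrometres and timestamps in UTC with millisecond resolution; the conversion table and function names are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Assumed conversion factors to micrometres; extend for other units as needed.
TO_MICROMETRES = {"um": 1.0, "mm": 1000.0, "in": 25400.0}


def normalize_measurement(value: float, unit: str) -> float:
    """Convert a length measurement to micrometres, rejecting unmapped units."""
    key = unit.strip().lower()
    if key not in TO_MICROMETRES:
        raise ValueError(f"unmapped unit: {unit!r}")
    return value * TO_MICROMETRES[key]


def normalize_timestamp(ts: datetime) -> str:
    """Return an ISO 8601 UTC string; timestamps without a timezone are rejected."""
    if ts.tzinfo is None:
        raise ValueError("timestamp has no timezone information")
    return ts.astimezone(timezone.utc).isoformat(timespec="milliseconds")


# A device reporting in millimetres and local time (UTC+1), invented for illustration.
local = datetime(2024, 1, 15, 9, 30, tzinfo=timezone(timedelta(hours=1)))
print(normalize_measurement(1.5, "mm"))  # 1500.0
print(normalize_timestamp(local))        # 2024-01-15T08:30:00.000+00:00
```

Rejecting unmapped units and naive timestamps, instead of guessing, keeps ambiguous values out of cross-device comparisons.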

2. Validation and integrity controls

Once data is captured, protocols must verify whether the result is trustworthy. Validation rules should check field completeness, calibration validity, method conformity, and tolerance logic before records enter the quality database or downstream analytics environment.

A practical rule is to block or quarantine a record if any of 4 conditions exists: expired calibration, missing part revision, invalid unit mapping, or unsynchronized timestamp. This simple gate can prevent entire batches of unusable records from entering trend analysis.
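The four-condition gate can be sketched as a single function that returns every reason for quarantine. The record keys, the unit whitelist, and the `clock_synced` flag are assumptions made for illustration; a real system would derive them from its own calibration and time-synchronization sources. A missing calibration date is treated here as expired.

```python
from datetime import date

ALLOWED_UNITS = {"um", "mm"}  # assumed whitelist of mapped units


def quarantine_reasons(record: dict, today: date) -> list[str]:
    """Return every reason a record should be held back; empty means release."""
    reasons = []
    due = record.get("calibration_due")
    if due is None or due < today:
        reasons.append("expired calibration")
    if not record.get("part_revision"):
        reasons.append("missing part revision")
    if record.get("unit") not in ALLOWED_UNITS:
        reasons.append("invalid unit mapping")
    if not record.get("clock_synced", False):
        reasons.append("unsynchronized timestamp")
    return reasons


# Invented sample: calibration lapsed two weeks ago and the revision is blank.
record = {
    "calibration_due": date(2024, 1, 1),
    "part_revision": "",
    "unit": "mm",
    "clock_synced": True,
}
print(quarantine_reasons(record, today=date(2024, 1, 15)))
# ['expired calibration', 'missing part revision']
```

Returning all reasons, not just the first, gives the quality team a complete picture of what must be fixed before the record is released.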

3. Traceability linkage across systems

A protocol is only valuable if records can move across enterprise systems without losing meaning. Integration points usually include MES, QMS, ERP, PLM, and laboratory or metrology software. The goal is not full platform replacement but controlled interoperability.

For many manufacturers, the highest return comes from linking 3 relationships: device-to-result, result-to-batch, and batch-to-corrective action. Those connections allow a defect found in final audit to be traced back to the exact station, recipe, and environmental condition that produced it.
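To illustrate the three relationships, the sketch below walks from a corrective action back to the devices and recipes behind the affected batch. The in-memory dictionaries and all identifiers are hypothetical stand-ins for keys that would normally live in MES or QMS tables.

```python
# Hypothetical link tables: each result carries device-to-result and
# result-to-batch keys; each corrective action carries a batch key.
results = {
    "R-100": {"device_id": "CMM-02", "recipe": "REC-7", "batch_id": "B-55"},
    "R-101": {"device_id": "VIS-07", "recipe": "REC-9", "batch_id": "B-55"},
    "R-102": {"device_id": "CMM-02", "recipe": "REC-7", "batch_id": "B-56"},
}
corrective_actions = {"CA-9": {"batch_id": "B-55"}}


def trace_corrective_action(action_id: str) -> list[dict]:
    """Follow action -> batch -> results and return the linked result records."""
    batch_id = corrective_actions[action_id]["batch_id"]
    return [
        {"result_id": result_id, **result}
        for result_id, result in sorted(results.items())
        if result["batch_id"] == batch_id
    ]


for row in trace_corrective_action("CA-9"):
    print(row["result_id"], row["device_id"], row["recipe"])
# R-100 CMM-02 REC-7
# R-101 VIS-07 REC-9
```

The same lookup only works if every result carries both keys at capture time, which is why linkage belongs in the protocol and not in after-the-fact reporting.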

4. Retention, auditability, and access governance

Traceable inspection data must remain accessible for the required retention period. Depending on industry and contract terms, this may range from 2 years to more than 10 years. Protocols should define who can view, edit, approve, export, or archive records.

Strong governance does not mean excessive complexity. It means maintaining clear permissions, version histories, and immutable audit logs for all critical quality records. This is especially important when supplier data, outsourced inspection, or cross-border manufacturing sites are involved.
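One common way to make an audit log tamper-evident is hash chaining, where each entry's hash covers the previous entry's hash. The sketch below uses that technique as an illustration; it is an assumption about how immutability could be approximated, not a method prescribed by this article, and the entry fields are invented.

```python
import hashlib
import json


def _digest(entry: dict, prev_hash: str) -> str:
    """Hash the entry together with the previous hash to chain the log."""
    payload = json.dumps(entry, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list[dict], entry: dict) -> None:
    """Append an entry whose hash depends on everything before it."""
    prev_hash = log[-1]["hash"] if log else ""
    log.append({"entry": entry, "hash": _digest(entry, prev_hash)})


def verify_log(log: list[dict]) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev_hash = ""
    for item in log:
        if item["hash"] != _digest(item["entry"], prev_hash):
            return False
        prev_hash = item["hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, {"user": "qa.lead", "action": "approve", "record": "R-100"})
append_entry(audit_log, {"user": "eng.01", "action": "export", "record": "R-100"})
print(verify_log(audit_log))  # True

audit_log[0]["entry"]["action"] = "edit"  # simulate tampering
print(verify_log(audit_log))  # False
```

Hash chaining detects changes but does not prevent them, so it would normally be combined with access controls and write-once storage.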

5. Analytical readiness for continuous improvement

The final layer ensures data is usable for trend analysis, process capability review, predictive maintenance, and procurement benchmarking. If inspection outputs cannot be aggregated by machine family, supplier, shift, or material lot, they have limited strategic value.

Data Intelligence Protocols should therefore support structured metadata, not just result storage. This enables practical use cases such as 30-day drift monitoring, first-pass yield correlation, or comparison between two sensor technologies under the same tolerance window.
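As an example of why structured metadata matters, the sketch below computes mean deviation from nominal per group over a trailing 30-day window. The record keys, group names, and values (in micrometres) are invented for illustration, and mean deviation is only one simple drift indicator among many.

```python
from datetime import date, timedelta
from statistics import mean


def drift_by_group(records, group_key, as_of, window_days=30):
    """Mean deviation from nominal per group over the trailing window."""
    start = as_of - timedelta(days=window_days)
    grouped = {}
    for r in records:
        if start < r["date"] <= as_of:
            deviation = r["measured"] - r["nominal"]
            grouped.setdefault(r[group_key], []).append(deviation)
    return {group: mean(devs) for group, devs in grouped.items()}


# Invented sample data; values are integers in micrometres.
records = [
    {"family": "CMM", "date": date(2024, 1, 10), "measured": 10002, "nominal": 10000},
    {"family": "CMM", "date": date(2024, 1, 20), "measured": 10004, "nominal": 10000},
    {"family": "VISION", "date": date(2024, 1, 25), "measured": 9999, "nominal": 10000},
    {"family": "CMM", "date": date(2023, 11, 1), "measured": 10050, "nominal": 10000},
]
print(drift_by_group(records, "family", as_of=date(2024, 1, 31)))
# {'CMM': 3, 'VISION': -1}
```

Changing `group_key` to a supplier, shift, or material-lot field gives the other aggregations mentioned above, provided those fields were captured with each result.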

How Enterprise Buyers Should Evaluate Protocol-Driven Inspection Solutions

For decision-makers evaluating new metrology, sensing, or inspection platforms, protocol maturity should be treated as a purchasing criterion, not an afterthought. Hardware performance matters, but data architecture determines whether that performance becomes scalable business value.

Four questions procurement teams should ask suppliers

- Can the system export structured records with complete metadata rather than flat PDF-only reports?

- Does it support integration with at least 2 enterprise systems already used in the plant?

- How are calibration links, audit trails, and access controls maintained over time?

- What is the implementation effort in weeks, interfaces, and validation steps?

These questions help separate high-performance instruments from high-value solutions. A device with excellent resolution but poor data interoperability may raise long-term operational cost more than a slightly less advanced unit with stronger protocol design.

Evaluation criteria that influence long-term ROI

The table below summarizes common decision factors used by enterprises when comparing protocol-enabled inspection platforms or data-layer upgrades.

If a supplier cannot explain these areas in operational terms, the organization may end up buying isolated capability rather than a traceable data solution. For global plants, that difference becomes visible within the first 6 to 12 months of scaling.

Common buying mistakes

Prioritizing device speed over data usability

Fast capture rates are valuable, but not if results require manual cleansing before review. In many environments, 5 extra seconds of structured metadata capture saves hours of reconciliation every month.

Assuming compliance can be added later

Retrofitting audit trails and traceability links after deployment is usually more expensive than specifying them at purchase stage. The effort often involves software changes, process retraining, and record migration.

Underestimating cross-functional ownership

Inspection data affects quality, manufacturing, engineering, IT, and supplier management. If only one department owns the protocol decision, integration gaps are likely to remain hidden until production scale increases.

Implementation Roadmap for Protocol-Based Traceability

A practical deployment does not require a full digital overhaul. Most enterprises can improve traceable inspection data through a phased model that starts with high-risk nodes and expands to adjacent processes once governance is stable.

Phase 1: Map current inspection flows

Start by documenting where data is generated, transformed, approved, and stored. In a 2 to 3 week discovery stage, many teams identify at least 5 hidden dependencies, such as local spreadsheets, operator naming habits, or disconnected environmental logs.

Phase 2: Define a minimum protocol schema

Do not attempt to standardize every field at once. Focus first on records that influence release decisions, customer complaints, or critical process capability. A minimum schema often includes 10 to 15 mandatory fields and 5 optional contextual fields.

Phase 3: Pilot on one instrument family or line

A pilot lasting 4 to 8 weeks is usually sufficient to test timestamp quality, calibration mapping, and batch linkage. Good pilot candidates include final dimensional inspection, in-line optical verification, or electrical test where traceability gaps are easy to quantify.

Phase 4: Integrate with enterprise systems

After pilot validation, extend the protocol to QMS, MES, or data lake environments. Define exception handling rules, approval workflows, and retention settings before broad rollout. This reduces future conflict between operational speed and governance control.

Phase 5: Review performance every 30 to 90 days

Protocol performance should be reviewed like any other operational system. Useful indicators include record completeness rate, traceability gap count, time to root-cause closure, and percentage of inspections linked to valid calibration status.
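Two of these indicators can be computed directly from stored records, as in the simplified sketch below. The required-field list and the `"valid"` status value are assumptions; each organization would substitute its own minimum schema.

```python
# Assumed mandatory fields for a record to count as complete.
REQUIRED_FIELDS = ("part_id", "equipment_id", "timestamp", "calibration_status")


def completeness_rate(records: list[dict]) -> float:
    """Share of records in which every required field is present and non-empty."""
    if not records:
        return 0.0
    complete = sum(all(r.get(f) for f in REQUIRED_FIELDS) for r in records)
    return complete / len(records)


def valid_calibration_rate(records: list[dict]) -> float:
    """Share of records linked to a calibration status of 'valid'."""
    if not records:
        return 0.0
    valid = sum(r.get("calibration_status") == "valid" for r in records)
    return valid / len(records)


# Invented review sample of four inspection records.
sample = [
    {"part_id": "P1", "equipment_id": "CMM-02", "timestamp": "2024-01-15T08:30:00Z", "calibration_status": "valid"},
    {"part_id": "P2", "equipment_id": "CMM-02", "timestamp": "2024-01-15T08:35:00Z", "calibration_status": "expired"},
    {"part_id": "P3", "equipment_id": "VIS-07", "timestamp": "2024-01-15T08:40:00Z", "calibration_status": "valid"},
    {"part_id": "P4", "equipment_id": "", "timestamp": "2024-01-15T08:45:00Z", "calibration_status": None},
]
print(completeness_rate(sample))       # 0.75
print(valid_calibration_rate(sample))  # 0.5
```

Tracking these rates at each 30 to 90 day review shows whether protocol controls are holding as more instruments and sites are added.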

Where G-IMS Adds Strategic Value

For enterprise leaders navigating advanced metrology, optical sensing, electrical measurement, machine vision, and environmental monitoring, the technical question is rarely limited to hardware selection. The more strategic question is how to benchmark systems against the data intelligence requirements needed to move toward zero-defect manufacturing.

G-IMS supports this decision process by connecting performance benchmarking with protocol-level evaluation. That matters when comparing equipment across multiple plants, qualifying suppliers, or building a business case for inspection modernization under strict quality and compliance expectations.

In environments where sub-micron measurement, high-frequency electrical verification, or contamination-sensitive monitoring drives product acceptance, Data Intelligence Protocols are not just digital infrastructure. They are a control layer that protects the credibility of every measurement-driven decision.

Final Considerations for Decision-Makers

Traceable inspection data is built through discipline in structure, validation, linkage, and governance. Enterprises that treat Data Intelligence Protocols as a core capability gain faster audit readiness, more reliable root-cause analysis, and stronger alignment between instrumentation investment and business outcomes.

For organizations evaluating new inspection architectures or upgrading legacy measurement workflows, the most effective next step is to assess where data context is currently lost and which protocol controls can deliver measurable impact within the next 1 to 2 quarters.

If your team is planning a metrology, vision inspection, electrical test, or sensor-data traceability initiative, now is the right time to obtain a tailored evaluation framework. Contact us to discuss your requirements, compare solution paths, and get a customized approach for traceable inspection data across complex industrial environments.
