NIST Standards for Calibration and Audit Readiness

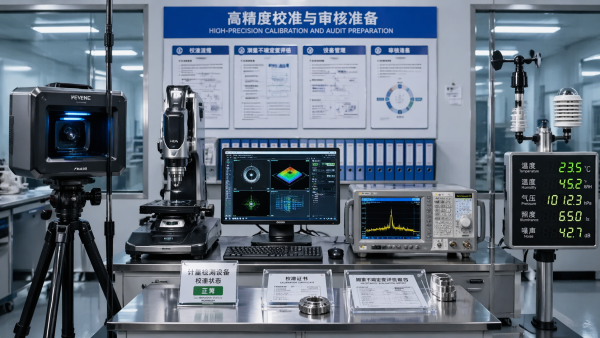

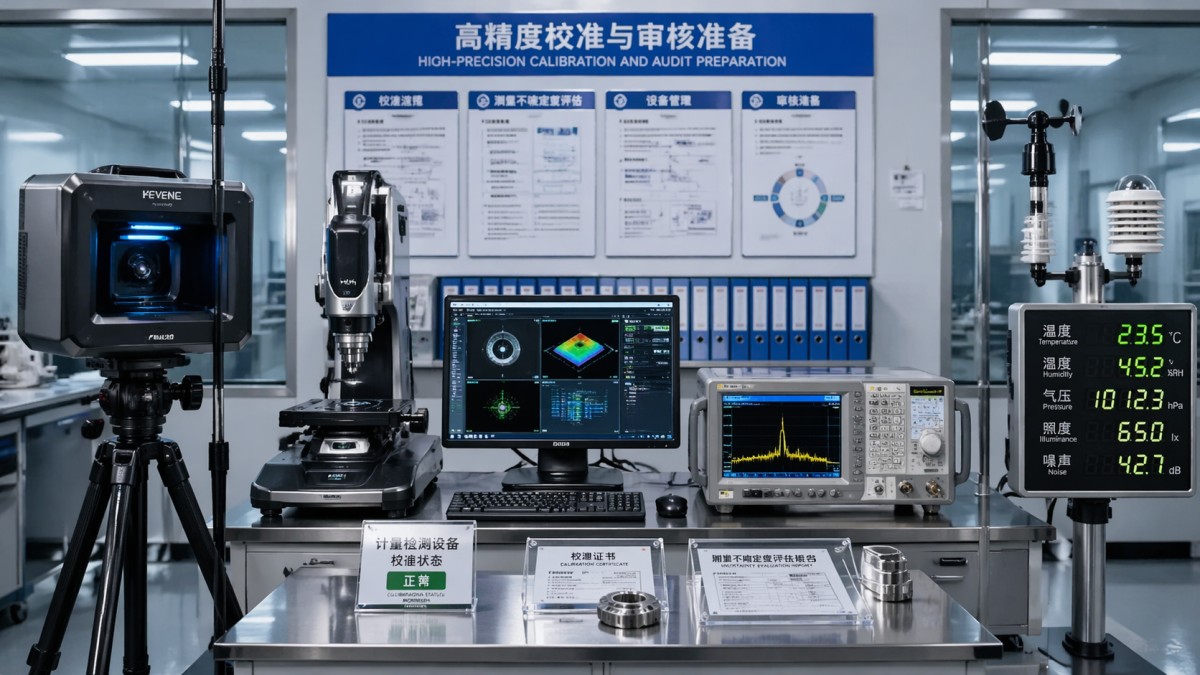

Audit-ready calibration starts with aligning advanced metrology and electrical test equipment to NIST-traceable calibration standards. For teams using 3D scanning for quality control, automated vision inspection systems, environmental monitoring sensors, and spectrum analyzers for RF testing, this guide explains how traceability, documentation, and performance verification strengthen compliance, reduce risk, and support confident operational decisions.

Why NIST traceability matters before an audit begins

In multi-industry operations, audit pressure rarely comes from one instrument alone. It usually comes from the chain of evidence behind measurements. When a plant relies on CMMs, 3D scanning systems, spectrum analyzers, optical sensors, and environmental monitoring devices, the main question is not whether the tools work today. The real question is whether every reported value can be traced back through a documented calibration path to recognized reference standards, such as those maintained by NIST.

For information researchers and equipment operators, NIST traceability reduces uncertainty in two practical ways. First, it supports comparability across sites, suppliers, and production lines. Second, it creates a defensible record for internal quality reviews, customer audits, and supplier assessments. In many facilities, calibration intervals are commonly set at 6 months or 12 months, but the interval alone does not prove readiness. The completeness of records, environmental controls, and measurement uncertainty review are what auditors check next.

This is where G-IMS adds value. Its benchmarking approach connects hardware performance with the documentation logic needed for regulated manufacturing and advanced R&D. That matters in environments where sub-micron dimensional control, RF signal accuracy, or long-duration environmental logging can influence yield, compliance exposure, and procurement approval. Instead of looking at instruments as isolated assets, G-IMS evaluates them against standards-based decision criteria that quality teams can actually use.

Teams that prepare early usually face fewer audit disruptions. A practical readiness window is often 2–4 weeks before a formal audit or customer visit. During that period, operators should confirm calibration certificates, review out-of-tolerance history, verify software revision records, and ensure that reference artifacts or test accessories are controlled. If even one of these elements is missing, audit findings can expand from a single device to the wider quality system.

What auditors typically expect to see

A strong calibration file is not only a certificate PDF. It is a package of evidence showing that the instrument, method, environment, and user controls are all aligned. In cross-functional reviews, procurement may focus on vendor credentials, operators may focus on usability, and quality managers may focus on compliance language. Audit readiness requires these views to meet in one consistent record set.

- A calibration certificate with traceability to recognized reference standards, date of service, measurement results, and stated uncertainty where applicable.

- Instrument identification controls, including serial number, asset tag, location, software or firmware revision, and current calibration status label.

- Environmental and use records showing whether the device was operated within typical limits such as 20°C–23°C for dimensional labs or specified RF warm-up periods.

- Corrective action history for out-of-tolerance events, plus evidence of impact assessment on prior measurements or released product.
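The evidence package described above can be sketched as a simple record with a gap check. This is a minimal illustration, not a prescribed schema: the `CalibrationFile` class, its field names, and the `find_gaps` function are all hypothetical, and a real quality system would likely hold more detail (for example, uncertainty only where applicable).

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class CalibrationFile:
    """Hypothetical evidence package for one instrument (field names are illustrative)."""
    asset_tag: str
    instrument_serial: str
    certificate_serial: str              # serial number printed on the certificate
    service_date: Optional[date]
    has_traceability_statement: bool
    has_stated_uncertainty: bool         # assumed applicable for this instrument
    firmware_revision: Optional[str]
    status_label_current: bool
    environment_log_present: bool
    oot_events_without_impact_review: int = 0


def find_gaps(record: CalibrationFile) -> List[str]:
    """Return human-readable gaps; an empty list means nothing was flagged."""
    gaps = []
    if record.service_date is None:
        gaps.append("certificate has no date of service")
    if not record.has_traceability_statement:
        gaps.append("no traceability statement")
    if not record.has_stated_uncertainty:
        gaps.append("no stated uncertainty")
    if record.instrument_serial != record.certificate_serial:
        gaps.append("serial number mismatch between instrument and certificate")
    if not record.firmware_revision:
        gaps.append("software or firmware revision not recorded")
    if not record.status_label_current:
        gaps.append("calibration status label not current")
    if not record.environment_log_present:
        gaps.append("no environmental or use records")
    if record.oot_events_without_impact_review > 0:
        gaps.append("out-of-tolerance event without impact assessment")
    return gaps
```

An empty result only means that none of these particular checks fired; it does not replace a reviewer reading the certificate itself.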

Which instruments create the highest calibration and audit risk?

Not all assets carry the same audit weight. In general, the highest-risk devices are those tied directly to release decisions, regulatory reporting, supplier acceptance, or tight tolerance manufacturing. A handheld environmental meter used for screening may require simpler controls than a high-frequency measurement platform used in qualification testing. The challenge for many organizations is that calibration budgets are often allocated by asset count, while audit risk is driven by application criticality.

For operators, risk increases when instruments combine complex software, multiple accessories, and sensitive environmental dependence. A vision inspection system may pass a quick functional check yet still drift in lighting geometry, lens calibration, or stage repeatability. A spectrum analyzer may power on normally while showing degraded amplitude accuracy if connectors, cables, or reference routines are not controlled. In each case, calibration traceable to NIST standards helps establish a consistent baseline for verification.

The table below summarizes common instrument categories in multi-industry settings and highlights what procurement and operations teams should review before audit season or major customer qualification activity. It is designed for practical screening, not as a substitute for formal metrology planning.

The key takeaway is that audit risk should be ranked by measurement consequence, not only by instrument price. A mid-range sensor tied to release criteria can create more compliance exposure than a premium analyzer used only for engineering exploration. This is why G-IMS benchmarking across five industrial pillars is useful: it helps teams compare calibration needs in context, across metrology, photonic sensing, electrical test, machine vision, and environmental monitoring.

A practical 4-step risk screening method

When time is limited, teams can use a simple four-step screen to decide which assets need immediate action. This is especially useful in sites with 50, 100, or more controlled instruments and a small quality staff.

- Identify whether the instrument supports release, compliance reporting, customer qualification, or failure analysis.

- Check whether the latest calibration is traceable, complete, and aligned with the current configuration and accessories.

- Review environmental sensitivity, operator dependency, and any recent movement, repair, or firmware update in the last 30–90 days.

- Prioritize assets with high product impact and weak documentation for corrective action before the audit window opens.
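The four steps above could be expressed as a small screening function. This is a sketch under stated assumptions: the `Asset` fields, the priority labels, and the way the steps are combined are illustrative choices, not a G-IMS method. The 90-day default simply takes the upper end of the 30–90 day window mentioned in step three.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Asset:
    """Hypothetical screening inputs for one controlled instrument."""
    name: str
    supports_critical_decision: bool        # step 1: release, compliance, qualification, failure analysis
    calibration_complete: bool              # step 2: traceable, complete, matches current configuration
    environmentally_sensitive: bool         # step 3: sensitivity or operator dependency
    days_since_last_change: Optional[int]   # step 3: move, repair, or firmware update; None if none


def screen(asset: Asset, change_window_days: int = 90) -> str:
    """Return an illustrative priority label (step 4)."""
    recently_changed = (
        asset.days_since_last_change is not None
        and asset.days_since_last_change <= change_window_days
    )
    weak_documentation = not asset.calibration_complete
    elevated = asset.environmentally_sensitive or recently_changed

    if asset.supports_critical_decision and weak_documentation:
        return "act now"
    if asset.supports_critical_decision and elevated:
        return "verify before audit"
    if weak_documentation:
        return "schedule"
    return "routine"


# Example: a release-critical scanner with an incomplete certificate.
print(screen(Asset("3D scanner", True, False, True, 10)))
```

Sorting a register by these labels gives a first-pass work list; the labels and thresholds should be tuned to the site's own risk policy.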

How to choose a calibration approach that supports procurement and operations

Choosing a calibration strategy is often treated as a service transaction, but for B2B buyers it is really a lifecycle decision. The wrong approach can increase downtime, duplicate shipping risk, or create gaps between certificate language and actual use conditions. A better approach connects procurement criteria, operating reality, and audit documentation from the start. This matters most when teams are buying new equipment, consolidating vendors, or standardizing methods across multiple production locations.

In practice, buyers usually compare three models: manufacturer calibration, accredited third-party calibration, and mixed-model programs that combine external service with internal verification. No single model fits every asset. High-frequency measurement equipment may require service capabilities tied to specific frequency ranges and accessories. 3D scanning systems may need artifact-based checks after installation or relocation. Environmental sensors may be better managed through a combination of rotating spares and scheduled field verification.

The table below helps map procurement choices to operational impact. It is especially useful when a purchasing team needs to compare lead time, technical coverage, and documentation quality without overfocusing on price alone. In many sectors, typical external turnaround is 7–15 business days, while onsite or priority service may reduce downtime but increase service cost and coordination effort.

For procurement teams, the decision should include at least 5 checkpoints: traceability scope, stated uncertainty, turnaround time, accessory coverage, and impact on production scheduling. For operators, another 3 checkpoints matter just as much: setup repeatability, daily functional checks, and clarity of pass-fail limits. G-IMS supports this cross-functional view by linking instrument performance benchmarking to decision-ready calibration and compliance criteria rather than treating service as an isolated afterthought.

Questions buyers should ask before issuing a PO

Scope and documentation

Ask whether the calibration covers the instrument in the exact configuration used on site, including probes, antennas, cables, lenses, fixtures, software options, and environmental compensation settings. A certificate that omits critical accessories may satisfy a basic filing requirement while failing an actual audit review.

Downtime and logistics

Confirm the standard lead time, rush options, shipping conditions, and what happens if an instrument is found out of tolerance. In operations with continuous production, even 5–7 lost days can be significant. Rotating spare instruments or staged service windows may be necessary for critical assets.

Field conditions and usage profile

Ask how the provider accounts for site reality. A lab-calibrated sensor used in a harsh environment may require shorter intervals or additional field verification. The best decision is not always the cheapest certificate. It is the one that aligns with risk, usage frequency, and audit exposure.

Implementation: documentation, verification, and control points that prevent findings

Audit readiness depends less on one-time calibration and more on control discipline between calibration events. Many findings occur because records are incomplete, labels are outdated, accessories are swapped without documentation, or operators do not know which daily checks are mandatory. A sound implementation plan should define who owns the instrument, who verifies it, how records are stored, and what action is required after repair, relocation, or failed checks.

In a practical program, there are usually 3 layers of control. The first is formal calibration performed at a defined interval. The second is routine verification, often daily, weekly, or before critical use. The third is event-based review after any major change. This layered approach is especially important for dimensional metrology and RF test, where drift, setup differences, and environmental conditions can affect confidence long before the next annual service date.
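One way to picture the three layers is as a gate that an instrument must pass before critical use. The sketch below assumes a hypothetical `cleared_for_use` function with illustrative parameter names; the order of the checks and the wording of the reasons are assumptions, not a required procedure.

```python
from datetime import date
from typing import Optional, Tuple


def cleared_for_use(
    today: date,
    calibration_due: date,
    last_verification: Optional[date],
    verification_period_days: int,
    event_review_pending: bool,
) -> Tuple[bool, str]:
    """Check the three control layers in order and report the first one that fails."""
    # Layer 1: formal calibration at a defined interval.
    if today > calibration_due:
        return False, "formal calibration overdue"
    # Layer 2: routine verification (daily, weekly, or before critical use).
    if last_verification is None:
        return False, "no routine verification on record"
    if (today - last_verification).days > verification_period_days:
        return False, "routine verification overdue"
    # Layer 3: event-based review after repair, relocation, or another major change.
    if event_review_pending:
        return False, "event-based review pending"
    return True, "cleared"


# Example: calibration is current, but the weekly check was last done 10 days ago.
print(cleared_for_use(date(2024, 6, 20), date(2024, 12, 1), date(2024, 6, 10), 7, False))
```

The point of the gate is that a valid annual certificate alone is not enough: a missed routine check or an unreviewed change blocks use just as an overdue calibration would.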

G-IMS is well positioned here because its technical benchmarking spans advanced metrology, electrical test, industrial optics, non-contact inspection, and environmental sensing. That breadth matters when one quality system must govern multiple measurement technologies. Instead of using separate logic for each department, teams can build a harmonized control framework that still respects technology-specific requirements.

A 6-point control checklist for audit-ready calibration

- Maintain a current calibration register with asset ID, location, owner, due date, service provider, and status of all controlled accessories.

- Define pre-use verification steps, such as artifact checks for 3D measurement, reference target checks for vision systems, and warm-up plus self-test routines for RF instruments.

- Document environmental conditions where relevant, including temperature, humidity, vibration exposure, and clean power or grounding conditions.

- Create a clear response path for out-of-tolerance findings, including product impact review, segregation of affected data, and recalibration or repair decisions.

- Train operators on what a valid status label means, which checks are mandatory, and when use must stop pending quality review.

- Retain records for a defined period based on internal policy, customer requirements, and sector expectations, commonly multiple years for critical systems.
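The first checklist item, the calibration register, lends itself to a short example. The sketch below assumes a hypothetical `RegisterEntry` record built from the fields named in that item and a `needs_attention` helper that flags entries ahead of a planned audit date; both names and the flagging rules are illustrative.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Tuple


@dataclass
class RegisterEntry:
    """One row of a hypothetical calibration register."""
    asset_id: str
    location: str
    owner: str
    due_date: date
    service_provider: str
    accessories_controlled: bool   # status of all controlled accessories


def needs_attention(entries: List[RegisterEntry], audit_date: date) -> List[Tuple[str, List[str]]]:
    """Return (asset_id, reasons) for entries that should be resolved before the audit."""
    flagged = []
    for entry in entries:
        reasons = []
        if entry.due_date <= audit_date:
            reasons.append("calibration due on or before audit date")
        if not entry.accessories_controlled:
            reasons.append("accessory status not controlled")
        if reasons:
            flagged.append((entry.asset_id, reasons))
    return flagged


register = [
    RegisterEntry("CMM-01", "Lab A", "Quality", date(2024, 7, 1), "Provider X", True),
    RegisterEntry("SA-02", "RF Bench", "Test Eng", date(2025, 1, 15), "Provider Y", False),
    RegisterEntry("TH-03", "Cleanroom", "Facilities", date(2025, 3, 1), "Provider X", True),
]
for asset_id, reasons in needs_attention(register, audit_date=date(2024, 7, 15)):
    print(asset_id, reasons)
```

Running a check like this at the start of the readiness window leaves time to schedule service or document accessories before the audit rather than during it.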

Common implementation mistakes

One common mistake is treating the instrument body as the only controlled item. In reality, probes, cables, fixtures, environmental enclosures, and software versions may all influence the result. Another frequent issue is failing to reassess calibration after relocation. Even moving a system between rooms can matter if the application depends on thermal stability, vibration isolation, or optical geometry.

A second mistake is using a fixed interval without reviewing actual usage intensity. A device running one shift per week may not need the same risk approach as one operating 24/7, but high-criticality use may still justify tighter checks. Interval decisions should be based on history, environment, stability, and consequence of error, not on habit alone.

A third mistake is letting certificate files sit outside the workflow. If operators cannot quickly confirm the current status, or if procurement cannot verify service scope during renewals, the quality system becomes reactive. Audit-ready calibration means the record is accessible, understandable, and linked to operational control.

FAQ: what information researchers and operators ask most often

How often should equipment be calibrated against NIST-traceable standards?

There is no universal interval. Many organizations use 6-month or 12-month cycles as a starting point, but the correct interval depends on stability, usage frequency, environmental exposure, and the consequence of measurement error. Critical instruments may also require interim verification every day, every shift, or before key qualification runs. The interval should be justified by risk and performance history, not copied from another asset category.

Is NIST traceability the same as accreditation?

No. Traceability refers to an unbroken chain of comparisons back to recognized standards. Accreditation refers to a laboratory being formally assessed for competence against a defined standard, most commonly ISO/IEC 17025. In procurement and audit reviews, teams should understand both. A traceable certificate may still need scope review, and an accredited service must still match the instrument configuration and measurement range actually used.
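The idea of an unbroken chain can be illustrated with a small lookup that walks from an instrument to the reference it was calibrated against, and so on, until it reaches a national standard. This is a simplified sketch: the function name, the dictionary layout, and the asset names are hypothetical, and a real traceability review would also examine the uncertainty documented at each link.

```python
from typing import Dict, List, Optional, Set


def trace_to_national_standard(
    start: str,
    calibrated_against: Dict[str, str],
    national_references: Set[str],
) -> Optional[List[str]]:
    """Follow calibration links from `start`; return the path, or None if the chain is broken."""
    path = [start]
    seen = {start}
    current = start
    while current not in national_references:
        parent = calibrated_against.get(current)
        if parent is None or parent in seen:
            # Missing link or circular reference: the chain is not unbroken.
            return None
        path.append(parent)
        seen.add(parent)
        current = parent
    return path


# Hypothetical records: each key was calibrated against its value.
links = {
    "CMM-01": "gauge-block-set-7",
    "gauge-block-set-7": "lab-master-blocks",
    "lab-master-blocks": "NIST",
    "SA-02": "cable-kit-3",   # no record of what the cable kit was calibrated against
}
print(trace_to_national_standard("CMM-01", links, {"NIST"}))
print(trace_to_national_standard("SA-02", links, {"NIST"}))
```

Accreditation is a separate question that this walk does not answer: it concerns whether the laboratory performing each link was assessed as competent, not whether the links exist.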

What should be checked after an instrument is repaired or moved?

At minimum, review whether the repair affected measurement performance, whether a post-service calibration or verification is required, and whether accessories or software changed. After relocation, confirm leveling, environmental suitability, reference checks, and any required alignment. For sensitive systems such as optical inspection platforms or dimensional metrology stations, event-based verification is often necessary even if the formal calibration due date is still months away.

What are the biggest red flags during an audit?

Typical red flags include missing certificates, mismatched serial numbers, overdue status labels, undocumented accessory changes, unclear uncertainty statements, and no impact assessment after out-of-tolerance results. Another red flag is when operators do not know the difference between calibration, verification, and simple functional checks. That gap often signals a weak control culture rather than a single documentation problem.

Why work with a benchmarking partner that understands both instruments and compliance

For many companies, the hardest part is not finding a calibration vendor. It is making defensible decisions across mixed technologies, different sites, and competing operational priorities. G-IMS is built for that decision layer. Its multidisciplinary structure covers Advanced Metrology & 3D Scanning, Industrial Optics & Photonic Sensors, Electrical Test & High-Frequency Measurement, Non-Contact Vision Inspection Systems, and Environmental Monitoring & Specialized Sensors. That means buyers and users can evaluate calibration readiness with both technical depth and cross-functional consistency.

If you are reviewing NIST traceability requirements for a new purchase, preparing for an external audit in the next 2–4 weeks, or trying to standardize calibration logic across different instrument classes, a benchmarking-led approach can save time and reduce avoidable gaps. It also helps procurement avoid narrow decisions based only on service price or brand familiarity.

You can consult G-IMS on specific topics such as parameter confirmation, calibration scope review, product selection for metrology or RF test, delivery cycle planning, compliance requirement mapping, sample or application feasibility, and quotation comparison for multi-vendor environments. This is especially useful when one project combines dimensional measurement, visual inspection, and environmental sensing in the same quality workflow.

If your team needs support, prepare 4 items before reaching out: the instrument list, current calibration status, key application tolerances, and expected audit or delivery timeline. With that information, discussions can move quickly from general questions to an actionable plan covering service model, control points, documentation needs, and budget-aware implementation options.
