Environmental Monitoring Gaps That Trigger Compliance Trouble

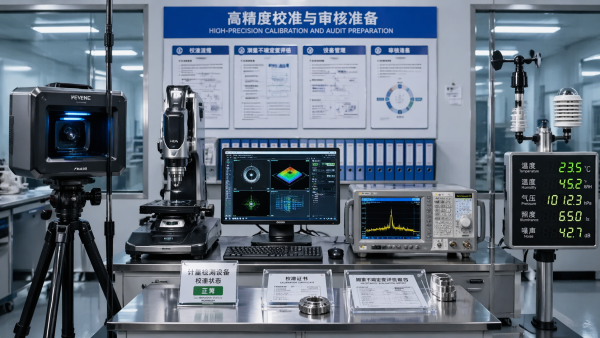

Environmental monitoring failures rarely happen in isolation. They often reveal weak links across sensing technology, industrial sensors, and compliance workflows. For teams managing precision production, from 3D scanning and coordinate measuring machines to electrical test, spectrum analyzers, and hyperspectral imaging, even small monitoring gaps can trigger costly regulatory trouble. This article explains where risks emerge, how IEEE and NIST standards shape expectations, and what decision-makers can do to stay audit-ready.

In practice, compliance trouble often starts with routine assumptions: a sensor is “close enough,” a calibration interval is extended from 6 months to 12 months, an alarm threshold is copied from another site, or environmental data is stored but not reviewed. Across regulated manufacturing, laboratories, electronics, aerospace, energy, pharmaceuticals, and advanced R&D environments, those small gaps can distort test results, compromise traceability, and weaken audit defensibility.

For technical evaluators, procurement teams, quality leaders, operators, and project managers, the challenge is not simply buying more sensors. It is building a monitoring architecture that connects measurement accuracy, environmental stability, data integrity, and response procedures. That is where a benchmarking-driven view, such as the one promoted by G-IMS, becomes useful: it helps organizations assess not just hardware specifications, but the operational logic behind reliable compliance.

Where Environmental Monitoring Gaps Usually Begin

The first compliance gap usually appears at the boundary between process equipment and the surrounding environment. Many organizations tightly control product tolerances to microns, millivolts, or parts per million, yet monitor ambient temperature, humidity, vibration, particles, and airborne contaminants with broad limits or inconsistent intervals. In metrology rooms, electrical test labs, clean production cells, and optical inspection areas, that mismatch creates silent risk.

A coordinate measuring machine may be validated at 20°C, but if room temperature drifts between 18°C and 24°C during a shift, dimensional repeatability can degrade. A spectrum analysis bench may meet performance expectations in controlled conditions, yet unstable grounding, EMI exposure, or humidity swings may alter results. Hyperspectral imaging systems and photonic sensors are also sensitive to environmental light, heat buildup, and particulate interference that are often tracked inconsistently.

Another common issue is fragmented ownership. EHS teams may manage air quality, facilities teams may monitor HVAC, quality teams may review calibration, and production teams may react to alarms. If no single workflow links these functions, the organization may collect 24/7 data but still fail to demonstrate control during an audit. Regulators and customers often look for evidence of action, not just evidence of measurement.

In many facilities, trouble emerges from 4 recurring weaknesses: sensor placement, threshold design, calibration discipline, and data review frequency. Even a high-grade sensor can underperform if mounted near a vent, shielded by equipment, or left unverified for 9–18 months. Likewise, alarm logic based on a single upper limit can miss drift patterns that develop over 2–6 weeks before a formal out-of-spec event occurs.
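To make the drift point concrete, here is a minimal Python sketch that fits a straight line to a series of daily mean readings and estimates how long the trend would take to reach an upper limit. The function names, the sample values, and the 22.0 limit are illustrative assumptions, and a plain least-squares line is only one of several ways to look for drift.

```python
from typing import Optional, Sequence


def drift_slope(values: Sequence[float]) -> float:
    """Least-squares slope of the values against their index (units per sample)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    num = sum((i - mean_x) * (v - mean_y) for i, v in enumerate(values))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return num / den


def samples_until_limit(values: Sequence[float], upper_limit: float) -> Optional[float]:
    """Estimate how many more samples pass before the fitted trend reaches upper_limit.

    Returns None when the trend is flat or falling, and 0.0 when the fitted
    line is already at or above the limit.
    """
    slope = drift_slope(values)
    if slope <= 0:
        return None
    n = len(values)
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    fitted_last = mean_y + slope * ((n - 1) - mean_x)
    if fitted_last >= upper_limit:
        return 0.0
    return (upper_limit - fitted_last) / slope


# Hypothetical daily mean temperatures in degrees C; none exceeds the limit.
daily_means = [20.0, 20.1, 20.2, 20.3, 20.4]
days_left = samples_until_limit(daily_means, upper_limit=22.0)
```

In this made-up series every reading is well below the limit, so a single upper-limit alarm stays silent, yet the fitted trend gives an estimate of when the limit would be reached if nothing changes.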

Typical weak points in cross-industry environments

- Temperature control zones are defined too broadly, such as one sensor covering 80–120 square meters despite multiple heat sources.

- Humidity is logged every 30 minutes, while sensitive inspection or test processes change conditions within 5–10 minutes.

- Particle, VOC, or trace-gas monitoring is installed at room level, not at the actual process height where exposure matters.

- Alarm escalation stops at notification and does not trigger containment, retest, batch hold, or maintenance action.
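The logging-interval weak point can be illustrated with a short Python sketch. It checks whether any sample lands inside a short excursion for a given logging interval. The excursion start time, its length, and the two-hour window are hypothetical values chosen only for illustration.

```python
from typing import List


def sample_times(interval_min: int, duration_min: int) -> List[int]:
    """Minutes (from the start of the window) at which a reading is logged."""
    return list(range(0, duration_min + 1, interval_min))


def excursion_seen(interval_min: int, start_min: int, length_min: int,
                   duration_min: int = 120) -> bool:
    """True if at least one logged sample falls inside the excursion window."""
    end_min = start_min + length_min
    return any(start_min <= t < end_min
               for t in sample_times(interval_min, duration_min))


# Hypothetical 8-minute humidity excursion starting at minute 34.
seen_at_30_min = excursion_seen(interval_min=30, start_min=34, length_min=8)
seen_at_5_min = excursion_seen(interval_min=5, start_min=34, length_min=8)
```

With these assumed numbers, the 30-minute log records nothing unusual while the 5-minute log captures the event, which is the mismatch the second bullet describes.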

The table below summarizes how small monitoring gaps translate into larger compliance exposure across common industrial and laboratory settings.

The key lesson is simple: compliance trouble is rarely caused by a missing sensor alone. It is usually caused by a broken chain between environmental condition, measurement confidence, documented action, and decision accountability.

How Standards Shape Monitoring Expectations

Organizations often ask whether they need to follow a single environmental monitoring rulebook. In reality, expectations are built from several layers: product regulations, customer requirements, facility controls, laboratory practices, and measurement traceability frameworks. IEEE and NIST references matter because they reinforce a disciplined approach to measurement reliability, uncertainty awareness, calibration, and defensible data handling.

For example, when a lab or factory claims a test result is valid within a certain tolerance, the surrounding environmental conditions become part of that claim. NIST-aligned traceability principles support the idea that a result must be linked to recognized references through documented calibration and uncertainty logic. IEEE-related practices are especially influential in electrical, electronics, RF, and instrumentation environments where drift, signal integrity, interference, and repeatability directly affect conformance decisions.

ISO/IEC 17025 is also highly relevant wherever testing and calibration evidence supports release, certification, supplier approval, or dispute resolution. It does not prescribe one fixed temperature or humidity level for every industry, but it does require organizations to control conditions that influence validity. That means a company must define what matters, prove it is monitored, and show how deviations are handled within a documented system.

A practical implication for procurement and quality teams is that monitoring specifications should never be written only as sensor accuracy. A complete compliance-oriented specification should include at least 5 elements: measurement range, stated accuracy, calibration interval, data logging frequency, and alarm workflow. In many cases, response time under 60 seconds and data retention of 12–36 months are more important to auditors than a marginal difference in catalog precision.
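One way to keep those five elements visible during specification review is a simple completeness check. The sketch below is an assumption about how a team might record a specification; the class name, field names, and example values are hypothetical and not taken from any standard.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class MonitoringSpec:
    """The five elements named above; None means 'not yet specified'."""
    measurement_range: Optional[str] = None
    stated_accuracy: Optional[str] = None
    calibration_interval_months: Optional[int] = None
    logging_interval_seconds: Optional[int] = None
    alarm_workflow: Optional[str] = None


def missing_elements(spec: MonitoringSpec) -> List[str]:
    """Names of the elements that are still unspecified, in declaration order."""
    return [f.name for f in fields(spec) if getattr(spec, f.name) is None]


# A hypothetical spec written only in terms of sensor accuracy.
accuracy_only = MonitoringSpec(stated_accuracy="+/-0.2 degC")
gaps = missing_elements(accuracy_only)
```

A specification that lists only accuracy leaves four of the five elements open, which is the gap this paragraph warns about.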

What auditors and technical reviewers usually expect

- A clear rationale for why each environmental parameter is monitored and how it affects product or test validity.

- Calibration and verification records tied to recognized references, with defined intervals such as 6 months, 12 months, or risk-based checks.

- Time-stamped data logs with secure retention, review responsibility, and escalation records for out-of-limit events.

- Evidence that corrective action includes containment, retesting, maintenance, or process adjustment rather than a simple alarm acknowledgment.

Standards are about defensibility, not paperwork volume

One frequent misunderstanding is that compliance means producing more reports. In reality, strong environmental monitoring reduces report burden because the system is already structured. When thresholds, calibration, data review, and CAPA links are predefined, an organization can answer audit questions in hours rather than days. That speed matters when customer visits, certification reviews, or incident investigations occur with little notice.

For distributors and project integrators, this is also a commercial issue. Buyers increasingly ask not only for sensors, analyzers, or monitoring nodes, but for documented deployment logic, commissioning support, and integration into quality management workflows. Suppliers who cannot support that wider requirement are often compared only on price.

The Hidden Cost of Poor Monitoring in Precision Operations

Environmental monitoring gaps create costs far beyond direct nonconformance. In precision manufacturing and R&D environments, the first visible symptom may be retest volume, scrap, unstable first-pass yield, or unexplained variation between shifts and sites. By the time a formal compliance issue appears, the organization may already have absorbed weeks of inefficiency.

Consider a production line using 3D scanning, machine vision, electrical test, and final dimensional verification. If temperature and humidity drift are not synchronized across these stations, one process may reject parts that another process would pass. The result is not only false rejection, but also damaged confidence in the entire inspection chain. In regulated supply chains, inconsistency between stations is often treated as a systems problem rather than an isolated equipment issue.

The commercial impact can spread across 3 levels. At the operational level, teams spend extra hours on troubleshooting and containment. At the quality level, deviation records and CAPA activity increase. At the business level, delayed shipments, customer complaints, and procurement re-evaluation may follow. Even when the root cause is “only” environmental instability, the downstream effect can influence contract renewals and approved supplier status.

Monitoring gaps are especially costly in environments where values are interpreted rather than simply read. Optical sensors, photonic systems, hyperspectral imaging platforms, and high-frequency measurement tools all depend on stable conditions for consistent signal interpretation. A 1–2% shift in baseline response may not trigger a hardware failure, but it can alter pass/fail decisions, spectral classification, or trend analysis.

Cost drivers that decision-makers often underestimate

The table below shows how environmental weakness affects cost structure in ways that are rarely captured in the original equipment purchase decision.

The conclusion is not that every site needs the most expensive monitoring platform. It is that environmental risk should be evaluated against the cost of invalid data, delayed decisions, and poor audit readiness. For many organizations, a well-structured monitoring upgrade pays back through fewer disruptions, not through dramatic utility savings.

How to Build an Audit-Ready Monitoring Framework

An audit-ready framework starts with mapping environmental variables to business-critical outcomes. Instead of asking, “What should we monitor?” ask, “Which conditions can change a measurement, test result, safety decision, or release judgment?” This approach works across industries because it aligns monitoring with risk, not with generic facility practice.

A strong implementation model usually follows 5 steps: risk classification, sensor architecture design, threshold definition, data governance, and response validation. Step 1 identifies where environmental conditions influence compliance-sensitive outcomes. Step 2 selects sensor types and placement logic. Step 3 defines action and warning levels. Step 4 secures records, review frequency, and retention. Step 5 confirms that alarms actually trigger usable action.

For technical teams, threshold setting is one of the most important tasks. A single upper and lower limit is often too simple. In many environments, it is better to use at least 3 levels: operating target, warning band, and action limit. For example, a room might target 20°C ±1°C, trigger warning at ±1.5°C, and require action at ±2°C. Similar logic can be applied to relative humidity, particle count, vibration, pressure differential, or trace-gas concentration.
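The three-level example above can be sketched in a few lines of Python. The defaults mirror the 20°C example in the text; the function name, the label strings, and the choice to treat readings between the target band and the warning level as a separate "outside_target" state are assumptions made for illustration.

```python
def classify(reading: float, target: float = 20.0, tolerance: float = 1.0,
             warning: float = 1.5, action: float = 2.0) -> str:
    """Classify a reading by its absolute deviation from the target."""
    deviation = abs(reading - target)
    if deviation >= action:
        return "action"          # at or beyond the action limit
    if deviation >= warning:
        return "warning"         # at or beyond the warning level
    if deviation <= tolerance:
        return "in_target"       # inside the operating target band
    return "outside_target"      # past the target band, below warning


# Hypothetical room temperature readings in degrees C.
states = [classify(r) for r in (20.4, 21.2, 21.5, 18.0)]
```

The same function applies to other parameters by passing a different target and band widths, for example relative humidity or pressure differential.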

Data governance is equally critical. Logging at 1-minute intervals may be justified for RF labs, sensitive optics, or contamination-controlled areas, while 5-minute or 15-minute intervals may be enough for general production zones. What matters is consistency with process sensitivity and the ability to reconstruct events over at least 12 months, and in many regulated contexts 24–36 months is preferable.
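Being able to reconstruct events depends on the log being continuous. As a minimal sketch, the Python below scans a list of timestamps for gaps longer than the expected logging interval. The function name, the 5-second slack, and the sample timestamps are hypothetical.

```python
from datetime import datetime, timedelta
from typing import Iterable, List, Tuple


def find_gaps(timestamps: Iterable[datetime],
              expected_interval: timedelta,
              slack: timedelta = timedelta(seconds=5)
              ) -> List[Tuple[datetime, datetime]]:
    """Return (previous, next) pairs whose spacing exceeds the expected interval."""
    ordered = sorted(timestamps)
    return [(a, b) for a, b in zip(ordered, ordered[1:])
            if b - a > expected_interval + slack]


# Hypothetical 1-minute log with readings missing at minutes 3 and 4.
base = datetime(2024, 1, 1, 0, 0)
stamps = [base + timedelta(minutes=m) for m in (0, 1, 2, 5)]
gaps = find_gaps(stamps, expected_interval=timedelta(minutes=1))
```

A check like this could run as part of periodic data review, so that missing periods are explained before an auditor asks about them.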

Recommended implementation checkpoints

- Define 3 categories of monitored areas: critical, controlled, and general, with different sensor density and review frequency.

- Use commissioning tests over 7–14 days to capture real drift, HVAC cycling, occupancy effects, and machine-generated heat.

- Verify alarm escalation through drills at least every 6–12 months rather than assuming notification equals response.

- Link monitoring records to maintenance, deviation handling, and test validity review in one documented workflow.

Selection criteria for buyers and evaluators

Procurement and project teams should compare monitoring solutions using at least 4 dimensions: metrological confidence, integration capability, serviceability, and regulatory usefulness. A device with strong accuracy but poor calibration support may create future gaps. A cloud dashboard without secure audit trails may look modern but fail quality review. A system with no spare sensor strategy may increase downtime despite a low purchase price.

For distributors and system integrators, the most competitive offers usually combine hardware, site survey input, commissioning, user training, and periodic verification support. This is especially relevant in facilities where environmental monitoring supports CMM rooms, electrical test benches, clean production cells, or multisensor inspection platforms at the same time.

Common Mistakes in Procurement, Deployment, and Daily Use

One of the biggest procurement mistakes is treating all environmental sensors as interchangeable. In reality, the right solution depends on measurement uncertainty needs, contamination sensitivity, facility layout, connectivity requirements, and response workflow maturity. Buying by unit price alone often leads to higher lifecycle cost within 12–24 months.

Another frequent error is under-scoping deployment. A facility may install one sensor per room because that fits budget, even though heat load, airflow, and occupancy vary significantly within the same area. In large production cells or laboratories with mixed equipment, 3–6 strategically placed sensing points may be more reliable than one premium device in the wrong location.

Daily use also matters. Operators need clear instructions on what to do when warnings appear. If the only instruction is “notify supervisor,” response quality will vary by shift and experience level. Standard operating procedures should define who pauses testing, who isolates product, who reviews trend history, and who authorizes restart. Without this discipline, alarms become background noise rather than compliance controls.

Finally, many organizations fail to revalidate monitoring after layout changes. Moving a CMM, adding a new oven, changing an HVAC diffuser, or enclosing a test area can alter airflow and temperature profiles within days. A practical rule is to reassess environmental mapping whenever a significant equipment, layout, or throughput change occurs.

Procurement checklist for lower compliance risk

- Check whether the supplier can specify accuracy, drift behavior, calibration support, and recommended recalibration interval.

- Confirm whether data export, audit logs, alarm records, and integration options match your quality and IT environment.

- Ask how the system performs under real installation conditions, not just ideal catalog conditions.

- Require a deployment plan covering placement logic, acceptance criteria, training, and response workflow.

FAQ for technical and purchasing teams

How often should environmental sensors be calibrated?

A common interval is every 12 months, but higher-risk applications may justify 6-month cycles or interim verification checks every quarter. The right schedule depends on drift history, process sensitivity, and how heavily the data supports release or compliance decisions.
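As an illustration, a calibration due date can be computed from the last calibration date and the chosen interval. This Python sketch uses only the standard library; the function names and dates are hypothetical, and clamping to the last day of a shorter month is one possible convention rather than a requirement of any standard.

```python
import calendar
from datetime import date


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day to the end of shorter months."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def is_overdue(last_calibrated: date, interval_months: int, today: date) -> bool:
    """True once today is later than the calibration due date."""
    return today > add_months(last_calibrated, interval_months)


# Hypothetical sensor on a 6-month cycle, last calibrated at the end of August.
due = add_months(date(2024, 8, 31), 6)
```

The interval itself would come from the risk-based reasoning described above, not from the code.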

What data logging interval is usually appropriate?

Critical test and inspection areas often need 1-minute to 5-minute intervals. General controlled areas may be adequately managed at 15-minute intervals. The key is proving that the interval can capture meaningful excursions before product, test, or safety impact occurs.

Which industries should prioritize environmental monitoring upgrades first?

The strongest business case usually appears in sectors with precision measurement, contamination sensitivity, electronics reliability, regulated testing, or advanced optical and sensing workflows. That includes semiconductor, aerospace, electronics, medical manufacturing, specialty labs, energy systems, and high-accuracy machining environments.

Turning Monitoring Data Into Actionable Compliance Control

The most mature organizations do not stop at collecting environmental data. They convert that data into trend analysis, root-cause investigation, maintenance planning, and sourcing decisions. This is where environmental monitoring becomes part of a broader intelligent measurement strategy rather than a standalone facility function.

For example, recurring humidity warnings in one zone may reveal HVAC imbalance, door-opening frequency, material storage weakness, or inadequate sensor placement. Repeated temperature excursions during one shift may correlate with machine startup cycles or occupancy peaks. Once those patterns are understood, the site can redesign controls instead of repeatedly reacting to symptoms.
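A first pass at this kind of pattern finding can be as simple as counting excursions per shift. The Python sketch below assumes three 8-hour shifts starting at 06:00, 14:00, and 22:00; the shift boundaries, names, and timestamps are hypothetical and would need to match the site's actual schedule.

```python
from collections import Counter
from datetime import datetime
from typing import Iterable


def shift_of(ts: datetime) -> str:
    """Map a timestamp to an assumed shift: day, evening, or night."""
    if 6 <= ts.hour < 14:
        return "day"
    if 14 <= ts.hour < 22:
        return "evening"
    return "night"


def excursions_by_shift(excursion_times: Iterable[datetime]) -> Counter:
    """Count how many excursions started in each shift."""
    return Counter(shift_of(t) for t in excursion_times)


# Hypothetical excursion start times from one week of logs.
events = [
    datetime(2024, 3, 4, 6, 10),
    datetime(2024, 3, 5, 6, 25),
    datetime(2024, 3, 5, 15, 0),
    datetime(2024, 3, 6, 23, 30),
]
counts = excursions_by_shift(events)
```

A cluster shortly after the start of one shift, as in this made-up data, would be a prompt to look at machine startup cycles or occupancy rather than proof of either.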

This data-driven view also strengthens procurement and capital planning. When decision-makers can show that 3 sites experience similar drift profiles, they can standardize sensor platforms, calibration service intervals, and response SOPs. That reduces variation across plants and improves supplier comparability. For large enterprises and channel partners, standardization across even 2–5 facilities can significantly simplify audits, spares, and training.

G-IMS-aligned thinking is especially relevant here because it connects environmental monitoring with broader precision ecosystems: advanced metrology, industrial optics, electrical test, machine vision, and specialized sensing. The practical value is not only better measurement hardware, but better decision logic from raw signal to compliant action.

What a stronger control model looks like

- Environmental data is reviewed by exception in real time and by trend on a weekly or monthly basis.

- Alarm records are tied to containment, investigation, and restart authorization instead of stand-alone notification.

- Calibration, verification, and sensor replacement are planned using risk and drift history, not only calendar reminders.

- Monitoring architecture is reviewed whenever process change, throughput increase, or equipment relocation occurs.
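The idea that an alarm is not finished at notification can be expressed as an ordered set of stages. The stage names and functions in this Python sketch are hypothetical; a real workflow would follow the site's own SOPs and quality system.

```python
from typing import List, Sequence

# Assumed alarm lifecycle, from first signal to authorized restart.
STAGES: List[str] = [
    "raised",
    "acknowledged",
    "contained",
    "investigated",
    "restart_authorized",
]


def next_stage(current: str) -> str:
    """Return the stage that must follow the current one."""
    i = STAGES.index(current)  # raises ValueError for unknown stages
    if i == len(STAGES) - 1:
        raise ValueError("alarm is already closed")
    return STAGES[i + 1]


def can_restart(history: Sequence[str]) -> bool:
    """True only if every stage was recorded, in order, with none skipped."""
    return list(history) == STAGES


# An alarm that was acknowledged but never contained or investigated.
notified_only = ["raised", "acknowledged"]
ready = can_restart(notified_only)
```

Under this model, acknowledging an alarm never permits a restart on its own, which matches the second bullet above.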

Environmental monitoring gaps become compliance trouble when organizations separate sensing from accountability. The fix is not simply more devices, but a more disciplined system: correct parameter selection, validated thresholds, traceable calibration, actionable alarms, and records that stand up to customer and regulatory scrutiny. For research teams, operators, quality leaders, technical evaluators, and procurement managers, that approach protects both measurement confidence and business continuity.

If your organization is reviewing monitoring architecture for precision production, test laboratories, inspection workflows, or multisite compliance readiness, now is the right time to benchmark current gaps against actual operational risk. Contact us to discuss a tailored evaluation, compare monitoring options, and explore solution pathways that support reliable measurement, stronger audit readiness, and smarter industrial decision-making.
