Electrical Test Errors That Come From Fixture Design

Electrical test accuracy often fails long before software or instruments do: at the fixture design stage. For teams working with spectrum analyzers, industrial sensors, and broader sensory technology platforms, small layout flaws can trigger unstable signals, false failures, and costly rework. This article explains how fixture design creates hidden test errors and how better design decisions, aligned with IEEE and NIST standards, improve reliability, repeatability, and production confidence.

In high-mix manufacturing, R&D validation, and regulated quality environments, the fixture is not a passive accessory. It is part of the measurement system. When contact force, cable routing, grounding, shielding, connector wear, or mechanical alignment are overlooked, even a premium tester can return misleading results. For operators, that means unstable retests. For quality leaders, it means uncertain traceability. For procurement and project teams, it means hidden lifecycle cost.

Across electronics, aerospace, semiconductor support equipment, industrial automation, and sensor integration programs, fixture design errors often appear as test errors first and root causes later. Understanding where these errors originate helps organizations improve repeatability, reduce debug time, and make better fixture specifications before production ramps from pilot lots of 20–50 units to steady volumes of 500–5,000 units per month.

Why Fixture Design Directly Affects Electrical Test Results

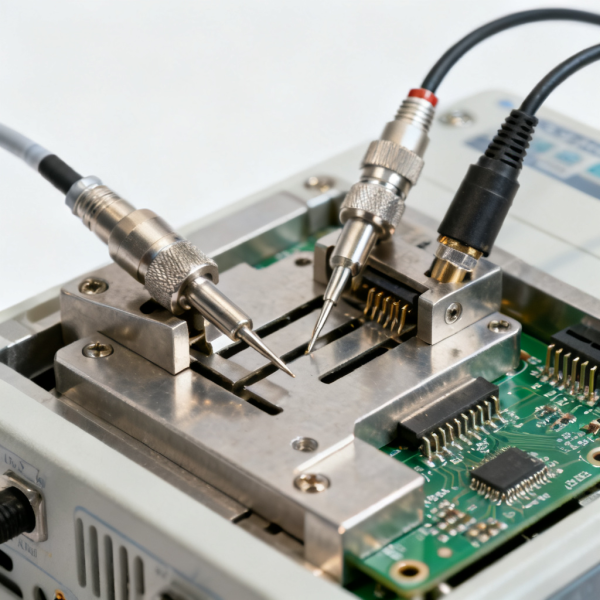

An electrical test fixture defines the physical path between the device under test and the instrument. That path adds resistance, capacitance, inductance, contact variability, and environmental sensitivity. At low frequencies, these effects may look minor. At higher frequencies, with fast edge rates, or in low-level sensor measurements, they become large enough to change pass/fail outcomes by measurable margins such as 1–3 dB, tens of milliohms, or timing shifts in the nanosecond range.
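To make the "tens of milliohms" point concrete, here is a minimal sketch of how series contact resistance inflates a two-wire resistance reading. The 25 mΩ per contact and the DUT values are illustrative assumptions, not figures from any specific fixture, and the function name is hypothetical.

```python
def two_wire_error_percent(dut_ohms: float, contact_ohms_per_side: float) -> float:
    """Percent error when two series contact resistances add to the DUT reading."""
    measured = dut_ohms + 2 * contact_ohms_per_side  # one contact on each lead
    return (measured - dut_ohms) / dut_ohms * 100.0


# Assumed example: 25 mOhm per contact, three hypothetical DUT resistances.
for dut in (0.1, 1.0, 100.0):
    err = two_wire_error_percent(dut, 0.025)
    print(f"DUT {dut:>6.1f} ohm: +{err:.2f}% from 2 x 25 mOhm contacts")
```

The same contacts that are negligible on a 100 Ω part dominate the reading on a 0.1 Ω part, which is one reason low-resistance measurements are usually made with a four-wire (Kelvin) connection.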

This is especially relevant when testing RF modules, cable assemblies, printed circuit boards, industrial transducers, and embedded sensing devices. A fixture with inconsistent impedance, poor return path control, or uneven contact pressure can generate reflections, noise pickup, and intermittent opens. Teams often blame software scripts or calibration drift first, yet the real source may be the mechanical and electrical interface created by the fixture.
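The reflection effect mentioned above can be estimated with the standard reflection-coefficient formula. The sketch below assumes a 50 Ω reference system and purely resistive (real-valued) mismatches; the fixture impedances are hypothetical examples.

```python
import math


def reflection_coefficient(z_load: float, z0: float = 50.0) -> float:
    """Voltage reflection coefficient for a real load on a line of impedance z0."""
    return (z_load - z0) / (z_load + z0)


def return_loss_db(gamma: float) -> float:
    """Return loss in dB (larger is better); infinite for a perfect match."""
    return math.inf if gamma == 0 else -20.0 * math.log10(abs(gamma))


def mismatch_loss_db(gamma: float) -> float:
    """Power lost to reflection, in dB, at a single discontinuity."""
    return -10.0 * math.log10(1.0 - gamma ** 2)


# Hypothetical fixture section impedances in a 50 ohm system.
for z in (55.0, 60.0, 75.0):
    g = reflection_coefficient(z)
    print(f"{z:.0f} ohm: gamma={g:.3f}, RL={return_loss_db(g):.1f} dB, "
          f"mismatch loss={mismatch_loss_db(g):.3f} dB")
```

A single discontinuity costs little power on its own. The larger risk in a fixture is usually several discontinuities interacting, which produces frequency-dependent ripple that a single-point calculation like this does not capture.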

In practical terms, a fixture should be treated as a controlled measurement component. If the instrument is specified to deliver repeatability within a narrow range, the fixture cannot be allowed to vary beyond that budget. Many organizations work with an internal allowance of 20%–30% of total measurement uncertainty for the fixture contribution alone, especially in critical acceptance testing and engineering validation.
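As a rough illustration of such an allowance, the sketch below takes a total uncertainty target, reserves a fraction of it for the fixture, and shows what remains for everything else if the contributions are assumed independent and combined by root-sum-square. The 0.5 dB target, the 25% fraction, and the function names are assumptions for the example only.

```python
import math


def fixture_allowance(total_uncertainty: float, fraction: float = 0.25) -> float:
    """Portion of the total uncertainty reserved for the fixture."""
    if not 0.0 < fraction < 1.0:
        raise ValueError("fraction must be between 0 and 1")
    return total_uncertainty * fraction


def remaining_rss(total_uncertainty: float, fixture_part: float) -> float:
    """Budget left for other contributors, assuming independent RSS combination."""
    return math.sqrt(total_uncertainty ** 2 - fixture_part ** 2)


total_db = 0.5  # assumed total measurement uncertainty target
allowed = fixture_allowance(total_db, 0.25)
print(f"Fixture allowance: {allowed:.3f} dB")
print(f"Left for instrument, cables, environment (RSS): "
      f"{remaining_rss(total_db, allowed):.3f} dB")
```

A measured fixture contribution, for example from a repeatability study, can then be compared against `allowed` as a release criterion.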

For B2B buyers and technical evaluators, this means the fixture design review must happen before line release, not after yield loss appears. A 2-week design review and prototype iteration can prevent months of rework, repeated operator training, and supplier disputes over false rejects. In sectors where traceability and conformity matter, fixture decisions are also tied to documentation quality, maintenance intervals, and audit readiness.

Common error mechanisms introduced by fixture design

- Contact resistance drift caused by worn probes, oxidized interfaces, or insufficient force, often visible after 50,000–200,000 cycles depending on probe type and contamination level.

- Signal integrity distortion from excessive lead length, poor impedance control, and loop area expansion, which can be critical above 100 MHz and increasingly severe into the GHz range.

- Grounding and shielding errors that allow EMI ingress or create ground loops, producing unstable readings in low-voltage or high-frequency measurements.

- Mechanical misalignment that changes pin engagement depth, connector seating, or optical/electrical positioning from one test cycle to the next.

Typical symptom-to-cause relationship

The table below helps cross-functional teams identify whether recurring test instability is likely coming from fixture design rather than from the instrument, software, or device itself.

The key conclusion is simple: when symptoms are inconsistent, intermittent, or station-specific, the fixture should be audited early. That audit should cover mechanics, electrical path design, maintenance history, and setup instructions, not just instrument calibration status.

The Most Frequent Fixture Design Mistakes in Production and Validation

The most common fixture failures are not dramatic design flaws. They are small compromises that seem acceptable during prototype build but become expensive in volume production. A cable that is 150 mm longer than necessary, a probe block with uneven planarity, or a ground strap placed for convenience instead of path control can each create measurement spread large enough to trigger false decisions.
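To see why 150 mm of extra cable matters, the following sketch estimates the added propagation delay and the resulting phase shift at a few frequencies. It assumes a velocity factor of 0.66, typical of solid-polyethylene coax; the real value depends on the cable used.

```python
C_M_PER_S = 299_792_458.0  # speed of light in vacuum


def cable_delay_ns(length_m: float, velocity_factor: float = 0.66) -> float:
    """One-way propagation delay of a cable, in nanoseconds."""
    return length_m / (velocity_factor * C_M_PER_S) * 1e9


def phase_shift_deg(length_m: float, freq_hz: float,
                    velocity_factor: float = 0.66) -> float:
    """Phase shift added by the cable at a given frequency, in degrees."""
    return 360.0 * freq_hz * cable_delay_ns(length_m, velocity_factor) * 1e-9


extra_m = 0.150  # the 150 mm of unnecessary cable from the text
print(f"Added delay: {cable_delay_ns(extra_m):.3f} ns")
for f in (10e6, 100e6, 1e9):
    print(f"{f / 1e6:>6.0f} MHz: {phase_shift_deg(extra_m, f):.1f} degrees")
```

Under these assumptions the extra length adds well under a nanosecond of delay, which is negligible at 10 MHz but a large fraction of a cycle at 1 GHz.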

One major mistake is designing for nominal geometry rather than worst-case tolerance stack-up. If the device under test varies by ±0.2 mm in one axis and the fixture also varies by ±0.15 mm, the combined offset can reach ±0.35 mm in the worst case, which may exceed the acceptable contact window in edge connectors, spring probes, or precision sensor interfaces. The result is repeatability loss that appears only in certain lots, operators, or environmental conditions.
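The stack-up arithmetic can be sketched in a few lines. The example below compares the worst-case sum with a statistical root-sum-square estimate for the ±0.2 mm and ±0.15 mm tolerances from the text; the ±0.30 mm contact window is a hypothetical value chosen for illustration.

```python
import math


def worst_case_stack(tolerances_mm: list[float]) -> float:
    """Worst-case stack-up: every tolerance at its limit in the same direction."""
    return sum(abs(t) for t in tolerances_mm)


def rss_stack(tolerances_mm: list[float]) -> float:
    """Statistical stack-up, assuming independent, centered variations."""
    return math.sqrt(sum(t ** 2 for t in tolerances_mm))


tolerances = [0.20, 0.15]   # DUT and fixture tolerances from the text, in mm
contact_window_mm = 0.30    # hypothetical allowable offset for the probe

wc = worst_case_stack(tolerances)
rss = rss_stack(tolerances)
print(f"Worst case: +/-{wc:.2f} mm, within window: {wc <= contact_window_mm}")
print(f"RSS:        +/-{rss:.2f} mm, within window: {rss <= contact_window_mm}")
```

With these numbers the design passes the statistical check but fails the worst-case check, which matches the pattern of failures that show up only in certain lots.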

Another frequent mistake is ignoring service life. Fixture performance changes over time. Probe tips wear, hinge mechanisms loosen, insulators collect residue, and coaxial connectors degrade with repeated mating. If a design cannot be inspected or serviced within a 10–20 minute maintenance window, production teams delay upkeep, and measurement quality degrades gradually until failures become visible in yield reports.

A third mistake is separating electrical and mechanical decisions. The fixture may look mechanically robust but still fail electrically because of uncontrolled loop area, insufficient shielding, or poor isolation between power and signal lines. In mixed-signal or sensor test environments, these oversights can distort analog readings, digital timing, and RF response at the same time.

High-risk design oversights

- Using generic connector placement without modeling insertion path, strain relief, and repeated mating wear.

- Allowing floating grounds or multiple inconsistent return paths in fixtures intended for sensitive analog or RF measurements.

- Routing signal and power conductors in parallel over long sections, increasing coupling and noise susceptibility.

- Failing to define maintenance triggers such as cycle count, contact resistance threshold, or visible wear criteria.

- Skipping correlation checks between engineering fixtures and production fixtures before volume launch.
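The maintenance triggers named in the list above (cycle count, contact resistance threshold, visible wear) can be written down as an explicit check. The sketch below is one possible shape for that logic; the limit values, class name, and function name are hypothetical and should come from probe datasheets and the site's own history.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MaintenanceLimits:
    # Hypothetical limits for illustration only.
    max_cycles: int = 50_000
    max_contact_mohm: float = 50.0


def maintenance_reasons(cycles: int, contact_mohm: float, visible_wear: bool,
                        limits: MaintenanceLimits = MaintenanceLimits()) -> list[str]:
    """Return every trigger that currently calls for fixture service."""
    reasons = []
    if cycles >= limits.max_cycles:
        reasons.append("cycle count limit reached")
    if contact_mohm >= limits.max_contact_mohm:
        reasons.append("contact resistance above threshold")
    if visible_wear:
        reasons.append("visible wear reported")
    return reasons


# An empty list means no trigger has fired.
print(maintenance_reasons(cycles=12_000, contact_mohm=18.0, visible_wear=False))
print(maintenance_reasons(cycles=61_000, contact_mohm=75.0, visible_wear=False))
```

Returning all active reasons, rather than a single yes/no flag, keeps the maintenance record useful for later root-cause analysis.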

Why these mistakes survive early testing

Early builds often use small sample sizes of 5–20 units, experienced operators, and controlled lab conditions around 22–25°C. Under those conditions, marginal fixture designs can appear stable. Once the process moves to multi-shift operation, varied ambient conditions, and hundreds of insertions per day, previously hidden error sources become visible. That is why fixture qualification should include cycle testing, correlation studies, and environmental variation, not only initial functional checks.

For sourcing teams, this also means the lowest initial fixture quote may not represent the lowest ownership cost. A fixture that requires weekly adjustment, causes even a 1% false-fail rate, or extends debug time by 3–5 minutes per unit can create much higher downstream cost than a better-engineered option with clearer service documentation.
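A quick way to put numbers on this is to estimate the debug hours a false-fail rate consumes each month. The sketch below assumes that each false failure costs a fixed number of debug minutes, which is a simplification; the volume and minutes are taken from the ranges mentioned in this article, and the function name is hypothetical.

```python
def monthly_debug_hours(units_per_month: int, false_fail_rate: float,
                        debug_minutes_per_false_fail: float) -> float:
    """Hours per month spent debugging units that failed only because of the fixture."""
    false_fails = units_per_month * false_fail_rate
    return false_fails * debug_minutes_per_false_fail / 60.0


# Assumed scenario: 5,000 units/month, 1% false-fail rate, 4 minutes each.
hours = monthly_debug_hours(5_000, 0.01, 4.0)
print(f"Debug time lost to false fails: {hours:.1f} hours/month")
```

This covers debug labor only. Retest time, line stoppages, scrap from unnecessary rework, and weekly adjustment effort would need to be added for a full ownership-cost comparison between fixture quotes.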

How to Design Fixtures for Repeatability, Correlation, and Lower Measurement Uncertainty

A robust fixture design starts by allocating an error budget. Teams should define how much uncertainty can be introduced by the fixture relative to the total measurement objective. For example, if a process requires tight correlation across 3 stations, the fixture contribution must be controlled through contact quality, stable geometry, and consistent electrical path design. This is where alignment with recognized engineering practice and traceable measurement thinking becomes critical.

For RF and high-frequency work, the fixture should minimize discontinuities and keep path lengths as short as practical. Controlled impedance transitions, appropriate coaxial interfaces, and careful ground reference design reduce reflections and improve repeatability. For low-level industrial sensor signals, shielding, guarding, isolation, and low-noise cable routing may matter more than raw mechanical stiffness alone.

Mechanical consistency is equally important. Use repeatable locators, defined stop surfaces, and materials chosen for dimensional stability across the expected thermal range. In many industrial settings, a fixture may see ambient conditions from 18°C to 30°C, repeated opening cycles, vibration, and operator handling. Materials, hinge design, and wear surfaces must be chosen with that reality in mind.

Documentation completes the design. A fixture that works only when a senior engineer sets it up is not production ready. Build records, service intervals, torque values, connector replacement criteria, and verification steps should be written so that operators, quality teams, and field support personnel can follow the same method across sites or contract manufacturers.

Recommended design controls

The following framework helps engineering, quality, and procurement teams evaluate whether a fixture design is mature enough for sustained use.

A practical takeaway from this comparison is that repeatability does not come from one feature alone. It is the result of coordinated electrical, mechanical, and maintenance controls. If one of those three is weak, long-term correlation usually suffers.

A 5-step implementation sequence

- Define the measurement objective and allowable uncertainty before mechanical design begins.

- Review path length, grounding, shielding, and connector strategy with both test engineers and fixture designers.

- Prototype the fixture and run 30–100 cycle checks to expose contact and alignment variation early.

- Perform station-to-station correlation and operator variation studies before release.

- Publish maintenance limits, replacement intervals, and verification steps as controlled documents.
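The cycle-check and station-correlation steps above can be reduced to a small calculation: measure the same reference unit repeatedly on each station, then compare per-station spread and the offset between station means. The readings, station names, and the 0.05 limit below are made-up illustration values, and a real study would use the 30–100 cycles suggested above rather than four.

```python
from statistics import mean, stdev


def station_summary(readings: dict[str, list[float]]) -> dict[str, tuple[float, float]]:
    """Mean and sample standard deviation of repeated readings per station."""
    return {name: (mean(vals), stdev(vals)) for name, vals in readings.items()}


def max_station_offset(readings: dict[str, list[float]]) -> float:
    """Largest difference between any two station means."""
    means = [mean(vals) for vals in readings.values()]
    return max(means) - min(means)


# Hypothetical readings of one reference unit on three stations.
example_readings = {
    "station_A": [10.02, 10.01, 10.03, 10.02],
    "station_B": [10.00, 10.01, 9.99, 10.00],
    "station_C": [10.10, 10.14, 10.07, 10.12],
}
correlation_limit = 0.05  # assumed allowable offset between stations

for name, (avg, spread) in station_summary(example_readings).items():
    print(f"{name}: mean={avg:.4f}, stdev={spread:.4f}")
offset = max_station_offset(example_readings)
print(f"Max station offset: {offset:.4f}, within limit: {offset <= correlation_limit}")
```

In this made-up data, station C shows both a shifted mean and a wider spread, the station-specific pattern that points toward the fixture rather than the device.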

What Buyers, Quality Teams, and Project Leaders Should Check Before Approving a Fixture

Procurement decisions often focus on lead time and price, but fixture selection should also address repeatability, maintainability, and cross-site consistency. A fixture used in engineering validation may need different features than one intended for 24/7 production. Decision-makers should ask whether the proposed design supports service access, documented replacement parts, and measurable verification criteria rather than relying on vendor promises alone.

Quality managers should verify whether the fixture can support traceable workflows. That includes documenting setup state, defining acceptance checks, and confirming that maintenance actions restore the fixture to a known condition. For project managers, the key question is whether the fixture can scale from pilot to volume without requiring complete redesign after 1 or 2 months of use.

Distributors, system integrators, and agents should pay attention to after-sales support expectations. If a fixture contains consumables that need replacement every 3 months, support kits, spare plans, and service response time should be discussed before rollout. This is particularly important in international deployments where shipping delays can stop a line for several days.

For organizations benchmarking solutions against recognized metrology and measurement practice, it is wise to request a clear validation package: initial correlation results, maintenance guidance, electrical path description, and verification recommendations tied to the actual application. That package reduces disputes between engineering, operations, and suppliers later.

Pre-approval checklist for fixture procurement

Before issuing a purchase order or releasing a build to production, teams can use the following checklist to structure technical and commercial review.

This checklist shows that technical validation and commercial approval should happen together. A fixture with a short quoted lead time but weak maintenance access or incomplete verification data can create larger downstream risk than a slightly slower but better-documented option.

FAQ: Practical Questions About Fixture-Driven Test Errors

How can teams tell whether the test fixture is the real problem?

Start with repeat tests across different fixtures, operators, and stations. If the same device shows inconsistent results only on one setup, the fixture is a strong suspect. Also review cycle count, connector wear, contact resistance behavior, and whether readings change when cables are moved or the unit is reseated. These are classic signs of fixture-driven instability.

How often should a production fixture be verified or maintained?

There is no single universal interval, but many teams use a mixed trigger approach: daily visual checks, weekly functional verification, and scheduled maintenance based on cycle count such as every 10,000, 50,000, or 100,000 insertions depending on the contact system. High-frequency and low-level signal fixtures often need tighter controls than simple continuity fixtures.

Are fixture errors only a concern in RF testing?

No. RF systems make fixture weaknesses easier to see, but low-voltage sensor interfaces, mixed-signal boards, battery management circuits, cable harnesses, and industrial I/O assemblies can all suffer from contact variation, grounding problems, and mechanical misalignment. The risk exists anywhere the fixture becomes part of the measurement path.

What is the most overlooked buying criterion?

Serviceability is often underestimated. A fixture that takes 45 minutes to disassemble for a probe change may be technically acceptable on paper, but in real production it encourages delayed maintenance. Designs that allow critical consumables to be inspected and replaced in 10–15 minutes are generally easier to sustain without measurement drift.

Fixture design errors are one of the most preventable causes of electrical test instability. When organizations treat the fixture as part of the measurement system, they gain better repeatability, lower false-fail risk, stronger station correlation, and clearer lifecycle control. That matters to engineers running validation, operators maintaining output, quality teams protecting traceability, and buyers managing total cost.

For companies evaluating electrical test, spectrum analysis, industrial sensor validation, or broader sensory-technology workflows, a disciplined fixture strategy can improve confidence long before software tuning or instrument upgrades are considered. If you need support comparing fixture requirements, reviewing measurement risk, or aligning test setups with practical benchmark criteria, contact us to discuss your application, request a tailored solution, or learn more about implementation options.
