Automated Vision Inspection Systems and False Reject Costs

Automated vision inspection systems can reduce escapes and speed up quality decisions, but false rejects often become the hidden cost center that undermines those gains. When a good part is incorrectly flagged as defective, the result is not just scrap or rework—it can trigger line stoppages, extra operator checks, lower throughput, and unnecessary friction between production and quality teams. For companies evaluating 3D vision inspection systems, advanced metrology solutions, and 3D scanning for quality control, the key question is not whether automation works, but how to control false reject rates without weakening defect detection.

For researchers and inspection operators, the practical questions are these: what causes false rejects in automated vision inspection, how much do they really cost, and how can they be reduced in production? The most useful answer is not a generic definition of machine vision but a decision-focused view of root causes, business impact, troubleshooting logic, and system-selection criteria. False rejects rarely have a single cause. They usually result from a combination of lighting instability, part variation, tolerance misalignment, image-processing logic, fixture inconsistency, or a poor handoff between vision data and quality standards.

Why false rejects matter more than many teams expect

A false reject happens when the inspection system classifies an acceptable part as nonconforming. On paper, this may look like a minor calibration issue. In practice, it can create a chain of cost that is far larger than the price of the rejected part itself.

The most common cost areas include:

- Yield loss: Good parts are removed from the flow, reducing first-pass yield.

- Manual re-inspection labor: Operators or quality technicians must verify flagged parts.

- Reduced line throughput: Frequent rejects slow production and create bottlenecks.

- Unnecessary rework or scrap: Parts may be reworked even when they were originally acceptable.

- Operator distrust: Teams begin to bypass or question the inspection system.

- Customer risk: When confidence in the inspection logic drops, process discipline often drops with it, which raises the chance that real defects reach customers.

For high-volume manufacturing, even a small false reject rate can become expensive very quickly. If a line runs tens of thousands of units per shift, a 1% false reject rate may translate into hundreds of unnecessary interventions per day. In sectors with tight tolerances—such as electronics, aerospace components, medical devices, and precision assemblies—the indirect cost is often greater than the direct cost because engineering time, containment activity, and production scheduling are affected.
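The arithmetic behind that estimate is easy to reproduce. The sketch below assumes the false reject rate is expressed as a fraction of all units inspected and uses hypothetical volumes (20,000 units per shift, three shifts per day). Substitute your own line data.

```python
def daily_false_rejects(units_per_shift: int, shifts_per_day: int,
                        false_reject_rate: float) -> float:
    """Expected number of parts wrongly rejected per day.

    Assumes the rate is a fraction of all units inspected (0.01 == 1%).
    """
    return units_per_shift * shifts_per_day * false_reject_rate


# Hypothetical line: 20,000 units per shift, 3 shifts, 1% false reject rate
print(daily_false_rejects(20_000, 3, 0.01))  # 600.0
```

Each of those events is a potential manual check, and that is where the indirect cost starts to accumulate.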

What usually causes false rejects in automated vision inspection systems

False rejects rarely come from “bad software” alone. They are usually symptoms of mismatch between the real production environment and the assumptions built into the inspection setup.

1. Lighting variation

Lighting is one of the most common causes. Changes in intensity, angle, reflection, ambient interference, or part surface response can make a good feature appear defective. Glossy, translucent, reflective, or textured materials are especially sensitive. If illumination is not stable, image thresholds become unstable too.
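A minimal sketch of why a fixed intensity threshold becomes unstable under lighting drift follows. It assumes a simple gain-only model in which a change in illumination scales all gray levels by the same factor. The function names and the reference-patch approach are illustrative assumptions: the normalized check divides the feature's brightness by a stable reference target imaged in the same frame, so a uniform drift cancels out. Glossy or translucent surfaces often do not respond uniformly, so treat this as a teaching model rather than a fix.

```python
def passes_fixed(feature_gray: float, min_gray: float = 100.0) -> bool:
    # Absolute rule: the feature must be at least this bright to pass
    return feature_gray >= min_gray


def passes_normalized(feature_gray: float, reference_gray: float,
                      min_ratio: float = 0.5) -> bool:
    # Relative rule: compare the feature with a reference patch in the same image,
    # so a uniform change in illumination scales numerator and denominator together
    return feature_gray / reference_gray >= min_ratio


# Same good part under nominal lighting and under lighting that drifted 20% dimmer
nominal = {"feature": 120.0, "reference": 200.0}
dimmed = {"feature": 96.0, "reference": 160.0}

for name, img in (("nominal", nominal), ("dimmed", dimmed)):
    print(name,
          passes_fixed(img["feature"]),
          passes_normalized(img["feature"], img["reference"]))
```

The fixed rule rejects the dimmed image of a good part, while the normalized rule still passes it.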

2. Part presentation inconsistency

Vision systems depend on repeatable positioning. If a part arrives with slight rotation, tilt, height shift, or fixture variation, the same feature can look different from one cycle to the next. This is particularly problematic in 2D inspection, because a single 2D image cannot separate a change in depth or tilt from a change in the feature itself, so edge detection and dimensional interpretation are distorted.
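To see how much a small tilt can matter, the sketch below assumes an orthographic (telecentric-like) view, in which a straight feature tilted out of the image plane appears shortened by the cosine of the tilt angle. With a conventional lens, height changes also alter magnification, so the real error can be larger. The 10 mm feature and the tilt angles are hypothetical.

```python
import math


def apparent_length(true_length_mm: float, tilt_deg: float) -> float:
    # Orthographic projection: a feature tilted by tilt_deg out of the image
    # plane is foreshortened by cos(tilt)
    return true_length_mm * math.cos(math.radians(tilt_deg))


for tilt in (0.0, 2.0, 5.0):
    measured = apparent_length(10.0, tilt)
    print(f"tilt {tilt} deg -> apparent {measured:.4f} mm, "
          f"error {10.0 - measured:.4f} mm")
```

Under this model, a 5 degree tilt shortens the apparent length by roughly 0.038 mm, enough to fail a good part against a hypothetical ±0.02 mm tolerance.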

3. Tolerance logic that does not match manufacturing reality

Some systems are configured around ideal CAD geometry or narrow pass/fail windows without enough allowance for acceptable process variation. When inspection thresholds are tighter than the engineering specification—or tighter than the process can realistically hold—false rejects rise immediately.
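If the feature is roughly normally distributed, the effect of over-tight limits can be estimated. The sketch below is an assumption-laden illustration, not a capability study. It computes the fraction of all parts that are inside the engineering specification yet outside a tighter inspection window, and it ignores measurement noise, which in practice adds further false rejects near the limits. The process mean, standard deviation, and limits are hypothetical.

```python
from math import erf, sqrt


def normal_cdf(x: float, mu: float, sigma: float) -> float:
    return 0.5 * (1.0 + erf((x - mu) / (sigma * sqrt(2.0))))


def fraction_within(lo: float, hi: float, mu: float, sigma: float) -> float:
    return normal_cdf(hi, mu, sigma) - normal_cdf(lo, mu, sigma)


def false_reject_fraction(spec: tuple, inspect: tuple,
                          mu: float, sigma: float) -> float:
    """Fraction of parts that meet spec but fall outside the inspection window.

    Assumes the inspection window lies inside the spec window.
    """
    if not (spec[0] <= inspect[0] <= inspect[1] <= spec[1]):
        raise ValueError("inspection window must sit inside the spec window")
    return fraction_within(*spec, mu, sigma) - fraction_within(*inspect, mu, sigma)


# Hypothetical feature: spec 10.00 +/- 0.05 mm, process mean 10.00 mm, sigma 0.01 mm,
# but the vision recipe was set to 10.00 +/- 0.02 mm
fr = false_reject_fraction((9.95, 10.05), (9.98, 10.02), mu=10.00, sigma=0.01)
print(f"{fr:.2%} of parts rejected despite meeting spec")
```

Under these assumptions, roughly 4.5% of production is rejected even though the process comfortably meets the ±0.05 mm specification.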

4. Weak image-processing strategy

Rule-based tools can fail when feature contrast changes, when contamination is present, or when real-world part appearance is more variable than expected. Edge tools, blob analysis, pattern matching, and thresholding all have limits. If the algorithm is brittle, the reject rate may increase even when defect conditions have not changed.
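Thresholding on an absolute gray level is one example of brittle logic. The sketch below uses made-up 1D intensity profiles across a rising edge and compares an edge located at a fixed gray level with one located at 50% of the local contrast (midway between the darkest and brightest samples). The function names and profiles are hypothetical; production edge tools typically add smoothing and gradient analysis.

```python
def edge_position(profile, level):
    """Sub-pixel index where the profile first rises through `level`.

    Uses linear interpolation between neighbouring samples; returns None
    if the profile never crosses the level on a rising step.
    """
    for i in range(1, len(profile)):
        a, b = profile[i - 1], profile[i]
        if a < level <= b:  # guarantees b > a, so no division by zero
            return (i - 1) + (level - a) / (b - a)
    return None


def edge_fixed(profile, level=100.0):
    # Absolute threshold: depends directly on image brightness
    return edge_position(profile, level)


def edge_relative(profile, fraction=0.5):
    # Relative threshold: placed at a fraction of the local contrast
    lo, hi = min(profile), max(profile)
    return edge_position(profile, lo + fraction * (hi - lo))


bright = [20, 20, 20, 100, 180, 180, 180]
dim = [12, 12, 12, 60, 108, 108, 108]  # same edge, 40% less light

print(edge_fixed(bright), edge_relative(bright))  # 3.0 3.0
print(edge_fixed(dim), edge_relative(dim))
```

On the dimmer profile, the fixed-level edge moves by about 0.83 pixel while the relative edge stays at 3.0, even though the part has not changed.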

5. Inadequate training data in AI-enabled systems

AI-based classification can improve robustness, but only if trained on representative data. If the model has seen too few examples of acceptable part variation, it may over-classify normal parts as defects. This is a common issue when datasets are too clean, too small, or not regularly updated.
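Below is a stripped-down illustration of the "too clean" dataset problem. It assumes a model that outputs an anomaly score (higher means more defect-like) and a reject threshold calibrated as the highest score seen on good training parts. Both the scores and the calibration rule are hypothetical; real AI inspection tools use more sophisticated calibration, but the failure mode is similar.

```python
def threshold_from_goods(good_scores, margin=0.0):
    # Hypothetical calibration: reject anything above the worst-scoring good sample
    return max(good_scores) + margin


def false_reject_rate(good_scores, threshold):
    # Share of known-good parts whose score exceeds the reject threshold
    return sum(s > threshold for s in good_scores) / len(good_scores)


# Illustrative anomaly scores, not real data
clean_training = [0.10, 0.12, 0.11, 0.13, 0.12]  # one lot, ideal parts
representative_training = clean_training + [0.21, 0.25, 0.19, 0.27]  # adds acceptable variation
production_goods = [0.11, 0.14, 0.22, 0.26, 0.12, 0.18, 0.24, 0.13, 0.20, 0.10]

for name, training in (("clean", clean_training),
                       ("representative", representative_training)):
    t = threshold_from_goods(training)
    print(name, t, false_reject_rate(production_goods, t))
```

Calibrating only on ideal parts rejects 60% of these acceptable production parts, and including acceptable variation drops that to zero in this toy example. The wider threshold sits closer to real defects, so it still has to be validated against defect samples.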

6. Poor correlation between vision inspection and metrology reference

If the automated vision inspection system is not validated against a trusted reference, such as calibrated metrology equipment or higher-accuracy offline measurement, teams may not know whether the system is rejecting correctly. Disputes between production and quality then take the place of a traceable measurement process.
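Once a reference exists, agreement can be tracked explicitly. The sketch below assumes each inspected part has a vision decision and a reference verdict (for example, from offline metrology). It computes the false reject rate among reference-conforming parts and the false accept rate among reference-nonconforming parts. The function name, input format, and study counts are hypothetical.

```python
from collections import Counter


def decision_agreement(pairs):
    """pairs: iterable of (vision_rejected, reference_conforming) booleans."""
    counts = Counter()
    for vision_rejected, conforming in pairs:
        if conforming:
            counts["false_reject" if vision_rejected else "true_accept"] += 1
        else:
            counts["true_reject" if vision_rejected else "false_accept"] += 1

    good = counts["false_reject"] + counts["true_accept"]
    bad = counts["true_reject"] + counts["false_accept"]
    return {
        "false_reject_rate": counts["false_reject"] / good if good else float("nan"),
        "false_accept_rate": counts["false_accept"] / bad if bad else float("nan"),
        "counts": dict(counts),
    }


# Illustrative study: 48 reference-good parts and 10 reference-bad parts
pairs = ([(True, True)] * 3 + [(False, True)] * 45
         + [(True, False)] * 9 + [(False, False)] * 1)
result = decision_agreement(pairs)
print(result["false_reject_rate"], result["false_accept_rate"])  # 0.0625 0.1
```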

How to calculate the real cost of false rejects

Many companies underestimate false reject cost because they look only at scrap. A better calculation includes both direct and indirect effects.

A practical framework includes:

- Cost per good part removed

- Operator handling time per reject

- Quality technician verification time

- Line slowdown or stoppage cost

- Rework cost

- Scrap cost from unnecessary disposition

- Engineering troubleshooting cost

- Lost production opportunity

A simple formula can look like this:

Total False Reject Cost = (False Reject Quantity × Part Value Impact) + Manual Review Cost + Throughput Loss + Rework/Scrap Cost + Engineering Support Cost

For example, if a production line falsely rejects 300 parts per day, and each event creates an average combined impact of labor, delay, and handling worth $8, that is $2,400 per day. Over a 250-day production year, that becomes $600,000. In many operations, this number is high enough to justify better lighting design, fixture redesign, algorithm improvement, or migration from 2D vision to 3D vision inspection systems.
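The formula translates directly into a small calculator. In this sketch the field names are hypothetical. To reproduce the worked example, the combined $8 per-event impact is placed in the per-part term and the other terms are left at zero, so labor and delay are not counted twice.

```python
from dataclasses import dataclass


@dataclass
class FalseRejectCostInputs:
    false_rejects: int                    # good parts wrongly rejected in the period
    part_value_impact: float              # cost per wrongly rejected part ($)
    manual_review_cost: float = 0.0
    throughput_loss: float = 0.0
    rework_scrap_cost: float = 0.0
    engineering_support_cost: float = 0.0


def total_false_reject_cost(x: FalseRejectCostInputs) -> float:
    # Total = quantity x per-part impact + review + throughput + rework/scrap + engineering
    return (x.false_rejects * x.part_value_impact
            + x.manual_review_cost + x.throughput_loss
            + x.rework_scrap_cost + x.engineering_support_cost)


# The worked example folds labor, delay, and handling into one $8 per-event figure
daily = total_false_reject_cost(FalseRejectCostInputs(false_rejects=300, part_value_impact=8.0))
annual = daily * 250
print(daily, annual)  # 2400.0 600000.0
```

When the separate components are known, entering them individually (and lowering the per-part figure accordingly) makes it easier to see which one dominates.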

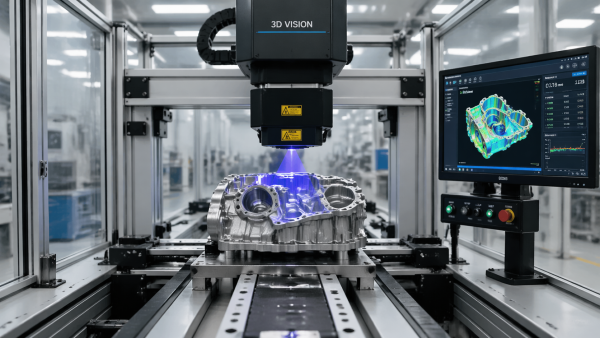

When 3D vision inspection systems can reduce false rejects

Not every false reject problem requires a 3D system. But when inspection failure is caused by height variation, orientation instability, part warpage, feature depth, or complex geometry, 3D inspection often provides a more reliable basis for decision-making than 2D images alone.

3D vision inspection systems are especially useful when:

- Feature acceptability depends on height, volume, flushness, coplanarity, or profile

- Part surface finish causes unstable 2D contrast

- Shadowing or reflection affects edge-based decisions

- Assembly verification requires depth confirmation

- Positional variation makes 2D measurements inconsistent

By capturing depth information, 3D systems can separate true defects from appearance changes that do not affect conformance. This often reduces false rejects in applications such as adhesive bead inspection, connector pin inspection, weld verification, gap-and-flush analysis, molded-part profile checks, and battery assembly inspection.
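As an example of a decision that depends on height rather than appearance, the sketch below estimates pin coplanarity from 3D tip coordinates. It fits a least-squares reference plane z = a*x + b*y + c and reports the spread of the vertical residuals. This is one common simplification; some specifications measure coplanarity relative to a seating plane instead, so the reference definition should follow your drawing or standard. The pin coordinates are hypothetical.

```python
def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def fit_plane(points):
    """Least-squares plane z = a*x + b*y + c via the normal equations (Cramer's rule)."""
    n = len(points)
    sx = sum(x for x, _, _ in points)
    sy = sum(y for _, y, _ in points)
    sz = sum(z for _, _, z in points)
    sxx = sum(x * x for x, _, _ in points)
    syy = sum(y * y for _, y, _ in points)
    sxy = sum(x * y for x, y, _ in points)
    sxz = sum(x * z for x, _, z in points)
    syz = sum(y * z for _, y, z in points)

    a_mat = [[sxx, sxy, sx], [sxy, syy, sy], [sx, sy, n]]
    rhs = [sxz, syz, sz]
    d = det3(a_mat)
    if d == 0:
        raise ValueError("need at least three non-collinear points")

    coeffs = []
    for col in range(3):
        m = [row[:] for row in a_mat]
        for r in range(3):
            m[r][col] = rhs[r]  # replace one column with the right-hand side
        coeffs.append(det3(m) / d)
    return tuple(coeffs)  # (a, b, c)


def coplanarity(points):
    """Spread (max - min) of vertical residuals from the least-squares plane."""
    a, b, c = fit_plane(points)
    residuals = [z - (a * x + b * y + c) for x, y, z in points]
    return max(residuals) - min(residuals)


# Hypothetical 2x3 connector: pin tips on a slightly tilted plane (mm)
grid = [(x, y) for y in (0.0, 1.0) for x in (0.0, 1.0, 2.0)]
nominal = [(x, y, 1.0 + 0.01 * x + 0.02 * y) for x, y in grid]
lifted = [(x, y, z + (0.05 if (x, y) == (0.0, 0.0) else 0.0)) for x, y, z in nominal]

print(round(coplanarity(nominal), 6), round(coplanarity(lifted), 6))
```

The tilted but flat set reports essentially zero, because the plane fit absorbs the tilt. Lifting one corner pin by 0.05 mm reports about 0.0375 mm, since part of the lift is absorbed by the fit itself; this is why the choice of reference plane matters.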

However, 3D does not automatically solve everything. If the root issue is unstable fixturing, poor tolerance setting, or a bad pass/fail strategy, adding 3D hardware alone may simply produce more complex data without improving decisions.

How advanced metrology and 3D scanning help validate inspection logic

When teams repeatedly argue over whether the vision system is “too strict” or “not accurate enough,” the best next step is often independent measurement validation. This is where advanced metrology solutions and 3D scanning for quality control become valuable.

These tools help in three critical ways:

- Reference truth: They provide higher-confidence measurement for disputed features.

- Tolerance alignment: They reveal whether the vision system’s pass/fail thresholds match engineering requirements.

- Root-cause isolation: They show whether the problem comes from optics, part presentation, part variation, or system programming.

For example, if a vision system rejects a feature based on edge location, a 3D scan may show that the feature is within dimensional tolerance but visually inconsistent due to material texture. In that case, the issue is not part quality—it is inspection strategy. Likewise, metrology validation can uncover the opposite: some “false rejects” are actually true process warnings that were previously misunderstood.

In mature quality environments, automated vision should not operate as a standalone judgment engine. It should be part of a measurement ecosystem with traceable reference methods, gauge correlation studies, and periodic revalidation.

What operators and engineers should check first when false rejects increase

For users and operators, fast troubleshooting matters. When false rejects suddenly rise, the most effective response is a structured check rather than immediate threshold widening.

Start with the following sequence:

- Confirm whether the rejected parts are truly good using a trusted manual or metrology reference.

- Check lighting stability for drift, contamination, aging, or ambient interference.

- Inspect fixturing and part positioning for looseness, wear, vibration, or offset.

- Review recent recipe changes including thresholds, regions of interest, model updates, or software patches.

- Compare current images with known-good historical images to identify visual shifts.

- Segment rejects by defect type, product variant, shift, and machine to locate patterns.

- Verify camera focus, lens cleanliness, and exposure settings.

- Check upstream process changes such as material lot, coating variation, molding condition, or assembly alignment.

This structured method prevents a common mistake: loosening inspection sensitivity too quickly. If teams reduce sensitivity just to lower false rejects, they may increase false accepts and ship defects. The objective is not fewer rejects at any cost; it is better discrimination between conforming and nonconforming parts.
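The trade-off can be made visible with a threshold sweep over parts whose true status is known. In this sketch the scores are illustrative (higher means more defect-like) and a part is rejected when its score reaches the threshold. Raising the threshold, which loosens inspection, lowers the false reject rate while the false accept rate climbs.

```python
def rates_at(threshold, good_scores, bad_scores):
    # Reject when score >= threshold
    fr = sum(s >= threshold for s in good_scores) / len(good_scores)  # good parts rejected
    fa = sum(s < threshold for s in bad_scores) / len(bad_scores)     # bad parts accepted
    return fr, fa


# Illustrative scores for known-good and known-bad parts, not real data
good = [0.10, 0.15, 0.20, 0.25, 0.30, 0.35, 0.40, 0.45]
bad = [0.38, 0.50, 0.55, 0.60, 0.70, 0.80]

for t in (0.30, 0.40, 0.52):
    fr, fa = rates_at(t, good, bad)
    print(f"threshold {t:.2f}: false reject {fr:.0%}, false accept {fa:.0%}")
```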

How to evaluate a vision inspection system for lower false reject risk

For buyers and researchers comparing systems, a strong vendor demo is not enough. The real question is how well the solution performs under production variation, not under ideal conditions.

Key evaluation criteria include:

- Measurement repeatability and reproducibility under actual plant conditions

- Sensitivity to lighting, surface finish, and orientation changes

- Tolerance setup flexibility aligned to real process capability

- Support for multi-feature decision logic rather than single-threshold pass/fail

- Correlation with external metrology

- Operator usability and recipe control

- Data logging for reject analysis

- AI model governance if machine learning is used
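Logged inspection results are most useful when rejects can be broken down by shift, station, SKU, or material lot, as in the troubleshooting checklist earlier. A minimal sketch follows. It assumes each logged record is a dictionary with hypothetical field names and a boolean `rejected` flag.

```python
from collections import defaultdict


def reject_rate_by(records, key):
    """Reject rate per value of `key` (e.g. "shift" or "station")."""
    totals = defaultdict(int)
    rejects = defaultdict(int)
    for rec in records:
        group = rec[key]
        totals[group] += 1
        rejects[group] += int(rec["rejected"])
    return {group: rejects[group] / totals[group] for group in totals}


# Hypothetical log extract
records = [
    {"shift": "A", "station": "S1", "rejected": False},
    {"shift": "A", "station": "S2", "rejected": False},
    {"shift": "B", "station": "S1", "rejected": True},
    {"shift": "B", "station": "S2", "rejected": False},
    {"shift": "B", "station": "S1", "rejected": True},
    {"shift": "A", "station": "S1", "rejected": False},
]
print(reject_rate_by(records, "shift"))
print(reject_rate_by(records, "station"))
```

A pattern concentrated on one shift and station, as in this toy log, points toward setup, lighting, or fixturing at that station rather than a product-wide problem.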

Ask vendors for evidence from applications with similar materials, defect types, cycle times, and tolerance classes. If possible, run a pilot using your own production samples, including known good parts with natural variation—not just obvious defects. This is the best way to estimate false reject behavior before full deployment.
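Pilot results should be read with their statistical uncertainty in mind, because false reject counts from a few thousand parts are small. The sketch below uses the Wilson score interval, one standard way to put approximate confidence bounds on a proportion. The pilot counts are hypothetical.

```python
from math import sqrt


def wilson_interval(rejects: int, n: int, z: float = 1.96):
    """Approximate confidence interval (about 95% for z = 1.96) for a false reject rate."""
    if n == 0:
        raise ValueError("no parts inspected")
    p = rejects / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return max(0.0, center - half), min(1.0, center + half)


# Hypothetical pilots on known-good parts
print(wilson_interval(6, 2000))  # 6 false rejects in 2,000 good parts
print(wilson_interval(0, 500))   # zero false rejects in 500 good parts
```

Even a pilot with zero false rejects on 500 good parts only supports an upper bound of roughly 0.76% at about 95% confidence, so larger samples are needed to confirm very low rates.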

Best practices to reduce false rejects without missing real defects

The most effective quality teams treat false reject reduction as a system-improvement project, not a one-time tuning exercise.

Best practices include:

- Design lighting and optics around the actual surface condition of the part

- Improve fixturing and part presentation before changing software logic

- Use golden samples, but supplement them with good parts that span the acceptable variation range

- Validate thresholds against engineering tolerances and process capability

- Apply multi-stage decisions, such as auto-pass, auto-fail, and review zone logic

- Use 3D scanning for quality control or offline metrology to confirm disputed results

- Monitor false reject trends by SKU, shift, station, and material lot

- Retrain AI models with representative edge cases when needed

- Document change control for recipes, optics, illumination, and models
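The multi-stage decision mentioned above can be expressed as two thresholds instead of one. This minimal sketch assumes a score where higher means more defect-like. Parts below the lower threshold pass automatically, parts at or above the upper threshold fail automatically, and parts in between go to a review zone for an operator or metrology check. The threshold values are hypothetical and should come from validation data.

```python
from enum import Enum


class Decision(Enum):
    AUTO_PASS = "auto-pass"
    REVIEW = "review"
    AUTO_FAIL = "auto-fail"


def classify(score: float, pass_below: float, fail_at_or_above: float) -> Decision:
    # Hypothetical score: higher means more defect-like
    if pass_below > fail_at_or_above:
        raise ValueError("pass_below must not exceed fail_at_or_above")
    if score < pass_below:
        return Decision.AUTO_PASS
    if score >= fail_at_or_above:
        return Decision.AUTO_FAIL
    return Decision.REVIEW


for s in (0.10, 0.45, 0.80):
    print(s, classify(s, pass_below=0.30, fail_at_or_above=0.60).value)
```

Compared with a single threshold, the review zone lets borderline good parts be recovered without loosening the auto-fail limit, so false accept risk stays where it was.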

In many factories, the biggest improvement does not come from buying the most advanced hardware, but from building a disciplined validation workflow around the system already in place.

Conclusion: the goal is not just automated inspection, but trustworthy inspection

Automated vision inspection systems deliver value only when their decisions are reliable enough to support production, quality, and customer requirements at the same time. False rejects are costly because they damage more than yield—they weaken trust in the inspection process and blur the line between real defects and process noise.

For teams evaluating automated vision inspection systems, the right approach is to look beyond detection speed and image resolution. Focus on false reject behavior, measurement correlation, tolerance logic, part variation, and validation against advanced metrology solutions or 3D vision inspection systems where appropriate. If the system can distinguish real defects from normal variation consistently, it becomes a true quality asset rather than a source of hidden operational cost.

In short, reducing false rejects is not only a technical tuning task. It is a strategic quality decision that directly affects throughput, labor efficiency, cost control, and confidence in zero-defect manufacturing.
